<p>LinkedIn accused of using private messages to train AI <br><a href="https://www.bbc.com/news/articles/cdxevpzy3yko" rel="nofollow" class="ellipsis" title="www.bbc.com/news/articles/cdxevpzy3yko"><span class="invisible">https://</span><span class="ellipsis">www.bbc.com/news/articles/cdxe</span><span class="invisible">vpzy3yko</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/linkedin/" rel="tag">#LinkedIn</a> <a href="/tags/microsoft/" rel="tag">#Microsoft</a></p>


<p>Has anyone put together an article that lists all the different walkthroughs for disabling AI in various programs/services?</p><p>Something like this: <a href="https://stefanbohacek.com/blog/opting-out-of-ai-in-popular-software-and-services/" rel="nofollow" class="ellipsis" title="stefanbohacek.com/blog/opting-out-of-ai-in-popular-software-and-services/"><span class="invisible">https://</span><span class="ellipsis">stefanbohacek.com/blog/opting-</span><span class="invisible">out-of-ai-in-popular-software-and-services/</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a></p>


<p>Abstract: This paper will look at the various predictions that have been made about AI and propose decomposition schemas for analyzing them. It will propose a variety of theoretical tools for analyzing, judging, and improving these predictions. Focusing specifically on timeline predictions (dates given by which we should expect the creation of AI), it will show that there are strong theoretical grounds to expect predictions to be quite poor in this area. Using a database of 95 AI timeline predictions, it will show that these expectations are borne out in practice: expert predictions contradict each other considerably, and are indistinguishable from non-expert predictions and past failed predictions. Predictions that AI lie 15 to 25 years in the future are the most common, from experts and non-experts alike.<br></p>Emphasis added. From <a href="https://intelligence.org/files/PredictingAI.pdf" rel="nofollow" class="ellipsis" title="intelligence.org/files/PredictingAI.pdf"><span class="invisible">https://</span><span class="ellipsis">intelligence.org/files/Predict</span><span class="invisible">ingAI.pdf</span></a><br>Armstrong, Stuart, and Kaj Sotala. 2012. “How We’re Predicting AI—or Failing To.” In Beyond AI: Artificial Dreams, edited by Jan Romportl, Pavel Ircing, Eva Zackova, Michal Polak, and Radek Schuster, 52–75. Pilsen: University of West Bohemia.<br><br>Note that this is from 2012.<br><br>One wonders what exactly an expert is when it comes to AI, if their track records are so consistently poor and unresponsive to their own failures.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

<p>Virgin Money's AI-powered chatbot will scold you if you use the word "virgin".</p><p><a href="https://www.ft.com/content/670f5896-1fe5-4a31-b41f-ad4f5b91202f" rel="nofollow" class="ellipsis" title="www.ft.com/content/670f5896-1fe5-4a31-b41f-ad4f5b91202f"><span class="invisible">https://</span><span class="ellipsis">www.ft.com/content/670f5896-1f</span><span class="invisible">e5-4a31-b41f-ad4f5b91202f</span></a></p><p>Archived link: <a href="https://archive.is/gGVgt" rel="nofollow"><span class="invisible">https://</span>archive.is/gGVgt</a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/chatbots/" rel="tag">#ChatBots</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/virginmoney/" rel="tag">#VirginMoney</a></p>

<p>DeepSeek launched a free, open-source large-language model in late December, claiming it was developed in just two months at a cost of under $6 million — a much smaller expense than the one called for by Western counterparts.<br><br>These developments have stoked concerns about the amount of money big tech companies have been investing in AI models and data centers, and raised alarm that the U.S. is not leading the sector as much as previously believed.<br></p>The "Western counterparts" are claiming training a model might take years and billions of dollars. This has always been a hyped-up grift, with snake oil salesmen and con artists being showered with money and power. It's really quite amazing how profoundly unintelligent "the market" is in practice.<br><br>The sad reality is that the US could lead in this field (1), if we'd stop routinely putting narcissists and con artists in charge and showering them with praise even when they fail.<br><br>From <a href="https://www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html" rel="nofollow" class="ellipsis" title="www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html"><span class="invisible">https://</span><span class="ellipsis">www.cnbc.com/2025/01/27/nvidia</span><span class="invisible">-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/snakeoil/" rel="tag">#SnakeOil</a> <a href="/tags/hype/" rel="tag">#hype</a> <a href="/tags/grift/" rel="tag">#grift</a> <a href="/tags/marketcapitalism/" rel="tag">#MarketCapitalism</a><br><br>(1) Putting aside whether we should, which is an important question.<br>

"You need suckers who think AI is effectively magic:<br><br>… people with lower AI literacy perceive AI to be more magical, and thus experience greater feelings of awe when thinking about AI completing tasks, which explain their greater receptivity towards using AI-based products and services. <br><br>AI marketing is 100% about whether the sucker is sufficiently wowed by an impressive demo."<br><br>From <a href="https://econtwitter.net/users/amycastor/statuses/113902204443133686" rel="nofollow" class="ellipsis" title="econtwitter.net/users/amycastor/statuses/113902204443133686"><span class="invisible">https://</span><span class="ellipsis">econtwitter.net/users/amycasto</span><span class="invisible">r/statuses/113902204443133686</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/snakeoil/" rel="tag">#SnakeOil</a><br>

<p>"But to [Aaron, the creator of the anti-AI software Nepenthes], the fight is not about winning. Instead, it's about resisting the AI industry further decaying the Internet [...]."</p><p>Or to paraphrase Jean-Paul Sartre:</p><p>"You don’t fight enshittification because you are going to win, you fight enshittification because it is enshittification."</p><p><a href="https://arstechnica.com/tech-policy/2025/01/ai-haters-build-tarpits-to-trap-and-trick-ai-scrapers-that-ignore-robots-txt/" rel="nofollow" class="ellipsis" title="arstechnica.com/tech-policy/2025/01/ai-haters-build-tarpits-to-trap-and-trick-ai-scrapers-that-ignore-robots-txt/"><span class="invisible">https://</span><span class="ellipsis">arstechnica.com/tech-policy/20</span><span class="invisible">25/01/ai-haters-build-tarpits-to-trap-and-trick-ai-scrapers-that-ignore-robots-txt/</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/nepenthes/" rel="tag">#nepenthes</a> <a href="/tags/enshittification/" rel="tag">#enshittification</a></p>

<p>"[California Attorney General Rob Bonta’s] memo clearly illustrates what a legal clusterfuck the AI industry represents, though it doesn’t even get around to mentioning U.S. copyright law, which is another legal gray area where AI companies are perpetually running into trouble."</p><p><a href="https://gizmodo.com/californias-ag-tells-ai-companies-practically-everything-theyre-doing-might-be-illegal-2000555896" rel="nofollow" class="ellipsis" title="gizmodo.com/californias-ag-tells-ai-companies-practically-everything-theyre-doing-might-be-illegal-2000555896"><span class="invisible">https://</span><span class="ellipsis">gizmodo.com/californias-ag-tel</span><span class="invisible">ls-ai-companies-practically-everything-theyre-doing-might-be-illegal-2000555896</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/ai/" rel="tag">#AI</a></p>


<p><a href="/tags/funny/" rel="tag">#funny</a> <a href="/tags/meme/" rel="tag">#meme</a> <a href="/tags/shitpost/" rel="tag">#shitpost</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/deepseek/" rel="tag">#deepseek</a> <a href="/tags/tiananmen/" rel="tag">#Tiananmen</a></p>

<p>I made this</p><p><a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/deepseek/" rel="tag">#DeepSeek</a></p>

<p>I've been reading up on the Lottery Ticket Hypothesis, which is super interesting.</p><p>Basically, the observation is that these days we build vast neural networks with billions of parameters, but most of the parameters aren't needed. That is, after training, you can just throw away 95% of the network (pruning), and it will still work fine.</p><p>The LTH paper is asking: could we start with a network just 5% of the size and get comparable results? If so, that would be a huge performance win for Deep Learning.</p><p>What's interesting is that you can do this, but only by training the full network (perhaps several times) to see which weights are needed. They argue that training a neural network isn't so much creating a model as finding a lucky sub-network (a lottery ticket) within the randomly initialized network, a bit like a sculptor "finding" the bust hidden in a block of marble.</p><p>Initial LTH paper: <a href="http://arxiv.org/abs/1803.03635" rel="nofollow"><span class="invisible">http://</span>arxiv.org/abs/1803.03635</a><br>Follow-up with major clarifications: <a href="http://arxiv.org/abs/1905.01067" rel="nofollow"><span class="invisible">http://</span>arxiv.org/abs/1905.01067</a></p><p><a href="/tags/science/" rel="tag">#science</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/machinelearning/" rel="tag">#machinelearning</a></p>
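A minimal sketch of the winning-ticket procedure from the initial LTH paper (train the full model, prune the smallest-magnitude weights, rewind the surviving weights to their original initial values, retrain), assuming a toy linear model trained by plain gradient descent rather than a deep network. The data, the sparse "true" weights, the 10% keep fraction, and every function name here are hypothetical illustration choices, and the paper's iterative pruning is simplified to a single round.

```python
import random


def make_data(n_samples, n_features, true_weights, rng):
    """Noiseless linear data: y = true_weights . x with Gaussian inputs."""
    xs = [[rng.gauss(0.0, 1.0) for _ in range(n_features)] for _ in range(n_samples)]
    ys = [sum(w * x for w, x in zip(true_weights, row)) for row in xs]
    return xs, ys


def mse(weights, xs, ys):
    """Mean squared error of a linear model over the dataset."""
    total = 0.0
    for row, y in zip(xs, ys):
        pred = sum(w * x for w, x in zip(weights, row))
        total += (pred - y) ** 2
    return total / len(xs)


def train(init, mask, xs, ys, lr=0.05, epochs=200):
    """Full-batch gradient descent on MSE; weights with mask 0 stay at zero."""
    w = [wi * mi for wi, mi in zip(init, mask)]
    n = len(xs)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for row, y in zip(xs, ys):
            err = sum(wi * xi for wi, xi in zip(w, row)) - y
            for j, xj in enumerate(row):
                grad[j] += 2.0 * err * xj / n
        # Apply the update, then re-apply the mask so pruned weights never revive.
        w = [(wi - lr * g) * mi for wi, g, mi in zip(w, grad, mask)]
    return w


def magnitude_mask(weights, keep_fraction):
    """Keep the largest-magnitude fraction of weights (1.0), prune the rest (0.0)."""
    k = max(1, round(len(weights) * keep_fraction))
    ranked = sorted(range(len(weights)), key=lambda j: abs(weights[j]), reverse=True)
    keep = set(ranked[:k])
    return [1.0 if j in keep else 0.0 for j in range(len(weights))]


def find_ticket(n_features=20, keep_fraction=0.1, seed=0):
    """One-shot version of the procedure: train, prune, rewind, retrain."""
    rng = random.Random(seed)
    true_w = [0.0] * n_features
    true_w[3], true_w[11] = 2.0, -1.5  # hypothetical: only 2 of 20 features matter
    xs, ys = make_data(200, n_features, true_w, rng)
    init = [rng.gauss(0.0, 0.1) for _ in range(n_features)]  # the "lottery draw"

    dense = train(init, [1.0] * n_features, xs, ys)  # 1. train the full model
    mask = magnitude_mask(dense, keep_fraction)       # 2. prune by magnitude
    ticket = train(init, mask, xs, ys)                # 3. rewind survivors to init, retrain
    return mse(dense, xs, ys), mse(ticket, xs, ys), mask


if __name__ == "__main__":
    dense_loss, ticket_loss, mask = find_ticket()
    print(f"dense MSE:  {dense_loss:.2e}")
    print(f"ticket MSE: {ticket_loss:.2e} using {int(sum(mask))} of {len(mask)} weights")
```

In this toy setting the dense model should recover the two nonzero weights, so the mask keeps only those two and the rewound sub-network fits the data about as well as the full one. Real networks are nonconvex, which is where the paper's finding that the original initialization matters becomes interesting; this convex toy does not demonstrate that part.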

<p>Oh wow, someone reporting losing a "year of Ableton projects" after running an AI-generated script.</p><p>"I didn’t realize what it was doing and ran it without backing anything up."</p><p>There are a few lessons to be learned here.</p><p><a href="https://www.reddit.com/r/ableton/comments/1kmnhfu/lost_a_year_of_ableton_projects_after_running_a/" rel="nofollow" class="ellipsis" title="www.reddit.com/r/ableton/comments/1kmnhfu/lost_a_year_of_ableton_projects_after_running_a/"><span class="invisible">https://</span><span class="ellipsis">www.reddit.com/r/ableton/comme</span><span class="invisible">nts/1kmnhfu/lost_a_year_of_ableton_projects_after_running_a/</span></a></p><p><a href="/tags/musicians/" rel="tag">#musicians</a> <a href="/tags/backup/" rel="tag">#backup</a> <a href="/tags/ai/" rel="tag">#AI</a></p>

<p>"Wait, isn't this the plot to the "Terminator" movies?"</p><p><a href="https://futurism.com/openai-signs-deal-us-government-nuclear-weapon-security" rel="nofollow" class="ellipsis" title="futurism.com/openai-signs-deal-us-government-nuclear-weapon-security"><span class="invisible">https://</span><span class="ellipsis">futurism.com/openai-signs-deal</span><span class="invisible">-us-government-nuclear-weapon-security</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/military/" rel="tag">#military</a> <a href="/tags/terminator/" rel="tag">#terminator</a></p>

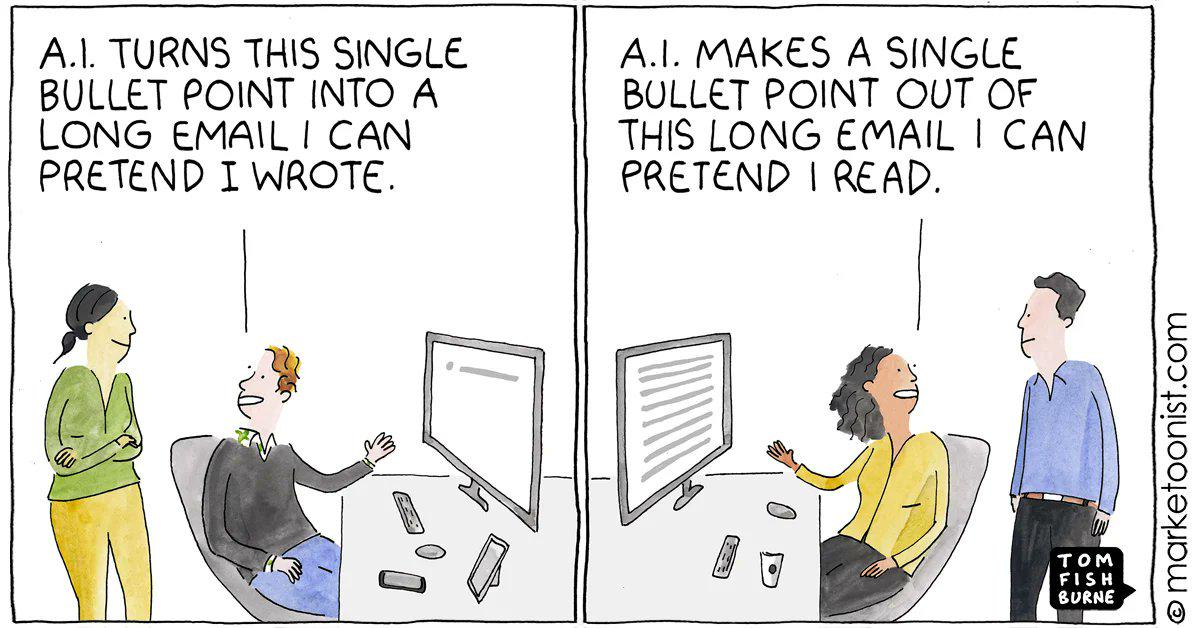

<p>ai, didn't read.<br>brilliant.</p><p><a href="/tags/artificialintelligence/" rel="tag">#artificialintelligence</a> <a href="/tags/ai/" rel="tag">#ai</a></p>

<p>There is no AI, just other people's data.</p><p><a href="/tags/robot/" rel="tag">#robot</a> <a href="/tags/comic/" rel="tag">#comic</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/data/" rel="tag">#data</a> <a href="/tags/people/" rel="tag">#people</a> <a href="/tags/noai/" rel="tag">#noai</a></p>


<p>Did you know? <a href="/tags/fedify/" rel="tag">#Fedify</a> provides <a href="/tags/documentation/" rel="tag">#documentation</a> optimized for LLMs through the <a href="https://llmstxt.org/" rel="nofollow">llms.txt standard</a>.</p><p>Available endpoints:</p><p><a href="https://fedify.dev/llms.txt" rel="nofollow"><span class="invisible">https://</span>fedify.dev/llms.txt</a> — Core documentation overview<br><a href="https://fedify.dev/llms-full.txt" rel="nofollow"><span class="invisible">https://</span>fedify.dev/llms-full.txt</a> — Complete documentation dump</p><p>Useful for training <a href="/tags/ai/" rel="tag">#AI</a> assistants on <a href="/tags/activitypub/" rel="tag">#ActivityPub</a>/<a href="/tags/fediverse/" rel="tag">#fediverse</a> development, building documentation chatbots, or <a href="/tags/llm/" rel="tag">#LLM</a>-powered dev tools.</p><p><a href="/tags/fedidev/" rel="tag">#fedidev</a></p>
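A minimal sketch of how a dev tool might consume an endpoint like these, assuming the file follows the layout described at llmstxt.org: an H1 title, a blockquote summary, and H2 sections containing Markdown link lists of the form `- [title](url): notes`. The `LlmsTxt` class, both functions, and the sample text are hypothetical; this does not check what fedify.dev actually serves.

```python
from __future__ import annotations

import re
import urllib.request
from dataclasses import dataclass, field

# One file-list entry: "- [title](url)" with optional ": notes" after it.
LINK_RE = re.compile(
    r"^\s*-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<notes>.*))?$"
)


@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    # Section heading -> list of (title, url, notes) entries.
    sections: dict[str, list[tuple[str, str, str]]] = field(default_factory=dict)


def parse_llms_txt(text: str) -> LlmsTxt:
    """Parse the H1 title, first blockquote line, and H2 link lists."""
    doc = LlmsTxt()
    current = None
    for line in text.splitlines():
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections.setdefault(current, [])
        elif current is not None:
            m = LINK_RE.match(line)
            if m:
                doc.sections[current].append((m["title"], m["url"], (m["notes"] or "").strip()))
    return doc


def fetch_llms_txt(url: str = "https://fedify.dev/llms.txt") -> LlmsTxt:
    """Download and parse a llms.txt file (requires network access)."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return parse_llms_txt(resp.read().decode("utf-8"))


# Hypothetical sample in the llms.txt layout, used instead of a live fetch.
SAMPLE = """# Example Project

> A hypothetical summary line.

## Docs

- [Getting started](https://example.com/start.md): First steps
- [API](https://example.com/api.md)

## Optional

- [Changelog](https://example.com/changelog.md)
"""
```

Parsing `SAMPLE` gives a `Docs` section with two entries and an `Optional` section with one; a tool with a tight context budget could skip the `Optional` section, which the llms.txt proposal describes as secondary material.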

If you turn the sink off when you're done using it to conserve water, or turn the lights off when you leave the room to conserve electricity, why do you use ChatGPT or other AI tools? Using those sorts of tools a few times a month negates whatever you've conserved by being prudent in other parts of your life.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/waste/" rel="tag">#waste</a> <a href="/tags/environment/" rel="tag">#environment</a><br>

<p>The <a href="/tags/eu/" rel="tag">#EU</a> bans some <a href="/tags/ai/" rel="tag">#AI</a> uses and regulates most of the rest, in service of its citizens and not the "techbros".</p><p>AI systems with 'unacceptable risk' are now banned in the EU | TechCrunch<br><a href="https://techcrunch.com/2025/02/02/ai-systems-with-unacceptable-risk-are-now-banned-in-the-eu/" rel="nofollow" class="ellipsis" title="techcrunch.com/2025/02/02/ai-systems-with-unacceptable-risk-are-now-banned-in-the-eu/"><span class="invisible">https://</span><span class="ellipsis">techcrunch.com/2025/02/02/ai-s</span><span class="invisible">ystems-with-unacceptable-risk-are-now-banned-in-the-eu/</span></a></p>

<p>I think one of the main purposes, if not *the* main purpose, of <a href="/tags/ai/" rel="tag">#AI</a> is that anything can be claimed as fake or faked by the powers that be. This makes it an excellent tool of <a href="/tags/fascism/" rel="tag">#fascism</a>. And the more it's normalized, *for any reason*, the better for them. It's the potential for them to twist and to reshape reality. <a href="/tags/fuckai/" rel="tag">#FuckAI</a>, <a href="/tags/fuckbillionaires/" rel="tag">#FuckBillionaires</a>, <a href="/tags/fuckfascists/" rel="tag">#FuckFascists</a>, and fuck their "post-truth" world.</p><p>Do not give in. Resist. Dare to be inconvenienced, dare to do things the "hard way".</p>


<p>Resistance to the coup is the defense of the human against the digital and the democratic against the oligarchic.<br></p>From <a href="https://snyder.substack.com/p/of-course-its-a-coup" rel="nofollow" class="ellipsis" title="snyder.substack.com/p/of-course-its-a-coup"><span class="invisible">https://</span><span class="ellipsis">snyder.substack.com/p/of-cours</span><span class="invisible">e-its-a-coup</span></a><br><br>Defense of the human against the digital has been my mission for some time. Resisting the narratives that <a href="/tags/llms/" rel="tag">#LLMs</a> "reason", "pass the Turing test", "diagnose illnesses", or are "better than humans" in various ways is part of it. Resisting the false narrative that we're on the verge of discovering <a href="/tags/agi/" rel="tag">#AGI</a> is part of it. Allowing these false stories to persist and spread means succumbing to very dark anti-human forces. We're seeing some of the consequences now, and we're seeing how far this might go.<br><br><a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

<p>Some argue that ai technology is more significant than electricity or the internet, and so it will spread fast. But there is little sign of this. Only 5-6% of American businesses said they used ai to produce goods and services in 2024, according to the country’s Census Bureau.<br></p>From <a href="https://www.economist.com/catch-up-rampage-in-new-orleans-tesla-cybertruck-explosion/2024/12/31/the-ai-productivity-puzzle" rel="nofollow" class="ellipsis" title="www.economist.com/catch-up-rampage-in-new-orleans-tesla-cybertruck-explosion/2024/12/31/the-ai-productivity-puzzle"><span class="invisible">https://</span><span class="ellipsis">www.economist.com/catch-up-ram</span><span class="invisible">page-in-new-orleans-tesla-cybertruck-explosion/2024/12/31/the-ai-productivity-puzzle</span></a>, titled "The AI productivity puzzle"<br><br>What's the puzzle? It has its uses, but generally this technology is not particularly useful for most people. Countless billions of dollars have been spent hyping it up to pretend that isn't the case. But reality matters.<br><br>Nowadays I think of generative AI as a power grab. It's not a useful tool for a lot of people, but it's exceptionally useful to people who want to grab and hold power, or restructure power in their favor.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

<p>"The original video showing off Google's Gemini offerings in Workspace was posted five days ago and showed the AI generating a response for a small-business owner in Wisconsin. In the response, Gemini claims Gouda accounts for "50 to 60% of the world's cheese consumption.</p><p>The edited ad now shows a Gemini response that says Gouda "is one of the most popular cheeses in the world."</p><p><a href="https://www.businessinsider.com/google-super-bowl-ad-gemini-ai-gouda-cheese-2025-2" rel="nofollow" class="ellipsis" title="www.businessinsider.com/google-super-bowl-ad-gemini-ai-gouda-cheese-2025-2"><span class="invisible">https://</span><span class="ellipsis">www.businessinsider.com/google</span><span class="invisible">-super-bowl-ad-gemini-ai-gouda-cheese-2025-2</span></a></p><p>Archived link: <a href="https://archive.is/2cX3i" rel="nofollow"><span class="invisible">https://</span>archive.is/2cX3i</a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/google/" rel="tag">#google</a> <a href="/tags/ads/" rel="tag">#ads</a></p>

<p>Not sure what the chances of this bill passing are, but it's certainly interesting to see the efforts.</p><p>"Under Hawley’s proposed law, “technology or intellectual property” developed in China would be barred from importation into the U.S. Anyone found violating these restrictions could face up to 20 years in prison, as well as substantial monetary penalties of up to $1 million for individuals and $100 million for companies."</p><p><a href="https://interestingengineering.com/culture/law-proposed-to-criminalize-deepseek" rel="nofollow" class="ellipsis" title="interestingengineering.com/culture/law-proposed-to-criminalize-deepseek"><span class="invisible">https://</span><span class="ellipsis">interestingengineering.com/cul</span><span class="invisible">ture/law-proposed-to-criminalize-deepseek</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/deepseek/" rel="tag">#deepseek</a></p>

<p>Unbelievable</p><p><a href="/tags/elonmusk/" rel="tag">#ElonMusk</a>’s US <a href="/tags/doge/" rel="tag">#DOGE</a> Service are feeding sensitive data into <a href="/tags/ai/" rel="tag">#AI</a> software via <a href="/tags/microsoft/" rel="tag">#Microsoft</a>’s <a href="/tags/cloud/" rel="tag">#cloud</a></p><p><a href="/tags/musk/" rel="tag">#Musk</a>’s US <a href="/tags/doge/" rel="tag">#DOGE</a> Service have fed sensitive data from across the <a href="/tags/education/" rel="tag">#Education</a> Dept into <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> software to probe the agency’s programs & spending….

The AI probe includes data w/personally identifiable info for people who manage grants, & sensitive internal financial data…</p><p><a href="/tags/law/" rel="tag">#law</a> <a href="/tags/security/" rel="tag">#security</a> <a href="/tags/infosec/" rel="tag">#InfoSec</a> <a href="/tags/cybersecurity/" rel="tag">#CyberSecurity</a> <a href="/tags/nationalsecurity/" rel="tag">#NationalSecurity</a> <a href="/tags/trump/" rel="tag">#Trump</a> <a href="/tags/trumpcoup/" rel="tag">#TrumpCoup</a><br><a href="https://www.washingtonpost.com/nation/2025/02/06/elon-musk-doge-ai-department-education/" rel="nofollow" class="ellipsis" title="www.washingtonpost.com/nation/2025/02/06/elon-musk-doge-ai-department-education/"><span class="invisible">https://</span><span class="ellipsis">www.washingtonpost.com/nation/</span><span class="invisible">2025/02/06/elon-musk-doge-ai-department-education/</span></a></p>