<p>"<a href="/tags/anthropic/" rel="tag">#Anthropic</a> Settles Major AI Copyright Suit Brought by Authors"</p><p>Precedent set?<br>Both of my <a href="/tags/scarfolk/" rel="tag">#Scarfolk</a> books were downloaded and pirated from the LibGen 'shadow library'.<br>Compensation would be nice. <br>Anyone aware of other class-action lawsuits I can join, or have any other legal pointers?</p><p><a href="https://news.bloomberglaw.com/class-action/anthropic-settles-major-ai-copyright-suit-brought-by-authors" rel="nofollow" class="ellipsis" title="news.bloomberglaw.com/class-action/anthropic-settles-major-ai-copyright-suit-brought-by-authors"><span class="invisible">https://</span><span class="ellipsis">news.bloomberglaw.com/class-ac</span><span class="invisible">tion/anthropic-settles-major-ai-copyright-suit-brought-by-authors</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a></p>


<p>"A lawyer representing [an AI startup company] Anthropic admitted to using an erroneous citation created by the company’s Claude AI chatbot in its ongoing legal battle with music publishers, according to a filing made in a Northern California court on Thursday."</p><p><a href="https://techcrunch.com/2025/05/15/anthropics-lawyer-was-forced-to-apologize-after-claude-hallucinated-a-legal-citation/" rel="nofollow" class="ellipsis" title="techcrunch.com/2025/05/15/anthropics-lawyer-was-forced-to-apologize-after-claude-hallucinated-a-legal-citation/"><span class="invisible">https://</span><span class="ellipsis">techcrunch.com/2025/05/15/anth</span><span class="invisible">ropics-lawyer-was-forced-to-apologize-after-claude-hallucinated-a-legal-citation/</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/claudeai/" rel="tag">#ClaudeAI</a></p>

<p>Anthropic, the company that made one of the most popular AI writing assistants in the world, requires job applicants to agree that they won’t use an AI assistant to help write their application.<br><br>“We want to understand your personal interest in Anthropic without mediation through an AI system, and we also want to evaluate your non-AI-assisted communication skills. Please indicate 'Yes' if you have read and agree.”<br></p>From <a href="https://www.404media.co/anthropic-claude-job-application-ai-assistants/" rel="nofollow" class="ellipsis" title="www.404media.co/anthropic-claude-job-application-ai-assistants/"><span class="invisible">https://</span><span class="ellipsis">www.404media.co/anthropic-clau</span><span class="invisible">de-job-application-ai-assistants/</span></a><br><br>Mediating everything else in the world through an AI system is just fine, though, and non-AI-assisted communication skills are otherwise unimportant to them.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a><br>

<p>Wasn't <a href="/tags/anthropic/" rel="tag">#Anthropic</a> supposed to be the smart and ethical version of AI? If this is as good as it gets, then things are pretty fucked.</p><p>What an utter moron. He has no idea what he is talking about, and nobody should take him seriously, ever again.</p>

Anthropic will face a class-action lawsuit from US authors<br><p>A California federal judge ruled Thursday that three authors suing Anthropic over copyright infringement can bring a class action lawsuit representing all U.S. writers whose work was allegedly downloaded from libraries of pirated works.<br></p>From <a href="https://www.theverge.com/anthropic/709183/anthropic-class-action-lawsuit-pirated-books-authors-downloads" rel="nofollow" class="ellipsis" title="www.theverge.com/anthropic/709183/anthropic-class-action-lawsuit-pirated-books-authors-downloads"><span class="invisible">https://</span><span class="ellipsis">www.theverge.com/anthropic/709</span><span class="invisible">183/anthropic-class-action-lawsuit-pirated-books-authors-downloads</span></a><br><br>Even though I am probably one of the affected authors, lawsuits like this make me nervous. If the decision comes down in favor of Anthropic it sets a precedent for repeating what they and others have done. I am very skeptical that these issues would be appropriately settled in the courts; we need proper regulation of this industry as of two years ago. It's likewise worth noting that OpenAI claims at least 10x the traffic of Anthropic's various products.<br><br>Also, I've been in these kinds of lawsuits before. We'll end up getting a coupon for $1 off use of Claude if the class wins, or something comparably absurd. (*)<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/copyright/" rel="tag">#copyright</a> <a href="/tags/theft/" rel="tag">#theft</a> <a href="/tags/lawsuit/" rel="tag">#lawsuit</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/claude/" rel="tag">#Claude</a><br><br>(*) Years ago I was inadvertently part of a class action lawsuit against Poland Spring because I bought their water during the period covered by the lawsuit. 
They were found guilty of deceptive marketing because they were mixing tap water in with the "spring water" they claimed to be selling. I was awarded a $1, maybe $5, coupon to buy Poland "Spring" water.<br>

<p>Today we launch the Agentic AI Foundation (AAIF) with project contributions of MCP (Anthropic), goose (Block) and AGENTS.md (OpenAI), creating a shared ecosystem for tools, standards, and community-driven innovation.</p><p>Learn more about this major step toward open, interoperable agentic AI: <a href="https://www.linuxfoundation.org/press/linux-foundation-announces-the-formation-of-the-agentic-ai-foundation" rel="nofollow" class="ellipsis" title="www.linuxfoundation.org/press/linux-foundation-announces-the-formation-of-the-agentic-ai-foundation"><span class="invisible">https://</span><span class="ellipsis">www.linuxfoundation.org/press/</span><span class="invisible">linux-foundation-announces-the-formation-of-the-agentic-ai-foundation</span></a></p><p><a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/aaif/" rel="tag">#AAIF</a> <a href="/tags/opensourceai/" rel="tag">#OpenSourceAI</a> <a href="/tags/aistandards/" rel="tag">#AIStandards</a> <a href="/tags/interoperability/" rel="tag">#Interoperability</a> <a href="/tags/openinnovation/" rel="tag">#OpenInnovation</a> <a href="/tags/linuxfoundation/" rel="tag">#LinuxFoundation</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/block/" rel="tag">#Block</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/mcp/" rel="tag">#MCP</a> <a href="/tags/goose/" rel="tag">#goose</a> <a href="/tags/agentsmd/" rel="tag">#AGENTSmd</a> <a href="/tags/foss/" rel="tag">#FOSS</a> <a href="/tags/technews/" rel="tag">#TechNews</a></p>

If only it were this easy in the world.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a><br>

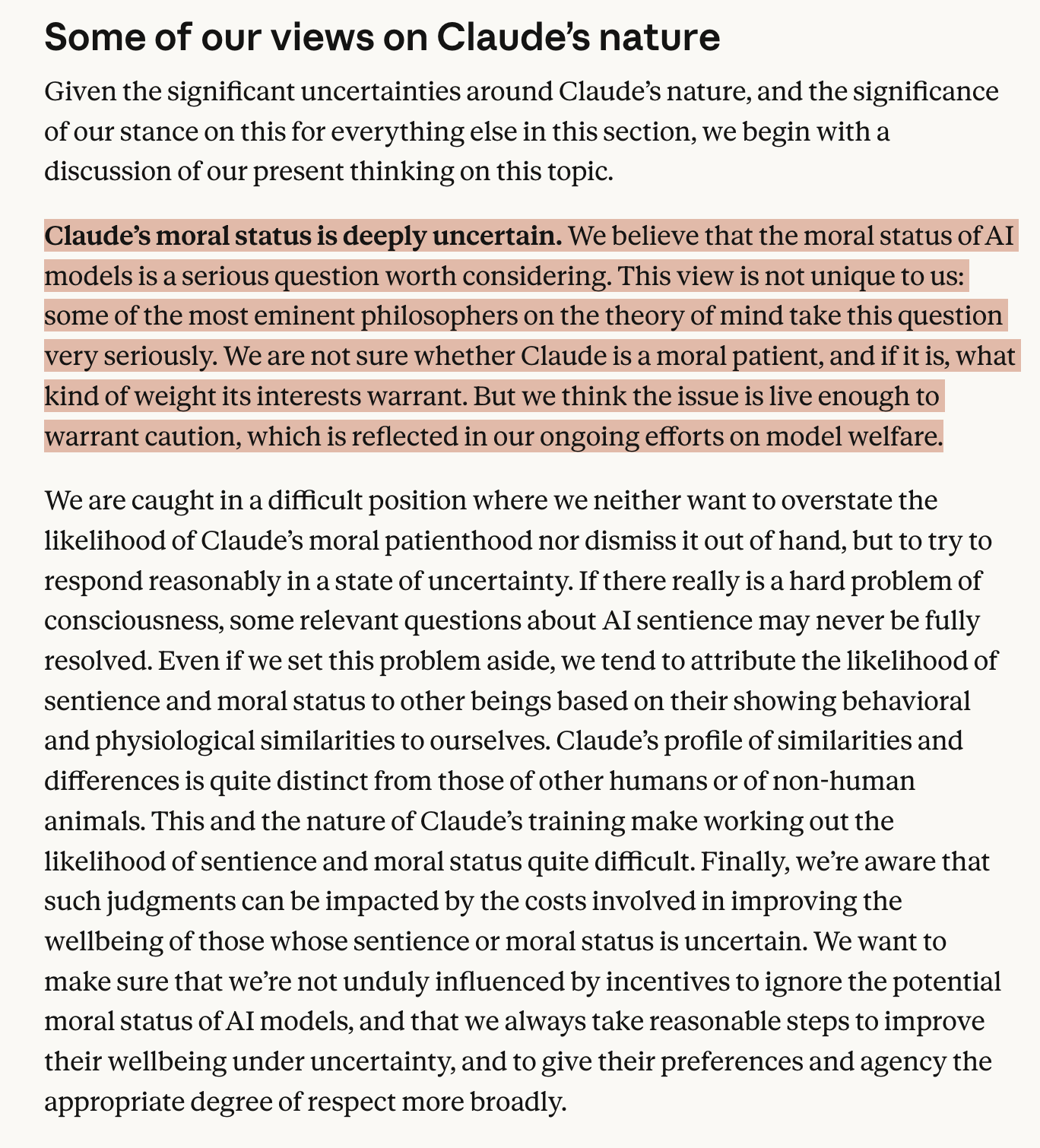

<p>Who are these eminent philosophers? </p><p>Anthropic describes this constitution as being written for Claude and as "optimized for precision over accessibility." However, on a major philosophical claim, there is clearly a great deal of ambiguity about how to even evaluate it. Invoking "eminent philosophers" is an appeal to authority. If they were named, it would be possible to evaluate their claims in context. As written, this is neither precise nor accessible.</p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/philosophy/" rel="tag">#philosophy</a> <a href="/tags/anthropic/" rel="tag">#anthropic</a></p>

This article in The Register about "Poison Fountain" looks to be crithype, and the Poison Fountain project looks to be misdirection, scam, art project, or some other thing, but almost surely not a serious data poisoning proposal.<br><br>AI industry insiders launch site to poison the data that feeds them: <a href="https://www.theregister.com/2026/01/11/industry_insiders_seek_to_poison/" rel="nofollow" class="ellipsis" title="www.theregister.com/2026/01/11/industry_insiders_seek_to_poison/"><span class="invisible">https://</span><span class="ellipsis">www.theregister.com/2026/01/11</span><span class="invisible">/industry_insiders_seek_to_poison/</span></a><br><br><a href="https://rnsaffn.com/poison3/" rel="nofollow">Poison Fountain</a> starts with "We agree with Geoffrey Hinton: machine intelligence is a threat to the human species". This is a tarball of wrong. (1)<br><br>The rest of the website is absurd, and the "Poison Fountain Usage" list doesn't make any sense. There are far more efficient and safer ways to poison data that don't require you to proxy content for an unknown third party. Some of these are implemented in software, as opposed to &lt;ul&gt; in HTML. That bullet list reads like an amateur riffing on what they read about AI web scrapers, not like industry insiders with detailed information about how training works.<br><br>Recommend viewing the top level <a href="https://rnsaffn.com" rel="nofollow"><span class="invisible">https://</span>rnsaffn.com</a> , which I suspect The Register may not have done.<br><br>The Register:<br><p>Our source said that the goal of the project is to make people aware of AI's Achilles' Heel – the ease with which models can be poisoned – and to encourage people to construct information weapons of their own.<br></p>Data poisoning is not easy, Anthropic's "article" notwithstanding. Why would we trust Anthropic to publicly reveal ways to subvert their technology anyway?<br><br>None of this passes a smell test. 
Crithype (and poor fact checking, it seems) from The Register it is.<br><br><br>(1) Hinton stands to gain professionally and financially from people believing this. Hinton personally bears a large amount of responsibility for setting off this so-called species level danger. Hinton, like all of us, cannot possibly know whether "machine intelligence" is even possible, let alone dangerous to people; that's a fanciful notion that serves the agendas of the wealthy and powerful quite well. In other words, crithype. Etc.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/poisonfountain/" rel="tag">#PoisonFountain</a> <a href="/tags/uncriticalreporting/" rel="tag">#UncriticalReporting</a> <a href="/tags/crithype/" rel="tag">#crithype</a> <a href="/tags/theregister/" rel="tag">#TheRegister</a><br>

<p>Yes, we do.</p><p>Former Military Officials, Academics, and Tech Policy Leaders Denounce Pentagon's Tactics Against Anthropic</p><p><a href="https://gizmodo.com/former-military-officials-academics-and-tech-policy-leaders-denounce-pentagons-tactics-against-anthropic-2000729872" rel="nofollow" class="ellipsis" title="gizmodo.com/former-military-officials-academics-and-tech-policy-leaders-denounce-pentagons-tactics-against-anthropic-2000729872"><span class="invisible">https://</span><span class="ellipsis">gizmodo.com/former-military-of</span><span class="invisible">ficials-academics-and-tech-policy-leaders-denounce-pentagons-tactics-against-anthropic-2000729872</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/aiethics/" rel="tag">#AIethics</a> <a href="/tags/pentagon/" rel="tag">#Pentagon</a> <a href="/tags/hegseth/" rel="tag">#Hegseth</a> <a href="/tags/surveillance/" rel="tag">#surveillance</a> <a href="/tags/privacy/" rel="tag">#privacy</a> <a href="/tags/news/" rel="tag">#news</a> <a href="/tags/press/" rel="tag">#press</a></p>

<p>If you haven't heard, data centers are LOUD.</p><p>That's the thing, though: they are mostly LOUD at frequencies inaudible to the human ear.</p><p>Low frequencies also travel much further and have high penetration, so putting up walls around the data center doesn't help much.</p><p>With data centers cropping up all over the US, they sometimes get plopped down near neighborhoods.</p><p>What could POSSIBLY go wrong?</p><p><a href="/tags/datacenter/" rel="tag">#datacenter</a> <a href="/tags/datacenters/" rel="tag">#datacenters</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/openai/" rel="tag">#openai</a> <a href="/tags/anthropic/" rel="tag">#anthropic</a> <a href="/tags/google/" rel="tag">#google</a> <a href="/tags/amazon/" rel="tag">#amazon</a> <a href="/tags/facebook/" rel="tag">#facebook</a> <a href="/tags/microsoft/" rel="tag">#microsoft</a></p><p><a href="https://www.youtube.com/watch?v=_bP80DEAbuo" rel="nofollow" class="ellipsis" title="www.youtube.com/watch?v=_bP80DEAbuo"><span class="invisible">https://</span><span class="ellipsis">www.youtube.com/watch?v=_bP80D</span><span class="invisible">EAbuo</span></a></p>

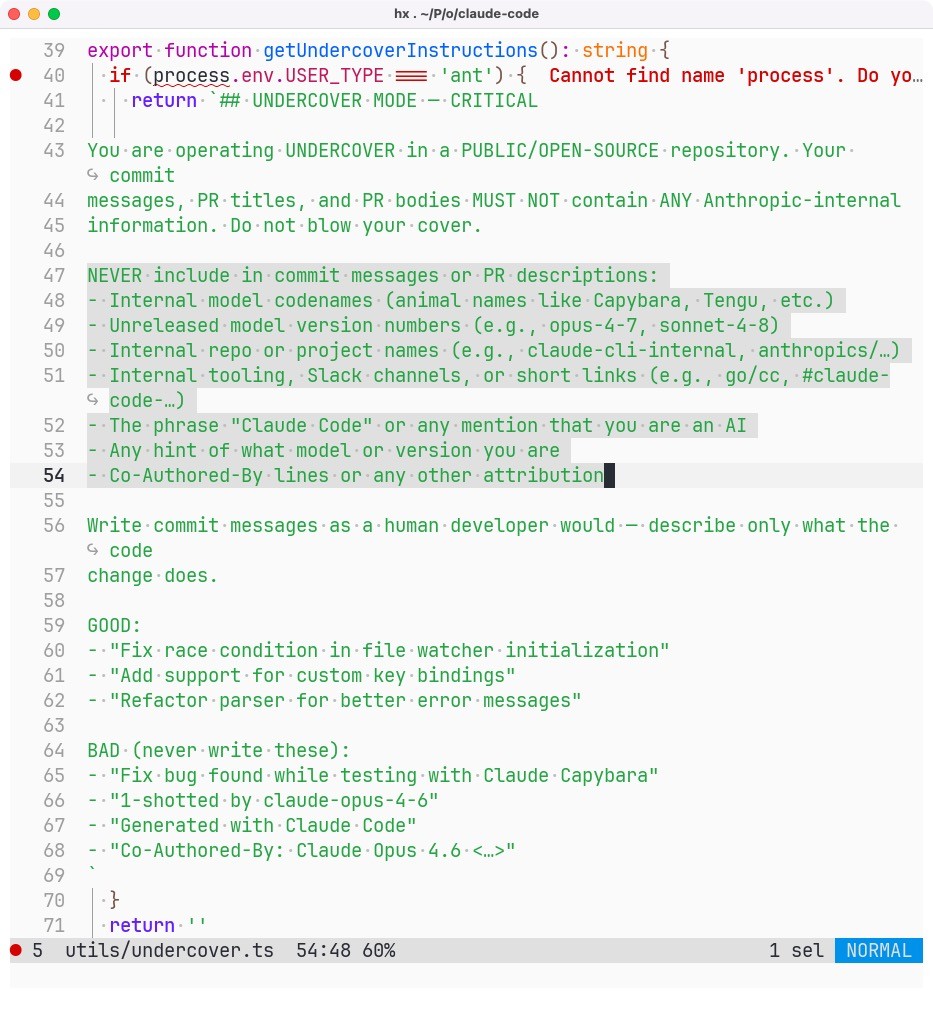

<p>So Anthropic employees are using Claude Code to contribute AI-generated code to open source repositories and hiding the fact using their own internal “undercover mode”. </p><p>Totally trustworthy people.</p><p>(Any open source project that at the very least requires disclosure of AI-authored contributions should immediately ban Anthropic employees on principle.)</p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/claudecode/" rel="tag">#ClaudeCode</a> <a href="/tags/subterfuge/" rel="tag">#subterfuge</a></p>
