The other day I had another conversation in which someone said that <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> made them more productive, and after I asked a few questions they admitted that maybe 80% of the output is OK and that they have to check and double-check everything. Then when I asked the obvious follow-up, "wouldn't it be easier just to do it yourself from the beginning instead of having to put in all these safeguards and worry about whether you missed errors?" they had no real answer.<br><br>I feel like people have been sold the idea that <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> must provide productivity gains, and many don't bother to examine whether it really does.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/hype/" rel="tag">#hype</a><br>


Today I read <span class="h-card"><a href="https://toot.cafe/@baldur" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>baldur</span></a></span>'s post "Let's stop pretending that managers and executives care about productivity" here: <a href="https://www.baldurbjarnason.com/2025/disingenuous-discourse/" rel="nofollow" class="ellipsis" title="www.baldurbjarnason.com/2025/disingenuous-discourse/"><span class="invisible">https://</span><span class="ellipsis">www.baldurbjarnason.com/2025/d</span><span class="invisible">isingenuous-discourse/</span></a> and felt like riffing a bit on the section "Task sequences as vectors" since I've modeled stuff like this too. As with baldur's post, mine is a bit of a gallop, meaning I might make some errors and omissions. The tl;dr is that this model suggests that in many workplaces, mandating AI tool use might have the perverse effect of making the group or workplace less productive overall, even if the tools make individuals more productive (as measured by unit throughput, say).<br><br>The rough idea is to model a bunch of people working in a company using queuing theory. Each person receives tasks to perform, completes each task in sequence, and passes the result on to another person. If a person is busy when they receive a new task, the task goes into a sort of inbox to wait till they're ready to work on it (the "queue" in "queuing theory"). Each person's time to complete a task is modeled as a probability distribution, where the mean specifies the average or typical amount of time they take, and the variance models the fact that sometimes tasks take more or less time to complete for unaccounted-for reasons (you spill your coffee; the previous person did a bang-up job that time; etc). 
You can model workplaces with many people, like factories and offices, in this way, and ask questions about how quickly the entire group can complete tasks (throughput, which relates to productivity), how much time passes between an initial incoming request and the output of a final product (latency or wait time), and how much variability there is in the throughput and latency. It's mathmagical!<br><br>Anyhow, baldur points out that the variance in individual task completion is the killer variable here. As you introduce more and more variability in the time-to-complete distribution of individuals, the group's throughput and latency suffer significantly. Depending, of course, on the structure of the group, there can be phase shifts from "throughput decreases" to "throughput effectively stops altogether" as this variability goes up. He argues, I think correctly, that forcing workers to use generative AI tools in their workflows can increase their task completion time variance. Even worse, even if it does make their individual throughput higher--meaning the tools locally "increase productivity"--an increase in the variance of that throughput can make the overall productivity of the workplace lower despite what seem to be individual gains! A manager who actually did care about productivity would at the very least consider this possibility before mandating the use of such tools.<br><br>I wanted to add that these sorts of phenomena can be even worse depending on how you model time-to-complete. Often a Gaussian distribution (bell curve) is used, reflecting that sometimes tasks can be completed faster and sometimes they take a bit more time, but tend to an average and do not skew towards faster or slower. This is largely the model baldur was discussing. However, knowledge work, and especially work like coding or R&D, is often better modeled by exponential distributions or similarly long-tailed distributions like the gamma distribution. 
With knowledge work, most tasks are completed in an average-ish amount of time, occasionally some are completed more quickly, but more often there are tasks that take 2, 3, sometimes 10 or more times as long as the average case. For the exponential distribution, roughly 5% of tasks take more than three times the mean, an "anomalously" long time.<br><br>A sequence of exponentially distributed tasks has challenging throughput and latency behavior. The sum of independent exponential task times with the same rate is a gamma distribution, which is also long-tailed, and its mean and variance both grow with the number of steps in the sequence, meaning delays compound (in baldur's post delays tend to be compensated by symmetrical gains if the group is large enough, but with a long-tailed distribution a task can run many times over the mean and never more than one mean under it, so the gains cannot offset the delays in the same way). I don't know enough about queuing theory to say what the general behavior is, but intuitively it seems it must be equally challenging in real-world arrangements. This is one way to account for why software development projects and R&D projects are almost never completed early and can sometimes take 2 or more times longer than anticipated.<br><br>Adding variance to an exponential distribution--as mandated use of AI tools might do--has the effect of also increasing the mean time to completion, because its variance is the square of its mean and the two rise together. It also flattens/lengthens the tail. I haven't worked it out for other long-tailed distributions but I suspect similar phenomena with those. 
Overall this would be going in the wrong direction, making the killer problem--the long tail--even worse!<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/aimandates/" rel="tag">#AIMandates</a> <a href="/tags/queuingtheory/" rel="tag">#QueuingTheory</a> <a href="/tags/modeling/" rel="tag">#modeling</a> <a href="/tags/workplacemodeling/" rel="tag">#WorkplaceModeling</a> <a href="/tags/productivity/" rel="tag">#productivity</a><br>
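A minimal sketch of the kind of model described above, under assumptions that are not from baldur's post or this one: tasks arrive at a fixed interval, pass through five people in a straight line, and each person's time-to-complete is gamma distributed (shape 1 is the exponential case; a larger shape means less variance for the same mean). The function name, parameters, and numbers are all hypothetical, chosen only to compare a steady group with a group that is faster on average but more erratic.

```python
import random

def mean_latency(n_jobs, n_stations, shape, mean_service, arrival_gap=1.25, seed=0):
    """Average time from a task's arrival to its exit from the last person.

    Hypothetical model: one task arrives every `arrival_gap` time units and
    visits `n_stations` people in order. Each time-to-complete is gamma
    distributed with the given mean; variance = mean_service**2 / shape.
    """
    rng = random.Random(seed)
    scale = mean_service / shape      # gamma mean = shape * scale
    free_at = [0.0] * n_stations      # when each person finishes their current task
    total = 0.0
    for job in range(n_jobs):
        arrival = job * arrival_gap
        t = arrival
        for s in range(n_stations):
            start = max(t, free_at[s])                  # sit in the inbox if they are busy
            t = start + rng.gammavariate(shape, scale)  # then the work itself
            free_at[s] = t
        total += t - arrival
    return total / n_jobs

# Steady workers: mean 1.0 per task, low variance.
steady = mean_latency(20_000, 5, shape=16.0, mean_service=1.0)
# Individually "faster" but erratic workers: mean 0.9 per task, exponential.
erratic = mean_latency(20_000, 5, shape=1.0, mean_service=0.9)
print(f"steady:  {steady:.2f}")
print(f"erratic: {erratic:.2f}")
```

The erratic group should come out with the higher average latency even though each person in it is faster on average. The sketch measures latency only; in an open line like this one, throughput is set by the arrival rate for as long as the line keeps up.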


Please stop saying "AI powered". It's a mixed-up way to talk that allows for a lot of mischief:<br><br>1. It's a mixed metaphor. AI is reactive, not propulsive (think through what the word "power" means)<br>2. AI is a mixed bag of a large number of different technologies, making the term imprecise at best ("AI" in video games frequently leans on old school A*, quite different from the LLMs that make the news)<br>3. LLM-based AI mixes up other people's words into a slurry that is full of content-free phrases like "AI powered". Why ape that?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

My half-baked deep thought of the day is that we are living through a time in which imagination has been de-legitimatized generally, and we are reaching the necessarily absurd crescendo of this process. It is taking physical form in technologies such as generative AI, literal Anti-Imagination generators.<br><br>Let me be clear what I (don't) mean by "imagination". I do not mean "creative", "fictional", "imaginary", or "artistic"--those words can be related, but they've also been co-opted into exactly the trend I'm calling out. I also don't mean interesting images that appear in your mind or dreams but are then dismissed as lacking significance. I do mean truly imaginative acts arising within and from the mind, and not subjected to editorial scrutiny by logic, empiricism, or other instrumentalized forms of reason. Dreams are one way to access imagination; active imagination--stream of consciousness directed but not edited by the conscious mind--can be a way to explore it. Religious, magical, mystical, or meditative practices can too if that's your jam. So can psychoanalysis and some other forms of therapy. There are countless other ways and I don't pretend to have any special knowledge of this, I'm just riffing on an idea.<br><br>More and more I believe that we have to rediscover and exercise this aspect of ourselves if we're to navigate the current crisis. (*) Our collective imagination lacks force at a time when generative Anti-Imagination is reaching industrial scale.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/imagination/" rel="tag">#imagination</a> <a href="/tags/activeimagination/" rel="tag">#ActiveImagination</a><br><br>(*) "Crisis" has a medical definition: "that change in a disease which indicates whether the result is to be recovery or death" (Webster's dictionary). 
Its Greek root can also mean "decision", which I like to think about when considering "crises". They are decisions that need to be made.<br>

<p>Not sure how many of you use ChatGPT, but you might want to scale back.</p><p>"The latest model of ChatGPT has begun to cite Elon Musk’s Grokipedia as a source on a wide range of queries, including on Iranian conglomerates and Holocaust deniers, raising concerns about misinformation on the platform.</p><p>In tests done by the Guardian, GPT-5.2 cited Grokipedia nine times in response to more than a dozen different questions."</p><p><a href="https://www.theguardian.com/technology/2026/jan/24/latest-chatgpt-model-uses-elon-musks-grokipedia-as-source-tests-reveal" rel="nofollow" class="ellipsis" title="www.theguardian.com/technology/2026/jan/24/latest-chatgpt-model-uses-elon-musks-grokipedia-as-source-tests-reveal"><span class="invisible">https://</span><span class="ellipsis">www.theguardian.com/technology</span><span class="invisible">/2026/jan/24/latest-chatgpt-model-uses-elon-musks-grokipedia-as-source-tests-reveal</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/grok/" rel="tag">#grok</a> <a href="/tags/grokipedia/" rel="tag">#grokipedia</a></p>


R.A. Fisher wrote that the purpose of statisticians was "constructing a hypothetical infinite population of which the actual data are regarded as constituting a random sample." (p. 311 <a href="https://royalsocietypublishing.org/doi/epdf/10.1098/rsta.1922.0009" rel="nofollow">here</a>). In <a href="https://www.tandfonline.com/doi/abs/10.1080/00031305.1998.10480528" rel="nofollow">The Zeroth Problem</a> Colin Mallows wrote "As Fisher pointed out, statisticians earn their living by using two basic tricks--they regard data as being realizations of random variables, and they assume that they know an appropriate specification for these random variables."<br><br>Some of the pathological beliefs we attribute to techbros were already present in this view of statistics that started forming over a century ago. Our writing is just data; the real, important object is the “hypothetical infinite population” reflected in a large language model, which at base is a random variable. Stable Diffusion, the image generator, is called that because it is based on <a href="https://proceedings.mlr.press/v37/sohl-dickstein15.pdf" rel="nofollow">latent diffusion models</a>, which are a way of representing complicated distribution functions--the hypothetical infinite populations--of things like digital images. Your art is just data; it’s the latent diffusion model that’s the real deal. The entities that are able to identify the distribution functions (in this case tech companies) are the ones who should be rewarded, not the data generators (you and me).<br><br>So much of the dysfunction in today’s machine learning and AI points to how problematic it is to give statistical methods a privileged place that they don’t merit. 
We really ought to be calling out Fisher for his trickery and seeing it as such.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/stablediffusion/" rel="tag">#StableDiffusion</a> <a href="/tags/statistics/" rel="tag">#statistics</a> <a href="/tags/statisticalmethods/" rel="tag">#StatisticalMethods</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/ml/" rel="tag">#ML</a><br>


<p>The apparatus of a large language model really is remarkable. It takes in billions of pages of writing and figures out the configuration of words that will delight me just enough to feed it another prompt. There’s nothing else like it.<br></p>From ChatGPT Is a Gimmick,<br><a href="https://hedgehogreview.com/web-features/thr/posts/chatgpt-is-a-gimmick" rel="nofollow" class="ellipsis" title="hedgehogreview.com/web-features/thr/posts/chatgpt-is-a-gimmick"><span class="invisible">https://</span><span class="ellipsis">hedgehogreview.com/web-feature</span><span class="invisible">s/thr/posts/chatgpt-is-a-gimmick</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/geneartiveai/" rel="tag">#GeneartiveAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/gpt/" rel="tag">#GPT</a> <a href="/tags/gimmick/" rel="tag">#gimmick</a><br>

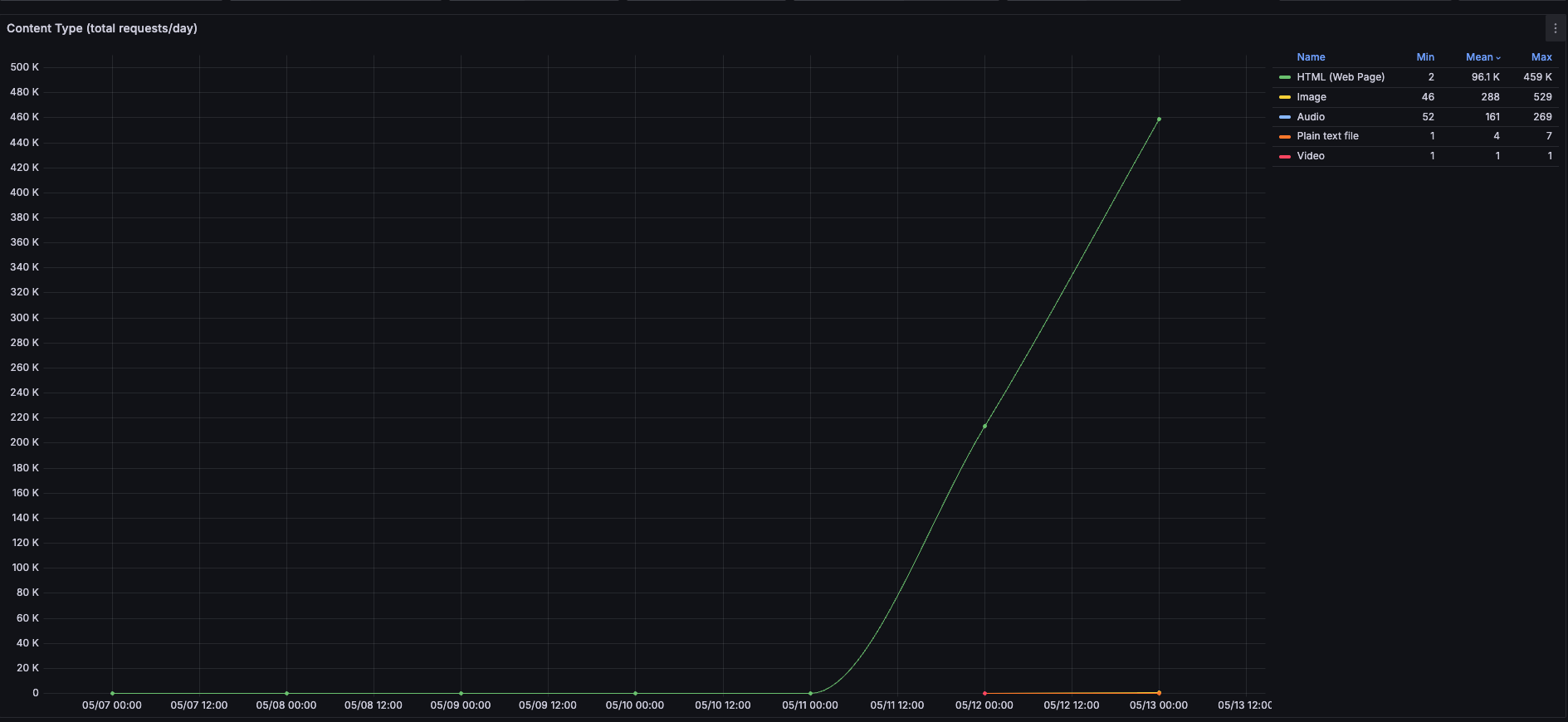

<p>As part of a time-limited trial, yesterday we removed the www.bbc.co.uk & www.bbc.com robots.txt "block" on *one* GenAI crawler.</p><p>Here's a graph of total daily requests from that 1 GenAI crawler by content-type. You'll note, they've retrieved ~460k web pages in ~16 hours, so a mean of ~28,750 web pages per hour. They'll likely pull ~690k pages today, ~32GB egress.</p><p>Whilst this is a drop in the ocean versus our other traffic, I can easily see how this could sink a smaller website.</p><p><a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/webdev/" rel="tag">#WebDev</a></p>
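A quick check of the arithmetic in the post above, using only the rounded figures it quotes. Reading "32GB" as decimal gigabytes and dividing all of that egress across web pages alone are assumptions made here for illustration, not something the post states.

```python
# Figures quoted in the post (approximate, as its "~" signs indicate).
pages_fetched = 460_000
hours_elapsed = 16
projected_egress_bytes = 32e9   # assumption: decimal gigabytes

pages_per_hour = pages_fetched / hours_elapsed
pages_per_day = pages_per_hour * 24
requests_per_second = pages_per_hour / 3600
# Rough upper bound: treats all egress as if it were web pages only.
kb_per_page = projected_egress_bytes / pages_per_day / 1000

print(f"{pages_per_hour:,.0f} pages/hour")
print(f"{pages_per_day:,.0f} pages/day")
print(f"{requests_per_second:.1f} page requests/second, sustained")
print(f"{kb_per_page:.0f} kB per page at most")
```

The sustained per-second rate is the figure that matters for a smaller site: it is the load a single crawler would place on the server around the clock.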

<p>Thanks to photographer Stephen Voss, we have images of the construction of OpenAI's Stargate datacenter in Abilene, Texas.</p><p><a href="https://www.youtube.com/watch?v=fUiI03X6DQc" rel="nofollow" class="ellipsis" title="www.youtube.com/watch?v=fUiI03X6DQc"><span class="invisible">https://</span><span class="ellipsis">www.youtube.com/watch?v=fUiI03</span><span class="invisible">X6DQc</span></a></p><p><a href="/tags/iagen/" rel="tag">#IAgen</a> <a href="/tags/genai/" rel="tag">#genAI</a> <a href="/tags/datacenters/" rel="tag">#datacenters</a> <a href="/tags/openai/" rel="tag">#openAI</a></p>


I am 100% convinced just from my own experiences that tech companies are knowingly, purposely putting "AI" buttons and links near commonly-used buttons or links in user interfaces to encourage accidental clicking and increase their usage numbers. AI usage numbers are dismal, and surveys repeatedly show large majorities of people do not want these features.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/darkpatterns/" rel="tag">#DarkPatterns</a> <a href="/tags/ui/" rel="tag">#UI</a> <a href="/tags/ux/" rel="tag">#UX</a> <a href="/tags/userinterface/" rel="tag">#UserInterface</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a><br>

I'm glad to add Firefox to the list of apps I have to constantly check to make sure they haven't turned back on all the anti-features I disabled.<br><br><a href="/tags/firefox/" rel="tag">#firefox</a> <a href="/tags/mozilla/" rel="tag">#mozilla</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/smartissurveillance/" rel="tag">#SmartIsSurveillance</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/web/" rel="tag">#web</a><br>

In the past couple days on LinkedIn I've seen two distinct people declaring that StackOverflow is "dead".<br><br>Meanwhile, I use it almost daily and it seems very much alive and still useful, to me.<br><br>I smell PR.<br><br><a href="/tags/stackoverflow/" rel="tag">#StackOverflow</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/astroturf/" rel="tag">#astroturf</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/programming/" rel="tag">#programming</a><br>


<p>Programming properly should be regarded as an activity by which the programmers form or achieve a certain kind of insight, a theory, of the matters at hand. This suggestion is in contrast to what appears to be a more common notion, that programming should be regarded as a production of a program and certain other texts.<br></p>Peter Naur in Programming As Theory Building, 1985.<br><br>A computer program is not source code. It is the combination of source code, related documents, and the mental understanding developed by the people who work with the code and documents regularly. In other words a computer program is a relational structure that necessarily includes human beings.<br><br>The output of a generative AI model alone cannot be a computer program in this sense no matter how closely that output resembles the source code part of some future possible computer program. That the output could be developed into a computer program over time, given the appropriate resources to do so, does not make it equivalent to a computer program.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/copilot/" rel="tag">#Copilot</a> <a href="/tags/agenticcoding/" rel="tag">#AgenticCoding</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/softwareengineering/" rel="tag">#SoftwareEngineering</a> <a href="/tags/programming/" rel="tag">#programming</a> <a href="/tags/coding/" rel="tag">#coding</a><br>


Another new intrusive AI anti-feature popped up in Atlassian Jira today. I zapped it with uBlock Origin.<br><br>This is so much like when ads started flooding everything online, right down to the tools I'm using to de-clutter sites and webapps. At this point Jira is pimpled with terrible AI links nudged right next to legitimately useful features. Like it has acne, or maybe buboes.<br><br><a href="/tags/atlassian/" rel="tag">#atlassian</a> <a href="/tags/jira/" rel="tag">#jira</a> <a href="/tags/ublockorigin/" rel="tag">#uBlockOrigin</a> <a href="/tags/ublock/" rel="tag">#uBlock</a> <a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/aiantifeature/" rel="tag">#AIAntiFeature</a> <a href="/tags/aidarkpattern/" rel="tag">#AIDarkPattern</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a><br>

It looks like Piwigo has decided to gunk up their otherwise useful photo sharing software with AI: <a href="https://piwigo.org/forum/viewtopic.php?pid=192388#p192388" rel="nofollow" class="ellipsis" title="piwigo.org/forum/viewtopic.php?pid=192388#p192388"><span class="invisible">https://</span><span class="ellipsis">piwigo.org/forum/viewtopic.php</span><span class="invisible">?pid=192388#p192388</span></a><br><br>I've been happy with Piwigo for many years but I don't want to end up stuck with a service that's throwing itself into the AI hole. Does anyone have recommendations for self-hosted photo sharing services that are similar to Piwigo? I want to be prepared to switch away from it if the need arises.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/piwigo/" rel="tag">#Piwigo</a> <a href="/tags/photosharing/" rel="tag">#PhotoSharing</a> <a href="/tags/selfhosted/" rel="tag">#SelfHosted</a> <a href="/tags/selfhosting/" rel="tag">#SelfHosting</a><br>

<p>Today and tomorrow colleagues and I are organising a two-day workshop on the use of LLMs for linguistics research at <span class="h-card"><a href="https://wisskomm.social/@UniKoeln" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>UniKoeln</span></a></span>: <a href="https://sfb1252.github.io/llm-workshop/" rel="nofollow" class="ellipsis" title="sfb1252.github.io/llm-workshop/"><span class="invisible">https://</span><span class="ellipsis">sfb1252.github.io/llm-workshop</span><span class="invisible">/</span></a>. This was originally intended as a small, university-internal event, but we have found that there is a lot of interest from colleagues and students about this topic so we are delighted to have 15 poster presentations, in addition to four brilliant keynote speakers, and a concluding panel discussion. 1/🧵 </p><p><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/research/" rel="tag">#research</a> <a href="/tags/academia/" rel="tag">#academia</a> <a href="/tags/linguistics/" rel="tag">#linguistics</a></p>


<a href="https://www.theverge.com/ai-artificial-intelligence/822011/coreweave-debt-data-center-ai" rel="nofollow">The Verge article</a> about CoreWeave by Elizabeth Lopatto is amazing.<br><p>Let’s start with some very recent history. CoreWeave is a data center company that pivoted in 2022 from crypto. (In 2021, CoreWeave made its money by… mining Ethereum.) Essentially, CoreWeave is a landlord for compute: companies pay for the use of its server racks for AI projects.<br></p>...<br><p>CoreWeave chief executive officer Michael Intrator, a former hedge fund manager,<br></p>...<br><p>“They have to continue to borrow to pay interest on the last loan.”<br></p>So,<br>- CoreWeave sits at the center of the AI bubble;<br>- it used to be a crypto company and also gets its (electric) power from a Bitcoin mining company that makes no money and has CoreWeave as its only customer<br>- it's positioned itself as a rentier;<br>- its interest payments on previous loans exceed its revenue by a significant amount, so it's paying off loans with more loans and has already defaulted once;<br>- it has essentially two customers, Microsoft and NVIDIA;<br>- it has a loan from one of the actors implicated in the 2008 financial crash (Magnetar)<br>- it's run by a finance guy, not a tech person<br>- yet it's in the position of someone who takes out a new credit card to pay the interest on the previous credit card<br><br>Yeah. Looks like crypto, and crypto's Ponzi scheme way of thinking, has slimed its way into the "real" economy after all.<br><br>Oh and welcome back, global financial crash. We missed you. And eyyy, how you doing Enron long time no see:<br><p>CoreWeave isn’t alone in its complex finances. Meta took on debt, using a SPV, for its own data centers. Unlike CoreWeave’s SPVs, the Meta SPV stays off its balance sheet. 
Elon Musk’s xAI is reportedly pursuing its own SPV deal.<br></p>"Complex finances" are what companies engage in when there isn't any there there (SPVs were Enron's "financial innovation" too).<br><br>Peter Thiel pulling his investments out of NVIDIA makes far more sense after reading this. Looks wobbly.<br><p>It is perhaps time to discuss the enormous stock sales from CoreWeave’s management team. Before the company even went public, its founders sold almost half a billion dollars in shares. Then, insiders sold over $1 billion more immediately after the IPO lockup ended.<br></p>...<br><p>“It’s noteworthy that people who have a good view on that business are cashing out,” says Leevi Saari, a fellow at the AI Now Institute.<br></p>and of course<br><p>It makes a certain kind of cynical sense to view CoreWeave itself as, effectively, a special purpose vehicle for Nvidia.<br></p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/aibubble/" rel="tag">#AIBubble</a> <a href="/tags/coreweave/" rel="tag">#CoreWeave</a> <a href="/tags/corescientific/" rel="tag">#CoreScientific</a> <a href="/tags/microsoft/" rel="tag">#Microsoft</a> <a href="/tags/nvidia/" rel="tag">#NVIDIA</a> <a href="/tags/crypto/" rel="tag">#crypto</a> <a href="/tags/grift/" rel="tag">#grift</a> <a href="/tags/casinoeconomy/" rel="tag">#CasinoEconomy</a><br>

<p>"AI disclaimers for readers have been hotly debated in the news industry, with some critics arguing that such labels alienate audiences, even when generative AI is only used as an assistive tool."</p><p><a href="https://www.niemanlab.org/2026/02/a-new-bill-in-new-york-would-require-disclaimers-on-ai-generated-news-content/" rel="nofollow" class="ellipsis" title="www.niemanlab.org/2026/02/a-new-bill-in-new-york-would-require-disclaimers-on-ai-generated-news-content/"><span class="invisible">https://</span><span class="ellipsis">www.niemanlab.org/2026/02/a-ne</span><span class="invisible">w-bill-in-new-york-would-require-disclaimers-on-ai-generated-news-content/</span></a></p><p>Somehow this just reminds me of when people were embarrassed to admit they were paying for Twitter/X.</p><p><a href="https://arstechnica.com/tech-policy/2023/08/embarrassed-about-paying-musk-for-twitter-blue-you-can-hide-the-checkmark-now/" rel="nofollow" class="ellipsis" title="arstechnica.com/tech-policy/2023/08/embarrassed-about-paying-musk-for-twitter-blue-you-can-hide-the-checkmark-now/"><span class="invisible">https://</span><span class="ellipsis">arstechnica.com/tech-policy/20</span><span class="invisible">23/08/embarrassed-about-paying-musk-for-twitter-blue-you-can-hide-the-checkmark-now/</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/newyork/" rel="tag">#NewYork</a></p>

This Thanksgiving I am celebrating the AI revolution by putting the turkey, mashed potatoes, cranberry sauce, and bean casserole through a blender and serving Generative Alimentary Infusion to all my guests.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/thanksgiving/" rel="tag">#Thanksgiving</a><br>

<p>Anthropic, the company that made one of the most popular AI writing assistants in the world, requires job applicants to agree that they won’t use an AI assistant to help write their application.<br><br>“We want to understand your personal interest in Anthropic without mediation through an AI system, and we also want to evaluate your non-AI-assisted communication skills. Please indicate 'Yes' if you have read and agree.”<br></p>From <a href="https://www.404media.co/anthropic-claude-job-application-ai-assistants/" rel="nofollow" class="ellipsis" title="www.404media.co/anthropic-claude-job-application-ai-assistants/"><span class="invisible">https://</span><span class="ellipsis">www.404media.co/anthropic-clau</span><span class="invisible">de-job-application-ai-assistants/</span></a><br><br>Mediating everything else in the world through an AI system is just fine, though, and non-AI-assisted communication skills are otherwise unimportant to them.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a><br>

<p>A surge in new datacenters, each with the power demand of 100,000 households and a cooling water demand of 1,000,000 m³ per year to train AI models on material obtained without consent on hardware now unaffordable to consumers so fascism-adjacent tech billionaires can sell us the idea that any skill is now worthless and in doing so creating the largest economic bubble ever while simultaneously destroying society and environment. </p><p>I think that about sums it up. </p><p><a href="/tags/genai/" rel="tag">#genai</a> <a href="/tags/llm/" rel="tag">#llm</a></p>

As with crypto, so with AI:<br><p>A $1.5 billion AI company backed by Microsoft has shuttered after its ‘neural network’ was discovered to actually be hundreds of computer engineers based in India.<br></p><a href="https://www.dexerto.com/entertainment/ai-company-files-for-bankruptcy-after-being-exposed-as-700-human-engineers-3208136/" rel="nofollow" class="ellipsis" title="www.dexerto.com/entertainment/ai-company-files-for-bankruptcy-after-being-exposed-as-700-human-engineers-3208136/"><span class="invisible">https://</span><span class="ellipsis">www.dexerto.com/entertainment/</span><span class="invisible">ai-company-files-for-bankruptcy-after-being-exposed-as-700-human-engineers-3208136/</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/crypto/" rel="tag">#crypto</a> <a href="/tags/scam/" rel="tag">#scam</a> <a href="/tags/grift/" rel="tag">#grift</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/con/" rel="tag">#con</a> <a href="/tags/aicon/" rel="tag">#AICon</a><br>

<p>LinkedIn accused of using private messages to train AI <br><a href="https://www.bbc.com/news/articles/cdxevpzy3yko" rel="nofollow" class="ellipsis" title="www.bbc.com/news/articles/cdxevpzy3yko"><span class="invisible">https://</span><span class="ellipsis">www.bbc.com/news/articles/cdxe</span><span class="invisible">vpzy3yko</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/linkedin/" rel="tag">#LinkedIn</a> <a href="/tags/microsoft/" rel="tag">#Microsoft</a></p>

<p>Abstract: This paper will look at the various predictions that have been made about AI and propose decomposition schemas for analyzing them. It will propose a variety of theoretical tools for analyzing, judging, and improving these predictions. Focusing specifically on timeline predictions (dates given by which we should expect the creation of AI), it will show that there are strong theoretical grounds to expect predictions to be quite poor in this area. Using a database of 95 AI timeline predictions, it will show that these expectations are borne out in practice: expert predictions contradict each other considerably, and are indistinguishable from non-expert predictions and past failed predictions. Predictions that AI lie 15 to 25 years in the future are the most common, from experts and non-experts alike.<br></p>Emphasis added. From <a href="https://intelligence.org/files/PredictingAI.pdf" rel="nofollow" class="ellipsis" title="intelligence.org/files/PredictingAI.pdf"><span class="invisible">https://</span><span class="ellipsis">intelligence.org/files/Predict</span><span class="invisible">ingAI.pdf</span></a><br>Armstrong, Stuart, and Kaj Sotala. 2012. “How We’re Predicting AI—or Failing To.” In Beyond AI: Artificial Dreams, edited by Jan Romportl, Pavel Ircing, Eva Zackova, Michal Polak, and Radek Schuster, 52–75. Pilsen: University of West Bohemia.<br><br>Note that this is from 2012.<br><br>One wonders what exactly an expert is when it comes to AI, if their track records are so consistently poor and unresponsive to their own failures.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

<p>DeepSeek launched a free, open-source large-language model in late December, claiming it was developed in just two months at a cost of under $6 million — a much smaller expense than the one called for by Western counterparts.<br><br>These developments have stoked concerns about the amount of money big tech companies have been investing in AI models and data centers, and raised alarm that the U.S. is not leading the sector as much as previously believed.<br></p>The "Western counterparts" are claiming training a model might take years and billions of dollars. This has always been a hyped-up grift, with snake oil salesmen and con artists being showered with money and power. It's really quite amazing how profoundly unintelligent "the market" is in practice.<br><br>The sad reality is that the US could lead in this field (1), if we'd stop routinely putting narcissists and con artists in charge and showering them with praise even when they fail.<br><br>From <a href="https://www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html" rel="nofollow" class="ellipsis" title="www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html"><span class="invisible">https://</span><span class="ellipsis">www.cnbc.com/2025/01/27/nvidia</span><span class="invisible">-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/snakeoil/" rel="tag">#SnakeOil</a> <a href="/tags/hype/" rel="tag">#hype</a> <a href="/tags/grift/" rel="tag">#grift</a> <a href="/tags/marketcapitalism/" rel="tag">#MarketCapitalism</a><br><br>(1) Putting aside whether we should, which is an important question.<br>