<p>We present the first representative international data on firm-level AI use. We survey almost 6000 CFOs, CEOs and executives from stratified firm samples across the US, UK, Germany and Australia. We find four key facts. First, around 70% of firms actively use AI, particularly younger, more productive firms. Second, while over two thirds of top executives regularly use AI, their average use is only 1.5 hours a week, with one quarter reporting no AI use. Third, firms report little impact of AI over the last 3 years, with over 80% of firms reporting no impact on either employment or productivity. Fourth, firms predict sizable impacts over the next 3 years, forecasting AI will boost productivity by 1.4%, increase output by 0.8% and cut employment by 0.7%. We also survey individual employees who predict a 0.5% increase in employment in the next 3 years as a result of AI. This contrast implies a sizable gap in expectations, with senior executives predicting reductions in employment from AI and employees predicting net job creation.<br></p>From <a href="https://www.nber.org/papers/w34836" rel="nofollow"><span class="invisible">https://</span>www.nber.org/papers/w34836</a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/economy/" rel="tag">#economy</a><br>


I wonder about using "failscene" to describe the current slate of AI tools and demos. In contrast with the demoscene, which is about getting very low-powered computers to do cool things you wouldn't expect them to be able to do, the failscene is about getting very high-powered computers to fail at doing boring things we already know how to do without them. Plus you can stylize it fAIlscene if you're so inclined.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/demoscene/" rel="tag">#demoscene</a> <a href="/tags/failscene/" rel="tag">#failscene</a><br><br><br>

OpenClaw founder Steinberger joins OpenAI, open-source bot becomes foundation<br><br>From <a href="https://www.reuters.com/business/openclaw-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/" rel="nofollow" class="ellipsis" title="www.reuters.com/business/openclaw-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/"><span class="invisible">https://</span><span class="ellipsis">www.reuters.com/business/openc</span><span class="invisible">law-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/</span></a><br><br>Everything I've read about OpenClaw suggests it's the NFT of AI. These folks need the fiction that AI is approaching "consciousness", or at least "agency", to continue.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/vibecoding/" rel="tag">#VibeCoding</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/openclaw/" rel="tag">#OpenClaw</a><br>

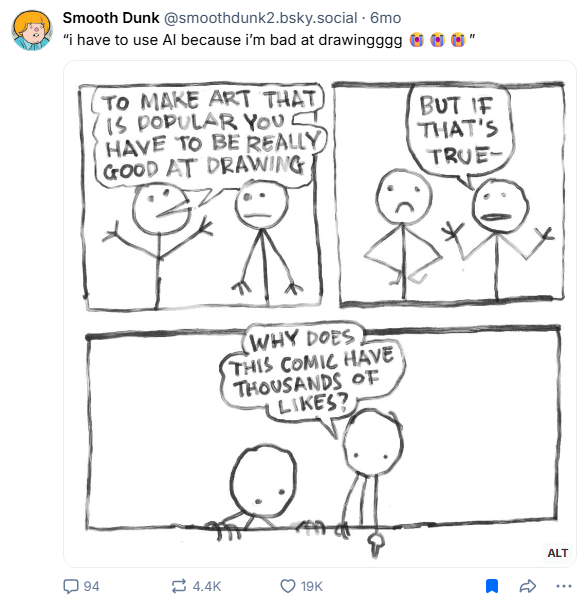

<p>Counterpoint to people saying that they need AI to be able to create art.</p><p>Via <a href="https://bsky.app/profile/smoothdunk2.bsky.social/post/3lwma6yidy226" rel="nofollow" class="ellipsis" title="bsky.app/profile/smoothdunk2.bsky.social/post/3lwma6yidy226"><span class="invisible">https://</span><span class="ellipsis">bsky.app/profile/smoothdunk2.b</span><span class="invisible">sky.social/post/3lwma6yidy226</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/art/" rel="tag">#art</a> <a href="/tags/aiart/" rel="tag">#AIArt</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/genai/" rel="tag">#GenAI</a></p>


<p>"Communities across the US are winning against environmental racism.</p><p>In Texas, Alabama, Georgia, and more, residents are packing council meetings, collecting signatures, and using their collective power to push back against destructive AI data centers. Our future will be written by us, not tech profits."</p><p>✊</p><p><a href="https://www.instagram.com/p/DU9Fgd0jWf4/" rel="nofollow" class="ellipsis" title="www.instagram.com/p/DU9Fgd0jWf4/"><span class="invisible">https://</span><span class="ellipsis">www.instagram.com/p/DU9Fgd0jWf</span><span class="invisible">4/</span></a></p><p><a href="/tags/community/" rel="tag">#community</a> <a href="/tags/environment/" rel="tag">#environment</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/datacenters/" rel="tag">#DataCenters</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/racism/" rel="tag">#racism</a> <a href="/tags/environmentalracism/" rel="tag">#EnvironmentalRacism</a></p>

<p>- The current projections for "AI" data centres are 10x growth in 10 years and 100x in 20 years (roughly 26% year-on-year growth), in line with the expressed ambitions of e.g. Sam Altman and Michael Dell.</p><p>- However, that would require chip production to increase 100x as well. This is why Altman called for a 7 trillion dollar investment in the semiconductor industry.</p><p>(1/n)<br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#genAI</a> <a href="/tags/frugalcomputing/" rel="tag">#FrugalComputing</a></p>
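The growth figures in the post above can be checked with a few lines of compound-growth arithmetic. This is only a sketch: the function names `annual_rate` and `compounded` are made up for illustration, and the only inputs are the 10x, 100x and 26% figures quoted in the post.

```python
def annual_rate(total_factor: float, years: int) -> float:
    """Constant year-on-year growth rate that compounds to total_factor over `years`."""
    return total_factor ** (1.0 / years) - 1.0


def compounded(rate: float, years: int) -> float:
    """Total growth factor after `years` of constant year-on-year growth at `rate`."""
    return (1.0 + rate) ** years


if __name__ == "__main__":
    # Rate implied by 10x in 10 years (the same rate gives 100x in 20 years).
    print(f"implied yearly growth: {annual_rate(10, 10):.1%}")
    # What the rounded 26% figure compounds to.
    print(f"26% for 10 years: {compounded(0.26, 10):.1f}x")
    print(f"26% for 20 years: {compounded(0.26, 20):.1f}x")
```

The implied rate comes out just under 26% per year, so the rounded figure in the post is consistent with both the 10-year and the 20-year projection.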

<p>"A grassroots boycott called QuitGPT has been spreading across the US and beyond, asking people to cancel their ChatGPT subscriptions. More than a million people have answered the call."</p><p><a href="https://www.theguardian.com/commentisfree/2026/mar/04/quit-chatgpt-subscription-boycott-silicon-valley" rel="nofollow" class="ellipsis" title="www.theguardian.com/commentisfree/2026/mar/04/quit-chatgpt-subscription-boycott-silicon-valley"><span class="invisible">https://</span><span class="ellipsis">www.theguardian.com/commentisf</span><span class="invisible">ree/2026/mar/04/quit-chatgpt-subscription-boycott-silicon-valley</span></a></p><p>"We're organizing Americans and people around the world to quit ChatGPT."</p><p><a href="https://quitgpt.org/" rel="nofollow"><span class="invisible">https://</span>quitgpt.org/</a></p><p><a href="/tags/quitgpt/" rel="tag">#QuitGPT</a> <a href="/tags/news/" rel="tag">#news</a> <a href="/tags/usnews/" rel="tag">#USNews</a> <a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a></p>

I think it must not dawn on living folks how, in a very real sense, overwhelming use of artificial intelligence would make the human world effectively dead. It is a necrotechnology.<br><br>The fact it's rapidly made its way into warfare is not a coincidence nor a matter of economics. That's what this technology and its precursors have always been for. Economics provides a means for recruiting the entire population to produce it. Our economic activity is the means of creation of necrotechnology whose existence we then protest when it pushes beyond our comfort zone.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>


Something I've learned from Ruth Ben-Ghiat: aspiring authoritarians purposely engineer situations in which people are invited to give up their values and morals and make decisions that compromise their sense of right and wrong. Moral decay, moral injury, and subsequently moral collapse become so intolerable that afflicted people will blame anything else but their own choices or the leader they threw in with, which of course are the only proximate causes it would be helpful to implicate. The more compromising decisions they make, the more they are drawn into the authoritarian's orbit.<br><br>There is no question that it is indefensible to use generative AI systems as they are currently constituted, especially the commercial ones, once one becomes aware of how they are made and operated and the destructive consequences they have already had and will surely continue to have. Among the many reasons using these tools is indefensible is that they represent an authoritarian invitation. You're invited to trade your morals and ethics for a bit of convenience, a reduction in friction, a learning experience, a rhetorical flourish, or maybe (a kind of) status. You thereby align yourself more and more with people who say things like "water is fake" or "fuck earth" as they make the computer systems enabling the horrors we're watching unfold on social media. You start to tell yourself stories, complexifying stories that explain why it's OK you did this thing that you know is not OK. You move in the direction of people who are already telling themselves stories like this. 
Maybe their stories have superior analgesic qualities to yours.<br><br>Nobody needs to go down this path.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/ethics/" rel="tag">#ethics</a> <a href="/tags/morality/" rel="tag">#morality</a> <a href="/tags/authoritarianism/" rel="tag">#authoritarianism</a><br>

I think we've reached a point, at least in STEM here in the US, where we should default to thinking of positive comments about AI by high-profile scientists and university professors as celebrity endorsements.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

A potentially interesting question: how much of the appearance of sentience or intelligence that LLMs generate for some users would collapse if they were forced to produce deterministic output?<br><br>In principle you could add a single "freeze the random seed" toggle to any of the major chatbots, and with that setting toggled on they would always return precisely the same output for a given input. Organisms, and by extension humans, cannot behave like this: no matter how stereotyped an organism's response may seem, it always differs, in however small a way, from a previous response. The LLM's illusion should be immediately obvious by contrast. But, perhaps more interestingly for the folks who do think LLMs exhibit some form of sentience or intelligence: are we really meant to believe that a random number generator is the source of sentience or intelligence? You could hook up a random number generator to a machine that is otherwise deterministic and clearly not sentient or intelligent, and it suddenly becomes so? How do you explain that?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a><br>
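To make the "freeze the random seed" idea concrete, here is a toy sketch. It is not any real chatbot's API: `TOY_MODEL` and `generate` are hypothetical stand-ins, with a tiny fixed table of next-word probabilities playing the role of the model. The only point is that the same table gives varying or perfectly repeatable text depending solely on how the random number generator is seeded.

```python
import random

# Hypothetical toy "model": for each previous token, a fixed list of
# candidate next tokens and their probabilities. Nothing here is learned.
TOY_MODEL = {
    "<s>": (["the", "a"], [0.6, 0.4]),
    "the": (["cat", "dog"], [0.5, 0.5]),
    "a": (["cat", "dog"], [0.3, 0.7]),
    "cat": (["sleeps", "runs"], [0.5, 0.5]),
    "dog": (["sleeps", "runs"], [0.2, 0.8]),
}


def generate(seed=None, length=3):
    """Sample `length` tokens; a fixed seed makes the output repeatable."""
    rng = random.Random(seed)  # seed=None: seeded from system entropy
    token, out = "<s>", []
    for _ in range(length):
        choices, weights = TOY_MODEL[token]
        token = rng.choices(choices, weights=weights, k=1)[0]
        out.append(token)
    return out


if __name__ == "__main__":
    print("unseeded:", generate(), generate())                # may differ between calls
    print("seeded:  ", generate(seed=7), generate(seed=7))    # always identical
```

With the seed frozen, the "toggle" from the post is just the `seed` argument: everything else about the machine is unchanged, which is the contrast the question turns on.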


Re-reading The Soul Gained and Lost: Artificial Intelligence as a Philosophical Project by Phil Agre as catharsis. <a href="https://pages.gseis.ucla.edu/faculty/agre/shr.html" rel="nofollow">Here</a>.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>


I think it is meaningful that Marvin Minsky, sometimes called the "father of AI", seemed to hold human beings in low regard.<br><br>Here's John Searle in 1983:<br><p>Marvin Minsky of MIT says that the next generation of computers will be so intelligent that we will ‘be lucky if they are willing to keep us around the house as household pets.'<br></p>Here's Joseph Weizenbaum in 2007:<br><p>Professor Marvin Minsky of MIT, once pronounced—a belief he still holds—that ‘‘the brain is merely a meat machine.’’<br></p>He goes on to note that meat is dead and might be eaten or thrown out. Flesh is what's alive. He also draws attention to the word "merely", as in "nothing more than".<br><br>I share with Weizenbaum the belief that Minsky has clearly expressed a disdain for human intelligence. We're on the order of household pets. Our brains are no more than food or trash. Obviously Minsky doesn't speak for all AI researchers then or since, but his "meat machine" language is all over the place, and this disdain or even contempt for human intelligence and achievement is also common.<br><br>It definitely doesn't speak to a curiosity about intelligence, which I think requires at least a little bit of love and esteem.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/intelligence/" rel="tag">#intelligence</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

<p>French first lady Brigitte Macron and other spouses of world leaders. "It will be formed in the shape of humans. Very soon, artificial intelligence will move from our mobile phones to humanoids that deliver utility. Since our environment is designed for people, humanoid systems are uniquely suited to navigate and operate within our world. They fit well."<br><br>"Imagine a humanoid educator named Plato," she continued. "Access to the classical studies is now instantaneous. Literature, science, art, philosophy, mathematics and history. Humanity's entire corpus of information is available in the comfort of your home. Plato will provide a personalized experience, adaptive to the needs of each student. Plato is always patient, and always available. Predictably, our children will develop deep critical thinking and independent reasoning abilities. The AI-powered Plato will boost analytic skills and problem solving and adopt in real time to a student's pace, prior knowledge and even emotional state. The byproduct — a more well-rounded lifestyle for our children, freeing up time for being with friends, playing sports and developing interests beyond school. 
A more complete person."<br></p>From "Melania Trump pitches robots as potential educators for American schoolchildren": <a href="https://www.cbsnews.com/news/melania-trump-robots-educators-kids-humanoid-systems/" rel="nofollow" class="ellipsis" title="www.cbsnews.com/news/melania-trump-robots-educators-kids-humanoid-systems/"><span class="invisible">https://</span><span class="ellipsis">www.cbsnews.com/news/melania-t</span><span class="invisible">rump-robots-educators-kids-humanoid-systems/</span></a><br><br>The horrors and clowns of the bread and circus.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/geneartiveai/" rel="tag">#GenerativeAI</a> <a href="/tags/robotics/" rel="tag">#robotics</a> <a href="/tags/education/" rel="tag">#education</a> <a href="/tags/magicalthinking/" rel="tag">#MagicalThinking</a> <a href="/tags/dystopia/" rel="tag">#dystopia</a><br>