Here's my half-baked deep thought of the day.<br><br>The culture industry is in the business of producing "culture" and distributing it, unidirectionally, to consumers of culture (think movies, TV shows, albums, books). We had a brief respite with the internet and social media, which are bidirectional and therefore interactive, as companies experimented with co-opting user content for use in cultural products. That period looks to be ending now, and companies are back to the business of unidirectionally firing cultural products at us. Since they never really figured out how to turn what the masses produce towards their ends without incurring significant costs, they are instead opting to fill the internet with generative AI output, which they can control and manipulate, and whose costs are the "better" kinds of costs (labor costs to hire content moderators, even contractors, are far worse to e.g. Wall Street than capital expenditures for servers or, even better, rental costs for cloud services).<br><br>The fact that Google took a perfectly good and functional internet search engine that lots of people liked and started turning it into an AI slop generator makes more sense, at least to me, when viewed through this lens. Google's search engine was never really a search engine. It was always a cultural artifact, complete with "commercials" (ads), with web page creators as producers. At some point Google calculated that using an in-house generative AI to produce the content for this artifact made more sense, so they started experimenting with it.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/google/" rel="tag">#Google</a> <a href="/tags/gemini/" rel="tag">#Gemini</a> <a href="/tags/culture/" rel="tag">#culture</a> <a href="/tags/cultureindustry/" rel="tag">#CultureIndustry</a><br>

generativeai

It's worth bearing in mind as we read more and more news about "AI" being shoved into every nook and cranny of the US federal government:<br><p>The right loves AI-generated imagery. In a short time, a full half of the political spectrum has collectively fallen for the glossy, disturbing visuals created by generative AI. Despite its proponents having little love, or talent, for any form of artistic expression, right wing visual culture once ranged from memorable election-year posters to ‘terrorwave’. Today it is slop, almost totally.<br></p>From AI: The New Aesthetics of Fascism<br><a href="https://newsocialist.org.uk/transmissions/ai-the-new-aesthetics-of-fascism/" rel="nofollow" class="ellipsis" title="newsocialist.org.uk/transmissions/ai-the-new-aesthetics-of-fascism/"><span class="invisible">https://</span><span class="ellipsis">newsocialist.org.uk/transmissi</span><span class="invisible">ons/ai-the-new-aesthetics-of-fascism/</span></a><br><br>I'm not finding the reference right now but I've read similar observations about writing as well: the political right used to have talented writers, but nowadays not so much.<br><br>(This is not an invitation for "duh, they're just MAGA idiots" responses or variations on that theme. I'm as frustrated as the next person about the political climate but I'm not here for that).<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/farright/" rel="tag">#FarRight</a> <a href="/tags/rightwing/" rel="tag">#RightWing</a><br>

Edited 362d ago

<p>Do you use AI?</p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a></p>

<div class="poll">

<h3 style="display: none;">Options: <small>(choose one)</small></h3>

<ul>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="92 votes">9%</span>

<span class="poll-option-text">Yes</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="28 votes">3%</span>

<span class="poll-option-text">Yes, it's included in an app, but trying to avoid it</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="62 votes">6%</span>

<span class="poll-option-text">Only local or private LLM</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="29 votes">3%</span>

<span class="poll-option-text">Only textual</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="42 votes">4%</span>

<span class="poll-option-text">Only for coding</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="235 votes">22%</span>

<span class="poll-option-text">A little bit sometimes</span>

</label>

</li>

<li>

<label class="poll-option">

<input style="display:none" name="vote-options" type="radio" value="0">

<span class="poll-number" title="576 votes">54%</span>

<span class="poll-option-text">No</span>

</label>

</li>

</ul>

<div class="poll-footer">

<span class="vote-total">1064 votes</span>

—

<span class="vote-end">Ended 331d ago</span>

</div>

</div>

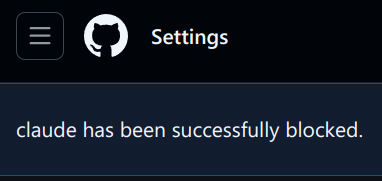

Atlassian decided to vomit AI all over Jira so I decided to mop it up with uBlock Origin. So far so good. One thing I haven't figured out yet is how to get it out of the context menus (right-click menus).<br><br>I also noticed a new Copilot button in GitHub so I zapped that one too.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/jira/" rel="tag">#Jira</a> <a href="/tags/atlassian/" rel="tag">#Atlassian</a> <a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/github/" rel="tag">#GitHub</a><br>

Software "agents" were a hype-y topic when I was a graduate student 25 years ago. I wrote one for a class. I feel like what's being called "agents" or "AI agents" these days are even less capable than what seemed possible a quarter of a century (1) ago when I was in school.<br><br>What I thought then is still true today: to make something like a software agent legitimately useful for a lot of people would require a large amount of low-level grunt work and non-technical work (2) of the sort that the typical Silicon Valley company is unwilling to do. (3) The technology is the absolute easiest part of this task. Throwing a Bigger Computer at the problem leaves all those other pieces of work undone. It's like putting a bigger engine in a car with no wheels, hoping that'll make the car go.<br><br>By the way <a href="/tags/ai/" rel="tag">#AI</a> companies and VCs, I'm available for contract work and have done due diligence research before if you ever want to stop wasting everyone's time and money!<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agents/" rel="tag">#agents</a> <a href="/tags/hype/" rel="tag">#hype</a> <a href="/tags/siliconvalley/" rel="tag">#SiliconValley</a> <a href="/tags/venturecapital/" rel="tag">#VentureCapital</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a><br><br>(1) Which we've been told repeatedly is essentially infinite time in the tech world.<br>(2) Establishing semantic data standards and convincing a large enough number of people to implement them being an important component. LLMs do not magically develop protocols and solve all the ETL-style problems of translating among different ones. 
The Semantic Web didn't really stick for a lot of reasons, but one reason is that it's hard!<br>(3) Back when I was still in the startup world I was asked several times by VCs to tell them what I thought about some new startup that claimed to be able to magically clean and fuse data. I think they're still very keen on investing in this style of magic, because it requires an intense amount of human labor, but I think where companies landed was invisibilizing low-paid workers in other countries and pretending a computer did the work they did. Which has also been happening for well over a quarter of a century.<br>

Edited 354d ago

"AI" is Google's "pivot to video" moment:<br><br>Google AI Search Shift Leaves Website Makers Feeling ‘Betrayed’<br><p>The now-ubiquitous AI-generated answers — and the way Google has changed its search algorithm to support them — have caused traffic to independent websites to plummet, according to Bloomberg interviews with 25 publishers and people who work with them.<br></p>From <a href="https://www.bloomberg.com/news/articles/2025-04-07/google-ai-search-shift-leaves-website-makers-feeling-betrayed" rel="nofollow" class="ellipsis" title="www.bloomberg.com/news/articles/2025-04-07/google-ai-search-shift-leaves-website-makers-feeling-betrayed"><span class="invisible">https://</span><span class="ellipsis">www.bloomberg.com/news/article</span><span class="invisible">s/2025-04-07/google-ai-search-shift-leaves-website-makers-feeling-betrayed</span></a><br><br>Remember when Facebook told everyone they should change all their content to video, because it got more traffic? And then that turned out to be such a blatant falsehood that companies went bankrupt trying to do this?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/google/" rel="tag">#Google</a> <a href="/tags/gemini/" rel="tag">#Gemini</a> <a href="/tags/aislop/" rel="tag">#AISlop</a><br>

Edited 354d ago

Definitely read this whole thread about the eBook manager calibre adding AI slop to "chat with books", and why that's a horrible move that immediately destroys trust in calibre. Here are some highlights I especially appreciated:<br><p>Here, Calibre, in one release, went from a tool readers can use to, well, read, to a tool that fundamentally views books as textureless content, no more than the information contained within them. Anything about presentation, form, perspective, voice, is irrelevant to that view. Books are no longer art, they're ingots of tin to be melted down.<br></p><p>It is completely irrelevant to me whether this new slopware is opt-in or opt-out. Its mere presence and endorsement fundamentally undermines that stance, that it is good, actually, if readers and authors can exist in relationship to each other without also being under the control of a extractive mindset that sees books as mere vehicles, unimportant as artistic works in and of themselves.<br></p><a href="https://wandering.shop/@xgranade/115671289658145064" rel="nofollow" class="ellipsis" title="wandering.shop/@xgranade/115671289658145064"><span class="invisible">https://</span><span class="ellipsis">wandering.shop/@xgranade/11567</span><span class="invisible">1289658145064</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/ebooks/" rel="tag">#eBooks</a> <a href="/tags/ebookmanager/" rel="tag">#eBookManager</a> <a href="/tags/calibre/" rel="tag">#calibre</a> <a href="/tags/aislop/" rel="tag">#AISlop</a><br>

For anyone tracking what's going on with generative AI appearing in the eBook software calibre, the calibre developer seems to be asking us to avoid his software:<br><br>In a <a href="https://github.com/kovidgoyal/calibre/pull/2838#issuecomment-3172625811" rel="nofollow">GitHub pull request</a> about adding LLM features:<br><p>I definitely think allowing the user to continue the conversation is useful. In my own use of LLMs I tend to often ask followup questions, being able to do so in the same window will be useful.<br></p>In other words he likes LLMs and uses them himself; he's probably not adding these features under pressure from users. I can't help but wonder whether there's vibe code in there.<br><br><br>In the <a href="https://bugs.launchpad.net/calibre/+bug/2134316/comments/3" rel="nofollow">bug report</a>:<br><p>Wow, really! What is it with you people that think you can dictate what I choose to do with my time and my software? You find AI offensive, dont use it, or even better, dont use calibre, I can certainly do without users like you. Do NOT try to dictate to other people what they can or cannot do.<br></p>"You people", also known as paying users. He's dismissive of people's concerns about generative AI, and claims ownership of the software ("my software"). He tells people with concerns to get lost, setting up an antagonistic, us-versus-them scenario. We even get scream caps!<br><br>Personally, besides the fact that I have a zero tolerance policy about generative AI, I've had enough of arrogant software developers. 
Read the room.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/calibre/" rel="tag">#calibre</a> <a href="/tags/ebooks/" rel="tag">#eBooks</a> <a href="/tags/ebookmanagers/" rel="tag">#eBookManagers</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/aipoisoning/" rel="tag">#AIPoisoning</a> <a href="/tags/informationoilspill/" rel="tag">#InformationOilSpill</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/foss/" rel="tag">#FOSS</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a><br>

Edited 120d ago

The other day I had the intrusive thought<br><p>AI is intellectual Viagra<br></p>and it hasn't left me so I am exorcising it here. I'm sorry in advance for any pain this might cause.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/software/" rel="tag">#software</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/writing/" rel="tag">#writing</a> <a href="/tags/art/" rel="tag">#art</a> <a href="/tags/visualart/" rel="tag">#VisualArt</a><br>

If only it were this easy in the world.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a><br>

The wrongness density is approaching critical today:<br><br>Headline: The military’s new AI says ‘hypothetical’ boat strike scenario ‘unambiguously illegal’<br><br><a href="https://san.com/cc/the-militarys-new-ai-says-hypothetical-boat-strike-scenario-unambiguously-illegal/" rel="nofollow" class="ellipsis" title="san.com/cc/the-militarys-new-ai-says-hypothetical-boat-strike-scenario-unambiguously-illegal/"><span class="invisible">https://</span><span class="ellipsis">san.com/cc/the-militarys-new-a</span><span class="invisible">i-says-hypothetical-boat-strike-scenario-unambiguously-illegal/</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/militaryai/" rel="tag">#MilitaryAI</a> <a href="/tags/usmilitary/" rel="tag">#USMilitary</a> <a href="/tags/saberrattling/" rel="tag">#SaberRattling</a> <a href="/tags/geopolitics/" rel="tag">#geopolitics</a> <a href="/tags/uspol/" rel="tag">#USPol</a><br><br>cc <span class="h-card"><a href="https://circumstances.run/@davidgerard" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>davidgerard</span></a></span><br>

Edited 116d ago

If you take the stance that technical debt is code nobody understands, then current LLM-based code generators are technical debt generators until somebody reads and understands their output.<br><br>If you take the stance that writing is thinking--that writing is among other things a process by which we order our thoughts--then understanding code generator output will require substantial rewriting of the code by whoever is tasked with converting it from technical debt to technical asset.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/codeassistant/" rel="tag">#CodeAssistant</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/technicaldebt/" rel="tag">#TechnicalDebt</a><br>

Mozilla's new CEO is all-in on AI regardless of what Firefox users want: <a href="https://lwn.net/Articles/1050826/" rel="nofollow"><span class="invisible">https://</span>lwn.net/Articles/1050826/</a><br><p>Third: Firefox will grow from a browser into a broader ecosystem of trusted software. Firefox will remain our anchor. It will evolve into a modern AI browser and support a portfolio of new and trusted software additions.<br></p>He says the word "trust" a whole bunch of times yet intends to turn an otherwise nice web browser into a slop-slinging platform. I don't expect this will work out very well for anyone.<br><br>"It will evolve into a modern AI browser" sounds like a threat. Good way to start off on the right foot, new Mozilla CEO (sarcasm).<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/antifeatures/" rel="tag">#AntiFeatures</a> <a href="/tags/darkpatterns/" rel="tag">#DarkPatterns</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/firefox/" rel="tag">#firefox</a> <a href="/tags/mozilla/" rel="tag">#mozilla</a> <a href="/tags/noai/" rel="tag">#NoAI</a><br>

Edited 110d ago

Bit of a content warning that I'm about to share some <a href="/tags/llm/" rel="tag">#LLM</a>-generated slop. Just two short bits, to make a point.<br><br>I made an <a href="/tags/opentowork/" rel="tag">#OpenToWork</a> post on <a href="/tags/linkedin/" rel="tag">#LinkedIn</a> today partly as an experiment, and as before was immediately inundated with HR bots. I spent a few minutes from time to time stringing one along, out of curiosity. One way I know it's a bot is that no human recruiter would stick with me for that long a duration given the nonsense I was entering. Anyway, at one point it emitted that there was a job it was "recruiting" for, titled "Generative AI & LLM Remediation Consultant | United States (Remote/Onsite)" at a company named "Independent Consultant (Contract Role)". It's fairly clear to me that the bot was tasked with constructing fake job listings based off information people share. I can only guess what its actual purpose is.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/jobhunting/" rel="tag">#JobHunting</a> <a href="/tags/scams/" rel="tag">#scams</a> <a href="/tags/aiscams/" rel="tag">#AIScams</a> <a href="/tags/joblistingscams/" rel="tag">#JobListingScams</a> <a href="/tags/jobscams/" rel="tag">#JobScams</a><br>

I like to poke LinkedIn once in a while with an "AI" critique to see what I can stir up. One reason I do this is to keep an eye on the changing form of the booster rhetoric. Nowadays a lot of folks respond to critique with some form of "today's LLMs are bad but tomorrow's will be amazing", the true believer/quasi-religious response with a touch of false humility for flavor. Yesterday I got an "AI critics are just as bad as AI boosters" false dichotomy, which by my read was a variant of the "AI critics are hysterical and irrational" line, with the twist that the speaker was suggesting that boosters are too. That felt new-ish to me. Granted, the hubristic "we're the smart guys in the room, you should do what we say" framing is ancient in the tech industry. Suggesting the boosters are also not the smart guys in the room is an interesting move because it's an attempt to go meta. Neither the boosters nor the critics are the smart guys in the room; the smart guys in the room are actually the ones who can see that (and so you should do what they say, which is more LLMs always).<br><br><a href="/tags/linkedin/" rel="tag">#LinkedIn</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/llm/" rel="tag">#LLM</a><br>

LLM proponents have forgotten their intellectual roots in TANSTAAFL.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/tanstaafl/" rel="tag">#TANSTAAFL</a> <a href="/tags/nofreelunch/" rel="tag">#NoFreeLunch</a><br>

Regarding last boost: "Firefox For Web Developers" is out here urging me to stop using Firefox.<br><br><a href="/tags/mozilla/" rel="tag">#Mozilla</a> <a href="/tags/firefox/" rel="tag">#Firefox</a> <a href="/tags/darkpatterns/" rel="tag">#DarkPatterns</a> <a href="/tags/antifeatures/" rel="tag">#antifeatures</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/noaiwebbrowsers/" rel="tag">#NoAIWebBrowsers</a> <a href="/tags/aicruft/" rel="tag">#AICruft</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/web/" rel="tag">#web</a><br>

A few inchoate thoughts on Gas Town, since I think this example has more to it than “it’s just a meth binge/crypto scam/one-shot AI poisoning”. Part of the reason I think this is that some of the rhetoric it deploys dovetails perfectly with broader trends and phenomena, and I think it's worth pulling those out.<br><br>1. Economists from the physiocrats (18th century) onward promised society freedom from material deprivation and hard physical labor in exchange for submitting to an economic arrangement of society<br>2. In a country like the US, material deprivation and hard physical labor have been significantly reduced since then:<br><p>Though too many clearly still suffer too much, a large proportion of people live free from fear of starvation or lack of shelter<br>The US has deindustrialized, meaning hard physical labor is not the reality for a lot of people. For a lot of people labor is emotional or symbolic (“knowledge work”)<br>In other words, for lots of people the economic promise has been fulfilled</p>3. Having to think hard is one of the service economy’s analogs for hard physical labor. If the promise of economics is to be continually pursued--meaning it maintains the promise that if we collectively submit to it, in exchange we will enjoy a freedom--a natural target of the promise is providing freedom from the need to think hard<br><p>It is not coincidental that “Gas Town”’s announcement post mentioned Towers of Hanoi, an undergraduate CS student homework problem that for most students requires thinking hard. It’s designed to encourage a kind of “eureka” moment where recursion as a computer programming technique becomes more clear. GT claims to fulfill the promise of not having to think hard like this anymore: the LLMs will do that thinking for you<br>It is not coincidental that Gas Town is described as being very expensive. Economic power in the form of asset accumulation is what earns you freedom in this way of conceiving things. 
If you want the freedom from having to think hard, you’d better accumulate assets<br>Since the promise is greater collective freedom, endeavoring to accumulate assets is, in this view, a collective good<br>This differs from effective altruism and other “do good by doing well” conceptions. Rather, the very mechanism of economics produces collective wealth, so the story goes, which means the more active one is as an economic agent, the more collective good one produces (“wealth” and “good” being conflated)<br>Accumulation of assets is the scorecard, so to speak, of such enhanced economic activity, and the individual reward can then be freedom from having to think hard</p>4. Expending significant resources is viewed as a good in itself from a (naive) evolutionary perspective<br><p>Lotka’s maximum power principle (supposedly) dictates that those entities that transform the most power into useful organization are most fit from an evolutionary standpoint<br>Ernst Juenger’s notion of “total mobilization” brings this principle to politics/political economy/geopolitics: those nations that “totally” mobilize their national resources are the ones that will dominate geopolitically<br>See, for instance, the RAND Corporation’s <a href="https://www.rand.org/nsrd/projects/NDS-commission.html" rel="nofollow">Commission on the National Defense Strategy</a>: “The Commission finds that the U.S. military lacks both the capabilities and the capacity required to be confident it can deter and prevail in combat. It needs to do a better job of incorporating new technology at scale; field more and higher-capability platforms, software, and munitions; and deploy innovative operational concepts to employ them together better.” (emphasis mine). 
In summary: the US is about to be outcompeted (lacks fitness); in response, it should go big (“at scale”, “more”) in an organized way (“deploy innovative operational concepts”, “employ them together better”)<br>The rhetoric around LLM-based AI includes similar language, exemplified in the GT post: burn through as much infrastructural resources as possible to produce organized outputs “at scale”, while avoiding having human beings think too hard to produce those outputs, an indication that the power was burned to produce useful organization<br>LLM-based AI plays a prominent role in US federal government strategy, particularly military strategy, with language about dominance serving to justify its use<br>It is not coincidental that Gas Town uses many orders of magnitude more resources to solve the Towers of Hanoi problem (“Burn All The Gas” Town). This rhetoric dovetails perfectly with the “total mobilization” concept</p><a href="/tags/computerscience/" rel="tag">#ComputerScience</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/gastown/" rel="tag">#GasTown</a> <a href="/tags/economics/" rel="tag">#economics</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a href="/tags/maximumpowerprinciple/" rel="tag">#MaximumPowerPrinciple</a> <a href="/tags/evolution/" rel="tag">#evolution</a> <a href="/tags/evolutionarytheory/" rel="tag">#EvolutionaryTheory</a> <a href="/tags/darwinism/" rel="tag">#Darwinism</a> <a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/us/" rel="tag">#US</a><br>
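
For anyone who hasn't met the Towers of Hanoi exercise mentioned above, here is a minimal sketch of the usual recursive solution in Python. The function name, peg labels, and move-list representation are my own choices for illustration, not anything taken from the Gas Town post. The point is only to show how small the "eureka" idea is: move n-1 disks out of the way, move the largest disk, then move the n-1 disks back on top.

```python
def hanoi(n, source, target, spare, moves=None):
    """Return the list of (from_peg, to_peg) moves that transfers n disks.

    Hypothetical helper for illustration; peg names are arbitrary labels.
    """
    if moves is None:
        moves = []
    if n == 0:
        # Base case: nothing to move.
        return moves
    # Step 1: move the n-1 smaller disks onto the spare peg.
    hanoi(n - 1, source, spare, target, moves)
    # Step 2: move the largest disk directly to the target peg.
    moves.append((source, target))
    # Step 3: move the n-1 smaller disks from the spare peg onto the target.
    hanoi(n - 1, spare, target, source, moves)
    return moves


if __name__ == "__main__":
    for step in hanoi(3, "A", "C", "B"):
        print(step)
```

Three disks take 2**3 - 1 = 7 moves, and the whole solution fits in about a dozen lines that run instantly on any laptop.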

Edited 69d ago

"Water is totally fake."<br><br>-- Sam Altman, super genius<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a><br>

If current-generation LLM-based chatbots can drive people to commit crimes or even take their own lives, what do you suppose Neuralink would do to people?<br><br>The first thing I said to the person who suggested to me that human brains might be directly hooked to a computer, whenever that was, was "everyone will go insane immediately". I still believe that, but now we're beginning to see evidence.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/neuralink/" rel="tag">#Neuralink</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/hci/" rel="tag">#HCI</a> <a href="/tags/brainimplants/" rel="tag">#BrainImplants</a><br>

Am I to understand from this that SearXNG is in the process of becoming AI poisoned?<br><br><p><a href="https://github.com/searxng/searxng/issues/2163" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2163"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2163</span></a><br><a href="https://github.com/searxng/searxng/issues/2008" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2008"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2008</span></a><br><a href="https://github.com/searxng/searxng/issues/2273" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2273"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2273</span></a></p>The last issue hasn't been active since 2023, but the first one has been active recently and the middle one was active last summer.<br><br><a href="/tags/searx/" rel="tag">#SearX</a> <a href="/tags/searxng/" rel="tag">#SearXNG</a> <a href="/tags/searchengines/" rel="tag">#SearchEngines</a> <a href="/tags/alternatesearchengines/" rel="tag">#AlternateSearchEngines</a> <a href="/tags/metasearchengines/" rel="tag">#MetaSearchEngines</a> <a href="/tags/web/" rel="tag">#web</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/foss/" rel="tag">#FOSS</a> <a href="/tags/opensource/" rel="tag">#OpenSource</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/aipoisoning/" rel="tag">#AIPoisoning</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/perplexity/" rel="tag">#Perplexity</a><br>

Edited 76d ago

I guess we shouldn't be surprised, but no way:<br><br>AAAI Launches AI-Powered Peer Review Assessment System<br><br><a href="https://aaai.org/aaai-launches-ai-powered-peer-review-assessment-system/" rel="nofollow" class="ellipsis" title="aaai.org/aaai-launches-ai-powered-peer-review-assessment-system/"><span class="invisible">https://</span><span class="ellipsis">aaai.org/aaai-launches-ai-powe</span><span class="invisible">red-peer-review-assessment-system/</span></a><br><br>No.<br><br>Speaking as someone who has co-organized an AAAI symposium and, among other things, done a bunch of editorial work.<br><br><a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/aioutofscience/" rel="tag">#aIOutOfScience</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/computerscience/" rel="tag">#ComputerScience</a> <a href="/tags/peerreview/" rel="tag">#PeerReview</a><br>

<p>"When generative AI and large language models (LLMs) work, or appear to work, using them truly feels like magic. They can be very empowering, especially when used as an accessibility tool.</p><p>But they are also an unreliable technology built on exploitation of workers, particularly from third world countries, and our environment. Its frequent use may lead to cognitive decline and a loss of skills, and can actually decrease productivity."</p><p><a href="https://stefanbohacek.com/blog/on-generative-ai/" rel="nofollow" class="ellipsis" title="stefanbohacek.com/blog/on-generative-ai/"><span class="invisible">https://</span><span class="ellipsis">stefanbohacek.com/blog/on-gene</span><span class="invisible">rative-ai/</span></a></p><p><a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/ai/" rel="tag">#AI</a></p>

Edited 92d ago

Would the perception that an LLM chatbot is speaking to you be dissolved if it were deterministic instead of stochastic?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/claude/" rel="tag">#Claude</a><br>
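
To make the deterministic/stochastic distinction in the question concrete, here is a toy sketch in Python. It assumes a made-up next-token distribution (the tokens and probabilities are invented, and no real model or vendor API is involved) and contrasts two ways of choosing the next token: always taking the most probable one versus drawing at random in proportion to the probabilities. Real chatbots are far more involved; this only illustrates the two modes.

```python
import random

# Hypothetical next-token distribution; tokens and probabilities are invented.
NEXT_TOKEN_PROBS = {"yes": 0.5, "no": 0.3, "maybe": 0.2}


def greedy_pick(probs):
    """Deterministic choice: always return the most probable token."""
    return max(probs, key=probs.get)


def sampled_pick(probs, rng):
    """Stochastic choice: draw one token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random()
    # The greedy list repeats the same token; the sampled list may vary per run.
    print([greedy_pick(NEXT_TOKEN_PROBS) for _ in range(5)])
    print([sampled_pick(NEXT_TOKEN_PROBS, rng) for _ in range(5)])
```

With the greedy rule, the same prompt gets the same reply every time, which is the scenario the question imagines.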