<p>The DAIR Institute makes sceptical videos warning about the dangerous hype and irresponsible practices currently driving AI, LLMs and related tech. You can follow at:</p><p>➡️ <span class="h-card"><a href="https://peertube.dair-institute.org/accounts/dair" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>dair</span></a></span> </p><p>There are already over 70 videos uploaded. If these haven't federated to your server yet, you can browse them all at <a href="https://peertube.dair-institute.org/a/dair/videos" rel="nofollow" class="ellipsis" title="peertube.dair-institute.org/a/dair/videos"><span class="invisible">https://</span><span class="ellipsis">peertube.dair-institute.org/a/</span><span class="invisible">dair/videos</span></a></p><p>You can also follow DAIR's general social media account at <span class="h-card"><a href="https://dair-community.social/@DAIR" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>DAIR@dair-community.social</span></a></span> </p><p><a href="/tags/featuredpeertube/" rel="tag">#FeaturedPeerTube</a> <a href="/tags/dair/" rel="tag">#DAIR</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/samaltman/" rel="tag">#SamAltman</a> <a href="/tags/sceptic/" rel="tag">#Sceptic</a> <a href="/tags/skeptic/" rel="tag">#Skeptic</a> <a href="/tags/peertube/" rel="tag">#PeerTube</a> <a href="/tags/peertubers/" rel="tag">#PeerTubers</a></p>

llm

<p>Join us at the annual conference of the Association francophone des humanités numériques <a href="/tags/humanistica/" rel="tag">#Humanistica</a> (22-25 Apr. 2025 in <a href="/tags/dakar/" rel="tag">#Dakar</a>) to discover the presentations by the Huma-Num Lab team (CNRS). Adam Faci and Léa Maronet, members of the HN Lab, will present a poster and a talk titled « SegmentArt, une méthode plus rapide pour annoter des images en utilisant SegmentAnything2 » ("SegmentArt, a faster method for annotating images using SegmentAnything2"). An overview of the content is available at <a href="https://hnlab.huma-num.fr/blog/tags/humanistica-2025/" rel="nofollow" class="ellipsis" title="hnlab.huma-num.fr/blog/tags/humanistica-2025/"><span class="invisible">https://</span><span class="ellipsis">hnlab.huma-num.fr/blog/tags/hu</span><span class="invisible">manistica-2025/</span></a> <a href="/tags/ia/" rel="tag">#IA</a> <a href="/tags/veilleesr/" rel="tag">#veilleESR</a> <a href="/tags/cnrs/" rel="tag">#CNRS</a> <a href="/tags/isidore/" rel="tag">#ISIDORE</a> <a href="/tags/ist/" rel="tag">#IST</a> <a href="/tags/découvrabilité/" rel="tag">#découvrabilité</a> <a href="/tags/llm/" rel="tag">#LLM</a></p>

Edited 347d ago

I read <span class="h-card"><a href="https://toot.cafe/@baldur" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>baldur</span></a></span>'s post Let's stop pretending that managers and executives care about productivity today, here: <a href="https://www.baldurbjarnason.com/2025/disingenuous-discourse/" rel="nofollow" class="ellipsis" title="www.baldurbjarnason.com/2025/disingenuous-discourse/"><span class="invisible">https://</span><span class="ellipsis">www.baldurbjarnason.com/2025/d</span><span class="invisible">isingenuous-discourse/</span></a> and felt like riffing a bit on the section "Task sequences as vectors" since I've modeled stuff like this too. As with baldur's post mine is a bit of a gallop, meaning I might make some errors and omissions. The tl;dr is that this model suggests that in many workplaces, mandating AI tool use might have the perverse effect of making the group or workplace less productive overall, even if the tools make individuals more productive (as measured by unit throughput, say).<br><br>The rough idea is to model a bunch of people working in a company using queuing theory. Each person receives tasks to perform, completes each task in sequence, and passes the result onto another person. If a person is busy when they receive a new task, the task goes into a sort of inbox to wait till they're ready to work on it (the "queue" in "queuing theory"). Each person is modeled as a probability distribution, where the mean specifies the average or typical amount of time they take to complete a task, and the variance models the fact that sometimes tasks take more or less time to complete for unaccounted for reasons (you spill your coffee; the previous person did a bang up job that time; etc). 
You can model workplaces with many people, like factories and offices, in this way, and ask questions about how quickly the entire group can complete tasks (throughput, which relates to productivity), how much time passes between an initial incoming request and the output of a final product (latency or wait time), and how much variability there is in the throughput and latency. It's mathmagical!<br><br>Anyhow, baldur points out that the variance in individual task completion is the killer variable here. As you introduce more and more variability in the time-to-complete distribution of individuals, the group's throughput and latency suffer significantly. Depending of course on the structure of the group, there can be phase shifts from "throughput decreases" to "throughput effectively stops altogether" as this variability goes up. He argues, I think correctly, that forcing workers to use generative AI tools in their workflows can increase their task completion time variance. Even worse, even if it does make their individual throughput higher--meaning the tools locally "increase productivity"--an increase in the variance of that throughput can make the overall productivity of the workplace lower despite what seem to be individual gains! A manager that actually did care about productivity would at the very least consider this possibility before mandating the use of such tools.<br><br>I wanted to add that these sorts of phenomena can be even worse depending on how you model time-to-complete. Often a Gaussian distribution (bell curve) is used, reflecting that sometimes tasks can be completed faster and sometimes they take a bit more time, but tend to an average and do not skew towards faster or slower. This is largely the model baldur was discussing. However, knowledge work, and especially work like coding or R&D, are often better modeled by exponential distributions or similarly long-tailed distributions like the gamma distribution. 
With knowledge work, most tasks are completed in an average-ish amount of time, occasionally some are completed more quickly, but more often there are tasks that take 2, 3, sometimes 10 or more times as long as the average case. For the exponential distribution, roughly 5% of tasks take more than three times the mean (e^-3 ≈ 0.05), an "anomalously" long time.<br><br>A sequence of exponentially-distributed tasks has challenging throughput and latency behavior. The sum of independent exponential task times with a common rate follows a gamma distribution, which is also right-skewed and whose mean and variance both grow with the number of tasks in the sequence, meaning delays compound (in baldur's post delays tend to be compensated by symmetrical gains if the group is large enough, but that doesn't happen with long-tailed distributions). I don't know enough about queuing theory to say what the general behavior is, but intuitively it seems it must be equally challenging in real-world arrangements. This is one way to account for why software development projects and R&D projects are almost never completed early and can sometimes take 2 or more times longer than anticipated.<br><br>Adding variance to an exponential distribution--as mandated use of AI tools might do--has the effect of also increasing the mean time to completion, since the standard deviation of an exponential distribution equals its mean. It also flattens/lengthens the tail. I haven't worked it out for other long-tailed distributions but I suspect similar phenomena with those. 
Overall this would be going in the wrong direction, making the killer problem--the long tail--even worse!<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/aimandates/" rel="tag">#AIMandates</a> <a href="/tags/queuingtheory/" rel="tag">#QueuingTheory</a> <a href="/tags/modeling/" rel="tag">#modeling</a> <a href="/tags/workplacemodeling/" rel="tag">#WorkplaceModeling</a> <a href="/tags/productivity/" rel="tag">#productivity</a><br>
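To make the variance argument concrete, here is a minimal simulation sketch. It is an illustration under stated assumptions, not the model from baldur's post or from this thread: it covers a single worker with an inbox (one FIFO queue) rather than a whole workplace, tasks arrive at random (Poisson) at an assumed rate of 0.8 per time unit, and task times are drawn from a gamma distribution so that the mean and the variance can be set separately. The function name and all parameter values are hypothetical.

```python
import random


def mean_time_in_system(mean_service, shape, arrival_rate=0.8,
                        n_tasks=200_000, seed=0):
    """Average time a task spends waiting in the inbox plus being worked on.

    Task times are gamma-distributed with the given mean and shape, so their
    variance is mean_service**2 / shape: a smaller shape means more erratic
    task times. shape=1.0 is exactly the exponential distribution.
    """
    rng = random.Random(seed)
    scale = mean_service / shape      # gamma mean = shape * scale
    wait = 0.0                        # time the current task waits in the inbox
    total = 0.0
    for _ in range(n_tasks):
        service = rng.gammavariate(shape, scale)
        total += wait + service
        gap = rng.expovariate(arrival_rate)   # time until the next task arrives
        # Lindley recursion: the next task waits for whatever work is left over.
        wait = max(0.0, wait + service - gap)
    return total / n_tasks


# Same average task time, increasing variability.
steady = mean_time_in_system(mean_service=1.0, shape=4.0)
exponential = mean_time_in_system(mean_service=1.0, shape=1.0)
# Hypothetical "AI-assisted" worker: 10% faster on average, far more erratic.
faster_but_erratic = mean_time_in_system(mean_service=0.9, shape=0.25)

print(f"steady:             {steady:.2f}")
print(f"exponential:        {exponential:.2f}")
print(f"faster but erratic: {faster_but_erratic:.2f}")
```

In this toy setup the worker who is faster on average but more variable should still show a longer time per task than the steady one, which is the effect described above. A chain of several such workers would need a fuller model.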

Edited 215d ago

<p>What if "42" is just the hallucination of an LLM from the future? 🤔</p><p><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a> <a href="/tags/42/" rel="tag">#42</a></p>

Edited 1y ago

<p>So if you thought that surveillance based ads couldn't get any worse... Meta: Hold my beer!</p><p><a href="/tags/facebook/" rel="tag">#Facebook</a> <a href="/tags/meta/" rel="tag">#Meta</a> <a href="/tags/surveillance/" rel="tag">#surveillance</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/ads/" rel="tag">#Ads</a> </p><p><a href="https://techcrunch.com/2025/05/07/mark-zuckerbergs-ai-ad-tool-sounds-like-a-social-media-nightmare/?utm_campaign=social&utm_source=threads&utm_medium=organic" rel="nofollow" class="ellipsis" title="techcrunch.com/2025/05/07/mark-zuckerbergs-ai-ad-tool-sounds-like-a-social-media-nightmare/?utm_campaign=social&utm_source=threads&utm_medium=organic"><span class="invisible">https://</span><span class="ellipsis">techcrunch.com/2025/05/07/mark</span><span class="invisible">-zuckerbergs-ai-ad-tool-sounds-like-a-social-media-nightmare/?utm_campaign=social&utm_source=threads&utm_medium=organic</span></a></p>

<p>I wrote about using a website's search input to control my smart home (and other things)</p><p><a href="https://tomcasavant.com/your-search-button-powers-my-smart-home/" rel="nofollow" class="ellipsis" title="tomcasavant.com/your-search-button-powers-my-smart-home/"><span class="invisible">https://</span><span class="ellipsis">tomcasavant.com/your-search-bu</span><span class="invisible">tton-powers-my-smart-home/</span></a></p>

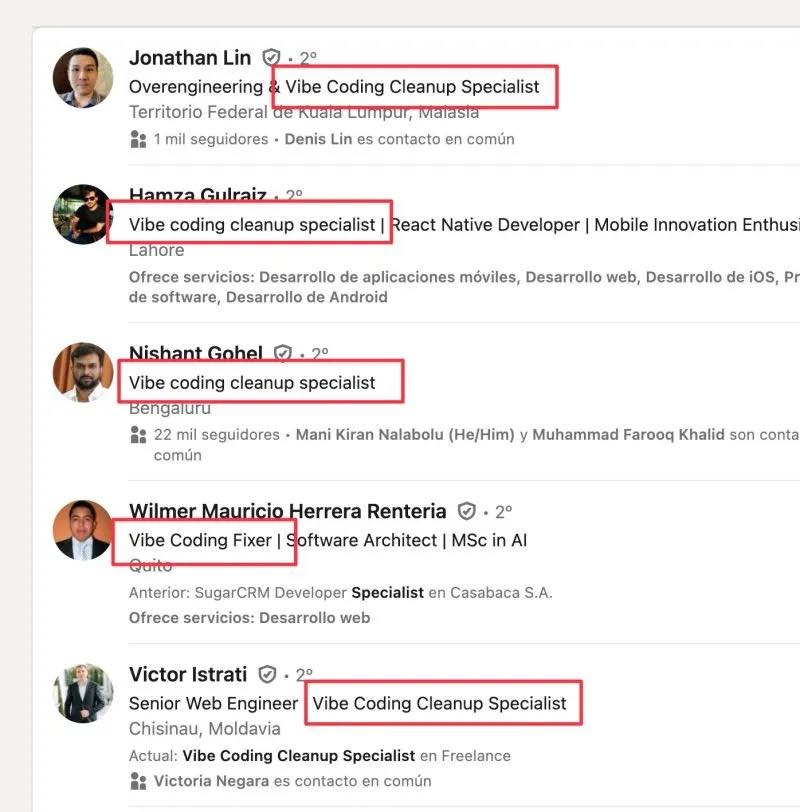

<p>A new type of career opportunity emerged from vibe coding because vibe coders didn't know what they were doing 😉</p><p><a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/llm/" rel="tag">#llm</a> <a href="/tags/jobs/" rel="tag">#jobs</a></p>

<p>Postgres as a search engine<br><a href="https://anyblockers.com/posts/postgres-as-a-search-engine" rel="nofollow" class="ellipsis" title="anyblockers.com/posts/postgres-as-a-search-engine"><span class="invisible">https://</span><span class="ellipsis">anyblockers.com/posts/postgres</span><span class="invisible">-as-a-search-engine</span></a><br><a href="https://news.ycombinator.com/item?id=41343814" rel="nofollow" class="ellipsis" title="news.ycombinator.com/item?id=41343814"><span class="invisible">https://</span><span class="ellipsis">news.ycombinator.com/item?id=4</span><span class="invisible">1343814</span></a></p><p>Build a retrieval system with semantic, full-text, & fuzzy search in Postgres to be used as a backbone in RAG pipelines.</p><p>We’ll combine 3 techniques:</p><p>* full-text search with tsvector<br>* semantic search with pgvector<br>* fuzzy matching with pg_trgm</p><p>* bonus: BM25</p><p><a href="https://en.wikipedia.org/wiki/Okapi_BM25" rel="nofollow" class="ellipsis" title="en.wikipedia.org/wiki/Okapi_BM25"><span class="invisible">https://</span><span class="ellipsis">en.wikipedia.org/wiki/Okapi_BM</span><span class="invisible">25</span></a><br><a href="https://blog.paradedb.com/pages/elasticsearch_vs_postgres" rel="nofollow" class="ellipsis" title="blog.paradedb.com/pages/elasticsearch_vs_postgres"><span class="invisible">https://</span><span class="ellipsis">blog.paradedb.com/pages/elasti</span><span class="invisible">csearch_vs_postgres</span></a><br><a href="https://news.ycombinator.com/item?id=41173288" rel="nofollow" class="ellipsis" title="news.ycombinator.com/item?id=41173288"><span class="invisible">https://</span><span class="ellipsis">news.ycombinator.com/item?id=4</span><span class="invisible">1173288</span></a></p><p><a href="/tags/rdbms/" rel="tag">#RDBMS</a> <a href="/tags/postgres/" rel="tag">#postgres</a> <a href="/tags/postgresql/" rel="tag">#PostgreSQL</a> <a href="/tags/databases/" rel="tag">#databases</a> <a 
href="/tags/search/" rel="tag">#search</a> <a href="/tags/searchengine/" rel="tag">#SearchEngine</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/rag/" rel="tag">#RAG</a> <a href="/tags/bm25/" rel="tag">#BM25</a></p>
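For readers unfamiliar with the BM25 "bonus" item, here is a small pure-Python sketch of the Okapi BM25 ranking function. It only illustrates the scoring formula. It is not how Postgres, tsvector, or any extension implements ranking, and the whitespace tokenizer, the function name `bm25_scores`, the sample documents, and the parameter values `k1=1.5` and `b=0.75` are all assumptions made for the example.

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Score each document against the query with Okapi BM25.

    Assumes naive lowercase whitespace tokenization (no stemming, no stop words).
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs

    # Document frequency: in how many documents does each term occur?
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))

    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue
            # Rare terms get a higher inverse document frequency weight.
            idf = math.log(
                (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1.0
            )
            # Term frequency saturates (k1) and is normalized by length (b).
            tf = term_freq[term]
            norm = tf + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "postgres full text search with tsvector",
    "semantic search with pgvector embeddings",
    "fuzzy matching with pg_trgm trigrams",
]
scores = bm25_scores("full text search", docs)
best = max(range(len(docs)), key=scores.__getitem__)
print(docs[best], scores)
```

The first document matches all three query terms and ranks highest, while the third shares no terms with the query and scores zero.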

Edited 1y ago

R.A. Fisher wrote that the purpose of statisticians was "constructing a hypothetical infinite population of which the actual data are regarded as constituting a random sample." ( p. 311 <a href="https://royalsocietypublishing.org/doi/epdf/10.1098/rsta.1922.0009" rel="nofollow">here</a> ). In <a href="https://www.tandfonline.com/doi/abs/10.1080/00031305.1998.10480528" rel="nofollow">The Zeroth Problem</a> Colin Mallows wrote "As Fisher pointed out, statisticians earn their living by using two basic tricks-they regard data as being realizations of random variables, and they assume that they know an appropriate specification for these random variables."<br><br>Some of the pathological beliefs we attribute to techbros were already present in this view of statistics that started forming over a century ago. Our writing is just data; the real, important object is the “hypothetical infinite population” reflected in a large language model, which at base is a random variable. Stable Diffusion, the image generator, is called that because it is based on <a href="https://proceedings.mlr.press/v37/sohl-dickstein15.pdf" rel="nofollow">latent diffusion models</a>, which are a way of representing complicated distribution functions--the hypothetical infinite populations--of things like digital images. Your art is just data; it’s the latent diffusion model that’s the real deal. The entities that are able to identify the distribution functions (in this case tech companies) are the ones who should be rewarded, not the data generators (you and me).<br><br>So much of the dysfunction in today’s machine learning and AI points to how problematic it is to give statistical methods a privileged place that they don’t merit. 
We really ought to be calling out Fisher for his trickery and seeing it as such.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/stablediffusion/" rel="tag">#StableDiffusion</a> <a href="/tags/statistics/" rel="tag">#statistics</a> <a href="/tags/statisticalmethods/" rel="tag">#StatisticalMethods</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/ml/" rel="tag">#ML</a><br>

Edited 138d ago

<p>The apparatus of a large language model really is remarkable. It takes in billions of pages of writing and figures out the configuration of words that will delight me just enough to feed it another prompt. There’s nothing else like it.<br></p>From ChatGPT Is a Gimmick,<br><a href="https://hedgehogreview.com/web-features/thr/posts/chatgpt-is-a-gimmick" rel="nofollow" class="ellipsis" title="hedgehogreview.com/web-features/thr/posts/chatgpt-is-a-gimmick"><span class="invisible">https://</span><span class="ellipsis">hedgehogreview.com/web-feature</span><span class="invisible">s/thr/posts/chatgpt-is-a-gimmick</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/geneartiveai/" rel="tag">#GeneartiveAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/gpt/" rel="tag">#GPT</a> <a href="/tags/gimmick/" rel="tag">#gimmick</a><br>

<p>Programming properly should be regarded as an activity by which the programmers form or achieve a certain kind of insight, a theory, of the matters at hand. This suggestion is in contrast to what appears to be a more common notion, that programming should be regarded as a production of a program and certain other texts.<br></p>Peter Naur in Programming As Theory Building, 1985.<br><br>A computer program is not source code. It is the combination of source code, related documents, and the mental understanding developed by the people who work with the code and documents regularly. In other words a computer program is a relational structure that necessarily includes human beings.<br><br>The output of a generative AI model alone cannot be a computer program in this sense no matter how closely that output resembles the source code part of some future possible computer program. That the output could be developed into a computer program over time, given the appropriate resources to do so, does not make it equivalent to a computer program.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/copilot/" rel="tag">#Copilot</a> <a href="/tags/agenticcoding/" rel="tag">#AgenticCoding</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/softwareengineering/" rel="tag">#SoftwareEngineering</a> <a href="/tags/programming/" rel="tag">#programming</a> <a href="/tags/coding/" rel="tag">#coding</a><br>

Edited 320d ago

<p>Today and tomorrow colleagues and I are organising a two-day workshop on the use of LLMs for linguistics research at <span class="h-card"><a href="https://wisskomm.social/@UniKoeln" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>UniKoeln</span></a></span>: <a href="https://sfb1252.github.io/llm-workshop/" rel="nofollow" class="ellipsis" title="sfb1252.github.io/llm-workshop/"><span class="invisible">https://</span><span class="ellipsis">sfb1252.github.io/llm-workshop</span><span class="invisible">/</span></a>. This was originally intended as a small, university-internal event, but we have found that there is a lot of interest from colleagues and students about this topic so we are delighted to have 15 poster presentations, in addition to four brilliant keynote speakers, and a concluding panel discussion. 1/🧵 </p><p><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/research/" rel="tag">#research</a> <a href="/tags/academia/" rel="tag">#academia</a> <a href="/tags/linguistics/" rel="tag">#linguistics</a></p>

Edited 133d ago

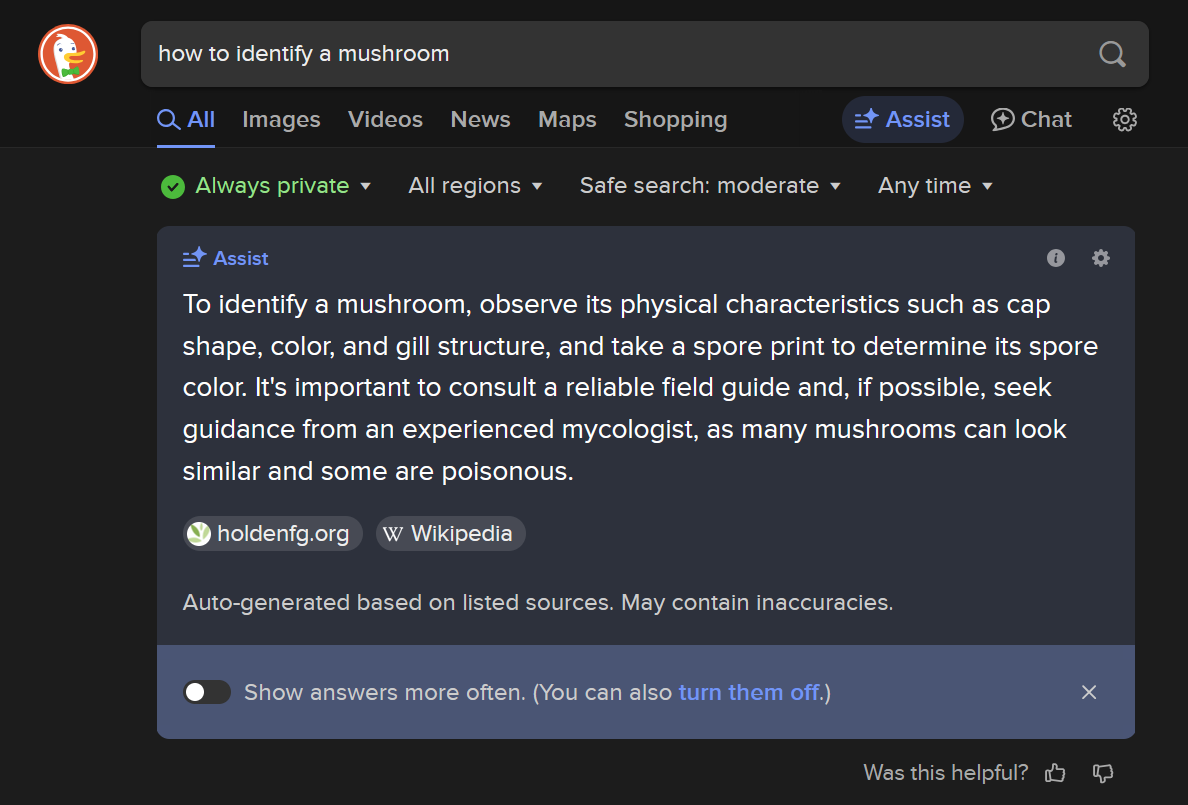

<p>I have what I think is a good example of how useless ‘AI’ is for understanding. I am tagging widely. I searched “how to identify mushrooms” on DuckDuckGo, which then so helpfully spammed my screen with this lovely advice (see image with alt text). The source of much of my knowledge is mushroomexpert.com, managed by Michael Kuo.</p><p>“A mushroom is identified by its characteristics”. I could get semantic here too about the definition of a mushroom, but talk about a pretty useless statement. Fine though. That’s well and good if you want an explanation that is super entry level. That’s not necessarily a bad thing, though I don’t remember telling the ‘AI’ that I wanted only entry level information.</p><p>Then it talks about the danger in attempting to ID mushrooms because of the potential for poisoning. It tacitly assumes that my wanting to ID a mushroom means I want to eat it. I don’t. I just like mushrooms. I have a problem with the whole ‘some are poisonous’ throw-in, like it’s something their lawyers required them to include. How many are poisonous? 90%? 5%? We have no idea, and that’s OK. I didn’t tell the ‘AI’ that I wanted information on whether or not they were poisonous. But, as I’ll get to, the fact that this is included is not my problem. My problem is what they don’t include.</p><p>I think mushrooms are awesome. I think the fact that some of them are poisonous is relevant only based on the human-centric assumptions ‘AI’ is so obsessed with and what its dataset is built on. I don’t see the value in a mushroom based on whether or not I can eat it, and it chafes me that they don’t also include any information about their ecological roles. You know what is a great way to identify a mushroom (including if I want to eat it)?!?!?! Their ecology (essentially, their ‘behavior’)!!! Let’s be sure to not mention that, <a href="/tags/techbros/" rel="tag">#TechBros</a>.</p><p>Ok let’s keep going, cause we’ve made it this far. 
It suggests talking to a <a href="/tags/mycologist/" rel="tag">#mycologist</a>. It turns out that I don’t have any experienced mycologists on call. Mycologists are helpful but busy people. And I’m more likely than most of the population to know mycologists. You might as well say, ‘don’t bother trying to ID the mushroom’. Way to kill my interest immediately in something I’m trying to get into. If you really want to learn to ID mushrooms for foraging, there are sources you can look up to help you.</p><p>I’ll get to my main point. Identification of certain mushroom forming fungi to species is essentially impossible. Look up Amanitas or Russulas on mushroomexpert.com (phenomenal source, old school blogging). There is no clear delineation of what a mushroom forming species even is. Scientists argue over and reclassify bird subspecies all the time. Imagine the black box that is mushroom forming fungi, which most of the time is a web of single-cell wide threads hidden in the soil. Some mushrooms historically were ‘IDed’ (scientifically) by taste or color, which, as you all know, everyone experiences the same way, all the time. And, darnit, I happened to leave my DNA sequencing kit at home (as if there aren’t issues with classifying mushroom forming fungi on their DNA alone).</p><p>If ‘AI’ were functional, to me, it would include the suggestion that one option is, instead of focusing on species, focus on species groupings (this also applies to foraging for mushrooms if done thoughtfully). Species groupings can be more useful, as is sometimes saying: “I don’t need to know exactly what this is. I’ll just focus on its ecology instead of obsessing over an arbitrary definition”. 
This nuance is not something that can be corrected with better algorithms or more training data (in fact, it’s going to get worse), because <a href="/tags/llm/" rel="tag">#LLM</a> s are designed to spit out the lowest common denominator.</p><p>In the end, given all the questions I brought up, the biggest problem I have with ‘AI’ is that it falsely assumes something gigantic about the question I am asking and gives a simplified and highly misleading perception of how much we actually know. I think it makes a big mistake assuming that I am uncurious and want a bare-minimum answer. And when it comes to the grand total of all there is to know about mushroom forming fungi, we know next to nothing. Of course, 'AI' cannot say that because 'AI' doesn't know what it doesn't know.</p><p>You know who can identify and communicate all of these nuances? Humans. </p><p><a href="/tags/nature/" rel="tag">#nature</a> <a href="/tags/mushrooms/" rel="tag">#mushrooms</a> <a href="/tags/fungi/" rel="tag">#fungi</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/artificialintelligence/" rel="tag">#artificialIntelligence</a> <a href="/tags/ecology/" rel="tag">#ecology</a> <a href="/tags/solarpunk/" rel="tag">#solarPunk</a> <a href="/tags/ecologicalreciprocity/" rel="tag">#EcologicalReciprocity</a></p>

<p>Please, don't use any <a href="/tags/llm/" rel="tag">#LLM</a> service to generate some report you don't plan to check really carefully yourself in every detail.</p><p>I've read one with clearly hallucinated stuff all over it.</p><p>It doesn't push your productivity, it really destroys your credibility.</p><p>This technology is no productivity miracle, it's an answer simulator.</p><p><a href="/tags/pim/" rel="tag">#PIM</a> <a href="/tags/microsoft/" rel="tag">#Microsoft</a> <a href="/tags/copilot/" rel="tag">#CoPilot</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a></p>

This Thanksgiving I am celebrating the AI revolution by putting the turkey, mashed potatoes, cranberry sauce, and bean casserole through a blender and serving Generative Alimentary Infusion to all my guests.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/thanksgiving/" rel="tag">#Thanksgiving</a><br>

<p>A surge in new datacenters, each with the power demand of 100,000 households and a cooling water demand of 1,000,000 m³ per year to train AI models on material obtained without consent on hardware now unaffordable to consumers so fascism-adjacent tech billionaires can sell us the idea that any skill is now worthless and in doing so creating the largest economic bubble ever while simultaneously destroying society and environment. </p><p>I think that about sums it up. </p><p><a href="/tags/genai/" rel="tag">#genai</a> <a href="/tags/llm/" rel="tag">#llm</a></p>

<p>DeepSeek launched a free, open-source large-language model in late December, claiming it was developed in just two months at a cost of under $6 million — a much smaller expense than the one called for by Western counterparts.<br><br>These developments have stoked concerns about the amount of money big tech companies have been investing in AI models and data centers, and raised alarm that the U.S. is not leading the sector as much as previously believed.<br></p>The "Western counterparts" are claiming training a model might take years and billions of dollars. This has always been a hyped-up grift, with snake oil salesmen and con artists being showered with money and power. It's really quite amazing how profoundly unintelligent "the market" is in practice.<br><br>The sad reality is that the US could lead in this field (1), if we'd stop routinely putting narcissists and con artists in charge and showering them with praise even when they fail.<br><br>From <a href="https://www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html" rel="nofollow" class="ellipsis" title="www.cnbc.com/2025/01/27/nvidia-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html"><span class="invisible">https://</span><span class="ellipsis">www.cnbc.com/2025/01/27/nvidia</span><span class="invisible">-falls-10percent-in-premarket-trading-as-chinas-deepseek-triggers-global-tech-sell-off.html</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/snakeoil/" rel="tag">#SnakeOil</a> <a href="/tags/hype/" rel="tag">#hype</a> <a href="/tags/grift/" rel="tag">#grift</a> <a href="/tags/marketcapitalism/" rel="tag">#MarketCapitalism</a><br><br>(1) Putting aside whether we should, which is an important question.<br>

<p>OpenAI says it has evidence that DeepSeek used "model distillation" to train its models using OpenAI's models.<br><br>- The Verge's article also notes that the accusation is rather ironic, given that OpenAI pioneered the practice of appropriating internet data to train its own models.<br>- 404 Media's mockery is more direct: "Hahahahahahahahahahahahahahahaha hahahhahahahahahahahahahahaha".<br><br><a href="https://theverge.com/news/601195/openai-evidence-deepseek-distillation-ai-data" rel="nofollow">theverge.com/~</a><br><a href="https://404media.co/openai-furious-deepseek-might-have-stolen-all-the-data-openai-stole-from-us/" rel="nofollow">404media.co/~</a><br>seealso: <a href="https://news.ycombinator.com/item?id=42865527" rel="nofollow">HackerNews:42865527</a><br><br><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/deepseek/" rel="tag">#DeepSeek</a> <a href="/tags/today/" rel="tag">#today</a><br><br><a href="https://t.me/outvivid/4662" rel="nofollow">Original Telegram post</a></p>

<p>Did you know? <a href="/tags/fedify/" rel="tag">#Fedify</a> provides <a href="/tags/documentation/" rel="tag">#documentation</a> optimized for LLMs through the <a href="https://llmstxt.org/" rel="nofollow">llms.txt standard</a>.</p><p>Available endpoints:</p><p><a href="https://fedify.dev/llms.txt" rel="nofollow"><span class="invisible">https://</span>fedify.dev/llms.txt</a> — Core documentation overview<br><a href="https://fedify.dev/llms-full.txt" rel="nofollow"><span class="invisible">https://</span>fedify.dev/llms-full.txt</a> — Complete documentation dump</p><p>Useful for training <a href="/tags/ai/" rel="tag">#AI</a> assistants on <a href="/tags/activitypub/" rel="tag">#ActivityPub</a>/<a href="/tags/fediverse/" rel="tag">#fediverse</a> development, building documentation chatbots, or <a href="/tags/llm/" rel="tag">#LLM</a>-powered dev tools.</p><p><a href="/tags/fedidev/" rel="tag">#fedidev</a></p>

If you turn the sink off when you're done using it to conserve water, or turn the lights off when you leave the room to conserve electricity, why do you use ChatGPT or other AI tools? Using those sorts of tools a few times a month negates whatever you've conserved by being prudent in other parts of your life.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/waste/" rel="tag">#waste</a> <a href="/tags/environment/" rel="tag">#environment</a><br>

<p>Resistance to the coup is the defense of the human against the digital and the democratic against the oligarchic.<br></p>From <a href="https://snyder.substack.com/p/of-course-its-a-coup" rel="nofollow" class="ellipsis" title="snyder.substack.com/p/of-course-its-a-coup"><span class="invisible">https://</span><span class="ellipsis">snyder.substack.com/p/of-cours</span><span class="invisible">e-its-a-coup</span></a><br><br>Defense of the human against the digital has been my mission for some time. Resisting the narratives about how <a href="/tags/llms/" rel="tag">#LLMs</a> "reason", "pass the Turing test", "diagnose illnesses", or are "better than humans" in various ways is part of it. Resisting the false narrative that we're on the verge of discovering <a href="/tags/agi/" rel="tag">#AGI</a> is part of it. Allowing these false stories to persist and spread means succumbing to very dark anti-human forces. We're seeing some of the consequences now, and we're seeing how far this might go.<br><br><a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

<p>yo fedi, how are you today?</p><p>I'm trying to find the absolute best, well-argumented (!) <a href="/tags/llm/" rel="tag">#LLM</a> takedown blogpost you've read lately.. preferably related to software development.</p><p>Unfortunately my boss is on the hype-train (random glory posts in slack) and I'd like to counter him just a wee bit. Bring it on 😁</p><p>Boosting appreciated for reach <img src="https://neodb.social/media/emoji/post.lurk.org/boost_anim_vanilla.png" class="emoji" alt=":boost_anim_vanilla:" title=":boost_anim_vanilla:"></p>

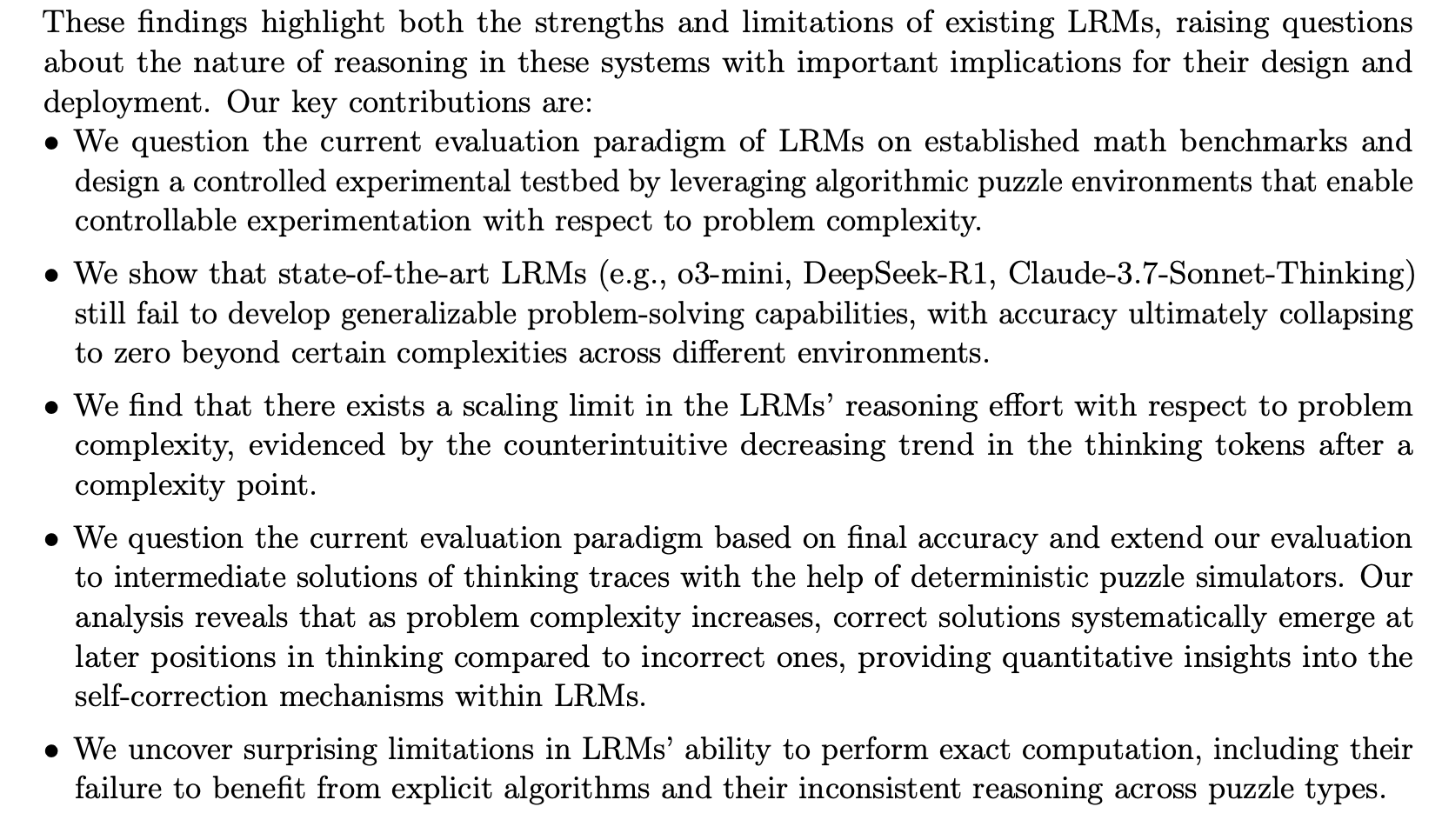

<p>On the limits of LLMs (Large Language Models) and LRMs (Large Reasoning Models). The TL;DR: "Our findings reveal fundamental limitations in current models: despite sophisticated self-reflection mechanisms, these models fail to develop generalizable reasoning capabilities beyond certain complexity thresholds." Meaning: accuracy collapse.</p><p>Interesting paper from Apple. <a href="https://ml-site.cdn-apple.com/papers/the-illusion-of-thinking.pdf" rel="nofollow" class="ellipsis" title="ml-site.cdn-apple.com/papers/the-illusion-of-thinking.pdf"><span class="invisible">https://</span><span class="ellipsis">ml-site.cdn-apple.com/papers/t</span><span class="invisible">he-illusion-of-thinking.pdf</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/lrm/" rel="tag">#LRM</a></p>

Edited 303d ago

<p>Senators Demand Transparency on Canceled Veterans Affairs Contracts<br>—</p><p>Following a ProPublica investigation into how DOGE had developed an error-prone AI tool to determine which VA contracts should be killed, a trio of lawmakers said the Trump administration continues to “stonewall” their requests for details.</p><p><a href="https://www.propublica.org/article/doge-ai-veterans-affairs-canceled-contracts-senators-trump?utm_source=mastodon&utm_medium=social&utm_campaign=mastodon-post" rel="nofollow" class="ellipsis" title="www.propublica.org/article/doge-ai-veterans-affairs-canceled-contracts-senators-trump?utm_source=mastodon&utm_medium=social&utm_campaign=mastodon-post"><span class="invisible">https://</span><span class="ellipsis">www.propublica.org/article/dog</span><span class="invisible">e-ai-veterans-affairs-canceled-contracts-senators-trump?utm_source=mastodon&utm_medium=social&utm_campaign=mastodon-post</span></a></p><p><a href="/tags/news/" rel="tag">#News</a> <a href="/tags/doge/" rel="tag">#DOGE</a> <a href="/tags/veterans/" rel="tag">#Veterans</a> <a href="/tags/va/" rel="tag">#VA</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/technology/" rel="tag">#Technology</a> <a href="/tags/government/" rel="tag">#Government</a></p>

Regarding the last couple of boosts: among other downsides, LLMs encourage people to take long-term risks for perceived, but not always actual, short-term gains. They bet the long-term value of their education on a chance at short-term grade inflation, or they bet the long-term security and maintainability of their software codebase on a chance at short-term productivity gains. My read is that more and more data suggest that these are bad bets for most people.<br><br>In that respect they're very much like gambling. The messianic fantasies some ChatGPT users have been experiencing fit this picture as well.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/gpt/" rel="tag">#GPT</a> <a href="/tags/gemini/" rel="tag">#Gemini</a> <a href="/tags/gamblingaddiction/" rel="tag">#GamblingAddiction</a> <a href="/tags/nihilism/" rel="tag">#nihilism</a><br>

Edited 294d ago