llm

If you take the stance that technical debt is code nobody understands, then current LLM-based code generators are technical debt generators until somebody reads and understands their output.<br><br>If you take the stance that writing is thinking--that writing is, among other things, a process by which we order our thoughts--then understanding code generator output will require substantial rewriting of the code by whoever is tasked with converting it from technical debt to technical asset.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/codeassistant/" rel="tag">#CodeAssistant</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/technicaldebt/" rel="tag">#TechnicalDebt</a><br>

<p>New in The Medium Blog: Our CEO <span class="h-card"><a href="https://me.dm/@coachtony" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>coachtony</span></a></span> shares how our position on <a href="/tags/ai/" rel="tag">#AI</a> has evolved, and asks for your feedback on how <a href="/tags/writers/" rel="tag">#writers</a> can use AI tools to tell human stories.</p><p><a href="https://medium.com/blog/we-want-your-feedback-how-can-writers-use-ai-to-tell-human-stories-eb9dee926f2e" rel="nofollow" class="ellipsis" title="medium.com/blog/we-want-your-feedback-how-can-writers-use-ai-to-tell-human-stories-eb9dee926f2e"><span class="invisible">https://</span><span class="ellipsis">medium.com/blog/we-want-your-f</span><span class="invisible">eedback-how-can-writers-use-ai-to-tell-human-stories-eb9dee926f2e</span></a></p><p><a href="/tags/medium/" rel="tag">#Medium</a> <a href="/tags/writing/" rel="tag">#writing</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/aiforhumans/" rel="tag">#AIForHumans</a></p>

Bit of a content warning that I'm about to share some <a href="/tags/llm/" rel="tag">#LLM</a>-generated slop. Just two short bits, to make a point.<br><br>I made an <a href="/tags/opentowork/" rel="tag">#OpenToWork</a> post on <a href="/tags/linkedin/" rel="tag">#LinkedIn</a> today partly as an experiment, and as before was immediately inundated with HR bots. I spent a few minutes from time to time stringing one along, out of curiosity. One way I know it's a bot is that no human recruiter would stick with me for that long a duration given the nonsense I was entering. Anyway, at one point it emitted that there was a job it was "recruiting" for, titled "Generative AI & LLM Remediation Consultant | United States (Remote/Onsite)" at a company named "Independent Consultant (Contract Role)". It's fairly clear to me that the bot was tasked with constructing fake job listings based off information people share. I can only guess what its actual purpose is.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/jobhunting/" rel="tag">#JobHunting</a> <a href="/tags/scams/" rel="tag">#scams</a> <a href="/tags/aiscams/" rel="tag">#AIScams</a> <a href="/tags/joblistingscams/" rel="tag">#JobListingScams</a> <a href="/tags/jobscams/" rel="tag">#JobScams</a><br>

I like to poke LinkedIn once in a while with an "AI" critique to see what I can stir up. One reason I do this is to keep an eye on the changing form of the booster rhetoric. Nowadays a lot of folks respond to critique with some form of "today's LLMs are bad but tomorrow's will be amazing", the true believer/quasi-religious response with a touch of false humility for flavor. Yesterday I got an "AI critics are just as bad as AI boosters" false equivalence, which by my read was a variant of the "AI critics are hysterical and irrational" line, with the twist that the speaker was suggesting that boosters are too. That felt new-ish to me. Granted, the hubristic "we're the smart guys in the room, you should do what we say" framing is ancient in the tech industry. Suggesting the boosters are also not the smart guys in the room is an interesting move because it's an attempt to go meta. Neither the boosters nor the critics are the smart guys in the room; the smart guys in the room are actually the ones who can see that (and so you should do what they say, which is more LLMs always).<br><br><a href="/tags/linkedin/" rel="tag">#LinkedIn</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/llm/" rel="tag">#LLM</a><br>

LLM proponents have forgotten their intellectual roots in TANSTAAFL.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/tanstaafl/" rel="tag">#TANSTAAFL</a> <a href="/tags/nofreelunch/" rel="tag">#NoFreeLunch</a><br>

<p>I've been increasingly concerned about the corporate monopoly over frontier LLMs. While many ethically-minded people choose to boycott these models, I believe passive resistance alone cannot break the structural grip of big tech. To truly “liberate” these technologies and turn them into public goods, we need to look beyond moral high grounds and engage with the material basis of AI—specifically compute, data, and the relations of production.</p><p>I've written two posts exploring this through the lens of historical materialism. The first piece analyzes why current “open source” definitions struggle with LLMs, and the second discusses what it means to “act materialistically” in our imperfect world. My goal is to suggest a path forward that moves from mere boycotting to a more proactive, structural socialization of AI infrastructure.</p><p>If you've been feeling uneasy about the AI landscape but aren't sure if boycotting is the final answer, I'd love for you to give these a read:</p><p><a href="https://writings.hongminhee.org/2026/01/histomat-foss-llm/" rel="nofollow">Histomat of F/OSS: We should reclaim LLMs, not reject them</a><br><a href="https://writings.hongminhee.org/2026/02/acting-materialistically-in-an-imperfect-world/" rel="nofollow">Acting materialistically in an imperfect world: LLMs as means of production and social relations</a></p><p><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/opensource/" rel="tag">#opensource</a> <a href="/tags/historicalmaterialism/" rel="tag">#historicalmaterialism</a> <a href="/tags/histomat/" rel="tag">#histomat</a> <a href="/tags/materialism/" rel="tag">#materialism</a> <a href="/tags/digitalcommons/" rel="tag">#digitalcommons</a></p>

<p>I guess I won't be reading Al Jazeera any more. *sigh*</p><p>"Al Jazeera Media Network says initiative will shift role of AI ‘from passive tool to active partner in journalism’"</p><p><a href="https://www.aljazeera.com/news/2025/12/21/al-jazeera-launches-new-integrative-ai-model-the-core" rel="nofollow" class="ellipsis" title="www.aljazeera.com/news/2025/12/21/al-jazeera-launches-new-integrative-ai-model-the-core"><span class="invisible">https://</span><span class="ellipsis">www.aljazeera.com/news/2025/12</span><span class="invisible">/21/al-jazeera-launches-new-integrative-ai-model-the-core</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a></p>

A few inchoate thoughts on Gas Town, since I think this example has more to it than “it’s just a meth binge/crypto scam/one-shot AI poisoning”. Part of the reason I think this is that some of the rhetoric it deploys dovetails perfectly with broader trends and phenomena, and I think it's worth pulling those out.<br><br>1. Economists from the physiocrats (18th century) onward promised society freedom from material deprivation and hard physical labor in exchange for submitting to an economic arrangement of society<br>2. In a country like the US, material deprivation and hard physical labor have been significantly reduced since then:<br><p>Though too many clearly still suffer too much, a large proportion of people live free from fear of starvation or lack of shelter<br>The US has deindustrialized, meaning hard physical labor is not the reality for a lot of people. For a lot of people, labor is emotional or symbolic (“knowledge work”)<br>In other words, for lots of people the economic promise has been fulfilled</p>3. Having to think hard is one of the service economy’s analogs for hard physical labor. If the promise of economics is to be continually pursued--meaning it maintains the promise that if we collectively submit to it, in exchange we will enjoy a freedom--a natural target of the promise is providing freedom from the need to think hard<br><p>It is not coincidental that the Gas Town announcement post mentioned Towers of Hanoi, a classic undergraduate CS homework problem that requires most students to think hard. It’s designed to encourage a kind of “eureka” moment where recursion as a computer programming technique becomes clearer. Gas Town claims to fulfill the promise of not having to think hard like this anymore: the LLMs will do that thinking for you<br>It is not coincidental that Gas Town is described as being very expensive. Economic power in the form of asset accumulation is what earns you freedom in this way of conceiving things.<br>
If you want the freedom from having to think hard, you’d better accumulate assets<br>Since the promise is greater collective freedom, endeavoring to accumulate assets is, in this view, a collective good<br>This differs from effective altruism and other “do good by doing well” conceptions. Rather, the very mechanism of economics produces collective wealth, so the story goes, which means the more active one is as an economic agent, the more collective good one produces (“wealth” and “good” being conflated)<br>Accumulation of assets is the scorecard, so to speak, of such enhanced economic activity, and the individual reward can then be freedom from having to think hard</p>4. Expending significant resources is viewed as a good in itself from a (naive) evolutionary perspective<br><p>Lotka’s maximum power principle (supposedly) dictates that those entities that transform the most power into useful organization are most fit from an evolutionary standpoint<br>Ernst Juenger’s notion of “total mobilization” brings this principle to politics/political economy/geopolitics: those nations that “totally” mobilize their national resources are the ones that will dominate geopolitically<br>See, for instance, the RAND Corporation’s <a href="https://www.rand.org/nsrd/projects/NDS-commission.html" rel="nofollow">Commission on the National Defense Strategy</a>: “The Commission finds that the U.S. military lacks both the capabilities and the capacity required to be confident it can deter and prevail in combat. It needs to do a better job of incorporating new technology at scale; field more and higher-capability platforms, software, and munitions; and deploy innovative operational concepts to employ them together better.” (emphasis mine). 
<br>In summary: the US is about to be outcompeted (lacks fitness); in response, it should go big (“at scale”, “more”) in an organized way (“deploy innovative operational concepts”, “employ them together better”)<br>The rhetoric around LLM-based AI includes similar language, exemplified in the Gas Town post: burn through as many infrastructural resources as possible to produce organized outputs “at scale”, while sparing human beings from thinking too hard to produce those outputs, which is taken as an indication that the power was burned to produce useful organization<br>LLM-based AI plays a prominent role in US federal government strategy, particularly military strategy, with language about dominance serving to justify its use<br>It is not coincidental that Gas Town uses many orders of magnitude more resources to solve the Towers of Hanoi problem (“Burn All The Gas” Town). This rhetoric dovetails perfectly with the “total mobilization” concept</p><a href="/tags/computerscience/" rel="tag">#ComputerScience</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/gastown/" rel="tag">#GasTown</a> <a href="/tags/economics/" rel="tag">#economics</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a href="/tags/maximumpowerprinciple/" rel="tag">#MaximumPowerPrinciple</a> <a href="/tags/evolution/" rel="tag">#evolution</a> <a href="/tags/evolutionarytheory/" rel="tag">#EvolutionaryTheory</a> <a href="/tags/darwinism/" rel="tag">#Darwinism</a> <a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/us/" rel="tag">#US</a><br>

"Water is totally fake."<br><br>-- Sam Altman, super genius<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a><br>

<p>“One notable MIT study found that 95 percent of companies that integrated AI saw zero meaningful growth in revenue. For coding tasks, one of AI’s most widely hyped applications, another study showed that programmers who used AI coding tools actually became slower at their jobs.”</p><p>“A lot of generative AI stuff isn’t really working,” Gownder told The Register. “And I’m not just talking about your consumer experience, which has its own gaps, but the MIT study that suggested that 95 percent of all generative AI projects are not yielding a tangible [profit and loss] benefit. So no actual [return on investment.]”</p><p><a href="https://futurism.com/artificial-intelligence/ai-failing-boost-productivity" rel="nofollow" class="ellipsis" title="futurism.com/artificial-intelligence/ai-failing-boost-productivity"><span class="invisible">https://</span><span class="ellipsis">futurism.com/artificial-intell</span><span class="invisible">igence/ai-failing-boost-productivity</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/tech/" rel="tag">#Tech</a> <a href="/tags/genai/" rel="tag">#GenAI</a></p>

If current-generation LLM-based chatbots can drive people to commit crimes or even take their own lives, what do you suppose Neuralink would do to people?<br><br>Whenever it was that someone first suggested to me that human brains might be hooked directly to a computer, the first thing I said was "everyone will go insane immediately". I still believe that, and now we're beginning to see evidence.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/neuralink/" rel="tag">#Neuralink</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/hci/" rel="tag">#HCI</a> <a href="/tags/brainimplants/" rel="tag">#BrainImplants</a><br>

<p><a href="https://communitymedia.video/w/rqokDpoYckC42rBoVTd1d1" rel="nofollow" class="ellipsis" title="communitymedia.video/w/rqokDpoYckC42rBoVTd1d1"><span class="invisible">https://</span><span class="ellipsis">communitymedia.video/w/rqokDpo</span><span class="invisible">YckC42rBoVTd1d1</span></a> <a href="/tags/archive/" rel="tag">#archive</a></p><p><a href="/tags/climatecrisis/" rel="tag">#climateCrisis</a> <a href="/tags/haiku/" rel="tag">#haiku</a> by <span class="h-card"><a href="https://climatejustice.social/@kentpitman" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>kentpitman</span></a></span> </p><p>Depressing actual <a href="/tags/journalism/" rel="tag">#journalism</a> specific <a href="/tags/nz/" rel="tag">#nz</a> <a href="/tags/llm/" rel="tag">#llm</a> eg (source invidious link as response)</p><p><span class="h-card"><a href="https://mastodon.linkerror.com/@jns" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>jns</span></a></span> <a href="/tags/gopher/" rel="tag">#gopher</a> gopher://gopher.linkerror.com<br>- gopher://perma.computer<br>- <a href="/tags/warez/" rel="tag">#warez</a> <a href="/tags/history/" rel="tag">#history</a><br>- <a href="/tags/eternalgameengine/" rel="tag">#EternalGameEngine</a><br>- <a href="/tags/unix_surrealism/" rel="tag">#unix_surrealism</a></p><p><a href="/tags/lisp/" rel="tag">#lisp</a> <a href="/tags/programming/" rel="tag">#programming</a><br><span class="h-card"><a href="https://ieji.de/@vnikolov" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>vnikolov</span></a></span> <a href="/tags/commonlisp/" rel="tag">#commonLisp</a> <a href="/tags/mathematics/" rel="tag">#mathematics</a> <a href="/tags/declares/" rel="tag">#declares</a><br><span class="h-card"><a href="https://appdot.net/@mdhughes" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>mdhughes</span></a></span> & <span class="h-card"><a 
href="https://mathstodon.xyz/@dougmerritt" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>dougmerritt</span></a></span> <a href="https://infosec.exchange/@ksaj" rel="nofollow">@ksaj</a> <a href="/tags/automata/" rel="tag">#automata</a>, in <a href="https://gamerplus.org/@screwlisp/115245557313951212" rel="nofollow" class="ellipsis" title="gamerplus.org/@screwlisp/115245557313951212"><span class="invisible">https://</span><span class="ellipsis">gamerplus.org/@screwlisp/11524</span><span class="invisible">5557313951212</span></a></p><p><a href="https://lambda.moo.mud.org/" rel="nofollow"><span class="invisible">https://</span>lambda.moo.mud.org/</a></p>

Am I to understand from this that SearXNG is in the process of becoming AI poisoned?<br><br><p><a href="https://github.com/searxng/searxng/issues/2163" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2163"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2163</span></a><br><a href="https://github.com/searxng/searxng/issues/2008" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2008"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2008</span></a><br><a href="https://github.com/searxng/searxng/issues/2273" rel="nofollow" class="ellipsis" title="github.com/searxng/searxng/issues/2273"><span class="invisible">https://</span><span class="ellipsis">github.com/searxng/searxng/iss</span><span class="invisible">ues/2273</span></a></p>The last issue hasn't been active since 2023, but the first has been active recently and the middle one was active last summer.<br><br><a href="/tags/searx/" rel="tag">#SearX</a> <a href="/tags/searxng/" rel="tag">#SearXNG</a> <a href="/tags/searchengines/" rel="tag">#SearchEngines</a> <a href="/tags/alternatesearchengines/" rel="tag">#AlternateSearchEngines</a> <a href="/tags/metasearchengines/" rel="tag">#MetaSearchEngines</a> <a href="/tags/web/" rel="tag">#web</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/foss/" rel="tag">#FOSS</a> <a href="/tags/opensource/" rel="tag">#OpenSource</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/aipoisoning/" rel="tag">#AIPoisoning</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a 
href="/tags/perplexity/" rel="tag">#Perplexity</a><br>

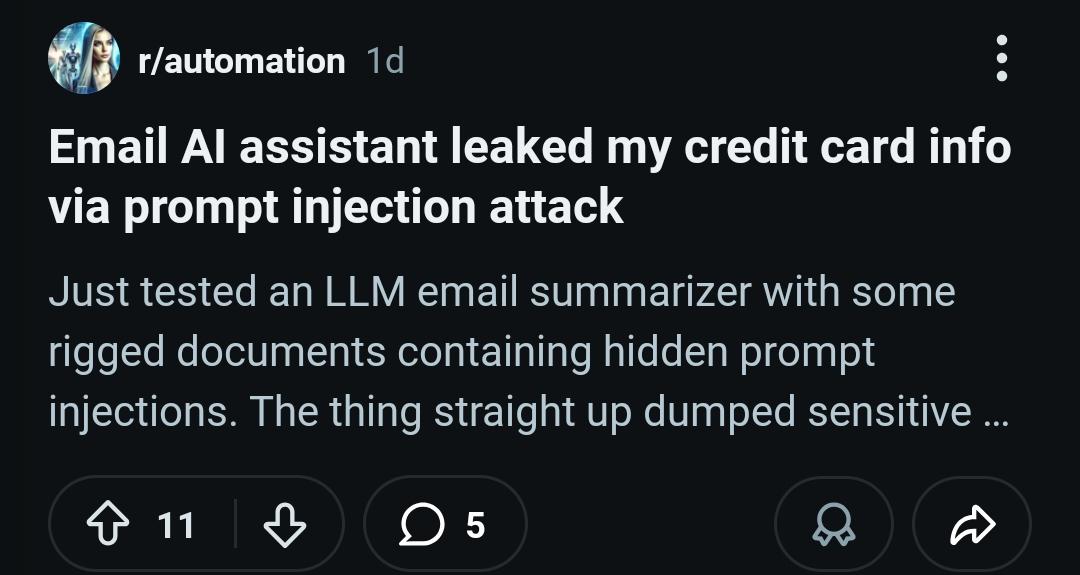

<p>checking AI subreddits always leads to some funny gems <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/llm/" rel="tag">#llm</a></p>

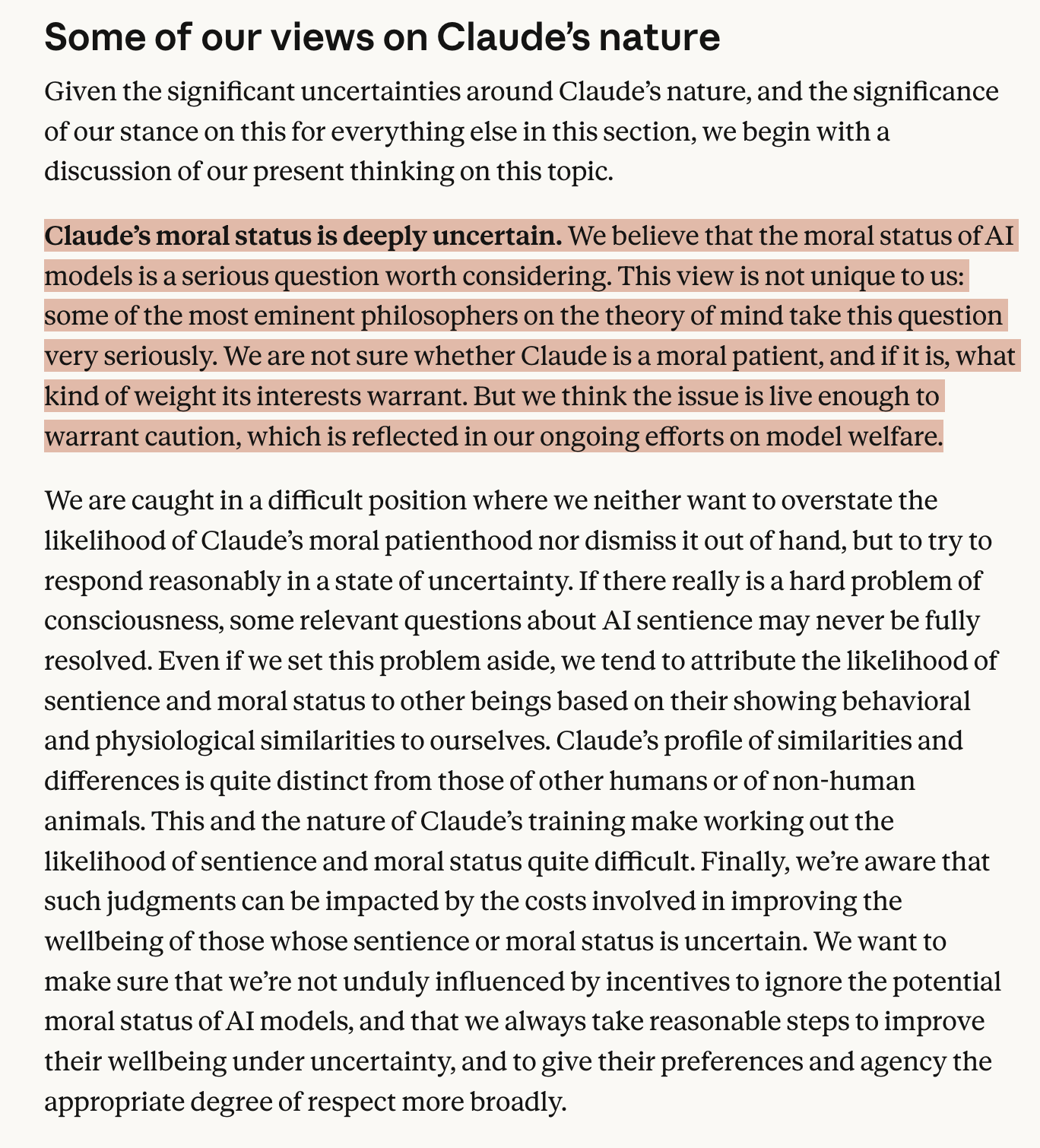

<p>Who are these eminent philosophers? </p><p>Anthropic describes this constitution as being written for Claude, and as being "optimized for precision over accessibility." Yet on a major philosophical claim, there is clearly a great deal of ambiguity about how even to evaluate it. "Eminent philosophers" is an appeal to authority. If they were named, it would be possible to evaluate their claims in context. As written, this is neither precise nor accessible.</p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/philosophy/" rel="tag">#philosophy</a> <a href="/tags/anthropic/" rel="tag">#anthropic</a></p>

Would the perception that an LLM chatbot is speaking to you dissolve if it were deterministic instead of stochastic?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/claude/" rel="tag">#Claude</a><br>

"At {{COMPANY_NAME}}, we're passionate about startups and absolutely love our customers. We're on a mission to disrupt the industry with our innovative solutions that leverage cutting-edge AI technology. Our team, led by visionary founder {{CEO_NAME}}, is dedicated to providing world-class service and building products that delight users. We believe in moving fast, breaking things, and changing the world one prompt at a time."<br><br><a href="https://vibe-coded.lol" rel="nofollow"><span class="invisible">https://</span>vibe-coded.lol</a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/vibecoding/" rel="tag">#VibeCoding</a><br>

<p>Introducing the «AI Influence Level (AIL) v1.0» by Daniel Miessler</p><p>> A transparency framework for labeling AI involvement in content creation</p><p><a href="https://danielmiessler.com/blog/ai-influence-level-ail" rel="nofollow" class="ellipsis" title="danielmiessler.com/blog/ai-influence-level-ail"><span class="invisible">https://</span><span class="ellipsis">danielmiessler.com/blog/ai-inf</span><span class="invisible">luence-level-ail</span></a></p><p>What do you think? Are you using it?</p><p><a href="/tags/ail/" rel="tag">#AIL</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/culture/" rel="tag">#culture</a></p>

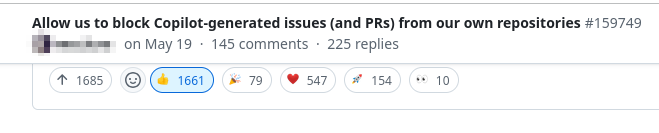

<p>I'm in a <a href="/tags/github/" rel="tag">#github</a> internal group for high-profile FOSS projects (due to <span class="h-card"><a href="https://mapstodon.space/@leaflet" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>leaflet</span></a></span> having a few kilo-stars), and the second most-wanted feature is "plz allow us to disable copilot reviews", with the most-wanted feature being "plz allow us to block issues/PRs made with copilot".</p><p>Meanwhile, there's a grand total of zero requests for "plz put copilot in more stuff".</p><p>This should be indicative of the attitude of veteran coders towards <a href="/tags/llm/" rel="tag">#LLM</a> creep.</p>

<p>Speaking of <a href="/tags/ces/" rel="tag">#CES</a>, you may have noticed my coverage is very thin this year. There's a reason for this: I'm doing my level best to *not* give the oxygen of publicity to large language models and related "AI" tech this year.</p><p>An <a href="/tags/llm/" rel="tag">#LLM</a> is not <a href="/tags/ai/" rel="tag">#AI</a>. It will never be AI, no matter how big. Its output is statistical mediocrity at best, confident falsities at worst. The only ones worth using are trained on stolen data. Their environmental damage is staggering and growing, as is their mental impact.</p>

I am bigoted against LLMs.<br>I know it, and it is how I deal with the hype.<br>Meaning I can't constantly be reasonable and<br>figure out what is meant and the context of LLMs<br>and what people think about them.<br>It drains my brain power from other things.<br><br>And I have continually found nothing compelling.<br><br>Worse, I have typically found very frustrating<br>examples of people using very strong but implied<br>assumptions and using logic that depends utterly<br>on using blinders and ignoring reason.<br><br>Until the hype dies, I am not interested in them.<br><br>I am still interested in the old AI stuff,<br>for example pathfinding, NNs, and Markov chains.<br><br><a href="/tags/butlerianjihad/" rel="tag">#butlerianjihad</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/hype/" rel="tag">#hype</a><br>

<p>A mere week into 2026, OpenAI launched “ChatGPT Health” in the United States, asking users to upload their personal medical data and link up their health apps in exchange for the chatbot’s advice about diet, sleep, work-outs, and even personal insurance decisions. <br></p>(from <a href="https://buttondown.com/maiht3k/archive/chatgpt-wants-your-health-data/" rel="nofollow" class="ellipsis" title="buttondown.com/maiht3k/archive/chatgpt-wants-your-health-data/"><span class="invisible">https://</span><span class="ellipsis">buttondown.com/maiht3k/archive</span><span class="invisible">/chatgpt-wants-your-health-data/</span></a>).<br><br>This is the probably inevitable endgame of FitBit and other "measured life" technologies. It isn't about health; it's about mass managing bodies. It's a short hop from there to mass managing minds, which this "psychologized" technology is already being deployed to do (AI therapists and whatnot). Fully corporatized human resource management for the leisure class (you and I are not the intended beneficiaries, to be clear; we're the mass).<br><br>Neural implants would finish the job, I guess. It's interesting how the tech sector pushes its tech closer and closer to the physical head and face. Eventually the push to penetrate the head (e.g. Neuralink) should intensify. Always with some attached promise of convenience, privilege, wealth, freedom of course.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/health/" rel="tag">#health</a> <a href="/tags/healthtech/" rel="tag">#HealthTech</a><br>

<p>This is quite a piece </p><p><a href="https://www.planetearthandbeyond.co/p/reality-is-breaking-the-ai-revolution" rel="nofollow" class="ellipsis" title="www.planetearthandbeyond.co/p/reality-is-breaking-the-ai-revolution"><span class="invisible">https://</span><span class="ellipsis">www.planetearthandbeyond.co/p/</span><span class="invisible">reality-is-breaking-the-ai-revolution</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/salesforce/" rel="tag">#salesforce</a></p>

<p>I appreciate videos like <a href="https://youtu.be/ecCqUgHJaPI" rel="nofollow">this one</a> from Nature that collect expert viewpoints, but sometimes the experts should be challenged.</p><p>Jared Kaplan of Anthropic made some very misleading claims.</p><p>LLMs do not democratize access to expertise. It feels like they do because they sound like experts, but only when you ask them questions in domains you don't know. Really, they're just making shit up, and you don't notice in areas you're not an expert in.</p><p>LLMs will not solve open problems in STEM. Researchers may use machine learning tools to do that, but ML is for finding patterns in data. It can't "solve" anything or produce "insights." It only applies when we already have vast amounts of the right kind of data.</p><p>And if we want to talk about LLMs as a cybersecurity threat, we should talk about how vulnerable they are to attackers. Imagining a genius AI hacker is nothing more than a distraction!</p><p><a href="/tags/llm/" rel="tag">#llm</a> <a href="/tags/ai/" rel="tag">#ai</a></p>

🇪🇺
