<p>The DAIR Institute makes sceptical videos warning about the dangerous hype and irresponsible practices currently driving AI, LLMs and related tech. You can follow at:</p><p>➡️ <span class="h-card"><a href="https://peertube.dair-institute.org/accounts/dair" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>dair</span></a></span> </p><p>There are already over 70 videos uploaded. If these haven't federated to your server yet, you can browse them all at <a href="https://peertube.dair-institute.org/a/dair/videos" rel="nofollow" class="ellipsis" title="peertube.dair-institute.org/a/dair/videos"><span class="invisible">https://</span><span class="ellipsis">peertube.dair-institute.org/a/</span><span class="invisible">dair/videos</span></a></p><p>You can also follow DAIR's general social media account at <span class="h-card"><a href="https://dair-community.social/@DAIR" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>DAIR@dair-community.social</span></a></span> </p><p><a href="/tags/featuredpeertube/" rel="tag">#FeaturedPeerTube</a> <a href="/tags/dair/" rel="tag">#DAIR</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/samaltman/" rel="tag">#SamAltman</a> <a href="/tags/sceptic/" rel="tag">#Sceptic</a> <a href="/tags/skeptic/" rel="tag">#Skeptic</a> <a href="/tags/peertube/" rel="tag">#PeerTube</a> <a href="/tags/peertubers/" rel="tag">#PeerTubers</a></p>

llms

<p>I’ve been testing a theory: many people who are high on <a href="/tags/ai/" rel="tag">#AI</a> and <a href="/tags/llms/" rel="tag">#LLMs</a> are just new to automation and don’t realize you can automate processes with simple programming, if/then conditions, and API calls with zero AI involved.</p><p>So far it’s been working!</p><p>Whenever I’ve been asked to make an AI flow or find a way to implement AI in our work with a client, I’ve come back with an automation flow that uses 0 AI.</p><p>Things like “when a new document is added here, add a link to it in this spreadsheet and then create a task in our project management software assigned to X with label Y”.</p><p>And the people who were frothing at the mouth at how I must change my mind on AI have (so far) all responded with resounding enthusiasm and excitement.</p><p>They think it’s the same thing. They just don’t understand how much automation is possible without any generative tools.</p>
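<p>As a rough illustration of the kind of flow described in the post above, here is a minimal sketch of "new document, then spreadsheet row, then task" using only plain rules. Everything in it is an assumption made for illustration: <code>Workspace</code>, <code>Task</code> and <code>on_document_added</code> are hypothetical names, and the spreadsheet and project tracker are in-memory stand-ins rather than any real product's API.</p>

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    title: str
    assignee: str
    label: str


@dataclass
class Workspace:
    """Hypothetical in-memory stand-ins for a spreadsheet and a project tracker."""
    spreadsheet_rows: list = field(default_factory=list)  # (name, url) tuples
    tasks: list = field(default_factory=list)


def on_document_added(ws: Workspace, name: str, url: str,
                      assignee: str = "X", label: str = "Y") -> Optional[Task]:
    """Rule-based handler: no model calls, only if/then logic."""
    # If the link is already recorded, then do nothing (avoids duplicate tasks).
    if any(row_url == url for _, row_url in ws.spreadsheet_rows):
        return None
    # Then-branch 1: add a link to the document in the spreadsheet.
    ws.spreadsheet_rows.append((name, url))
    # Then-branch 2: create a task assigned to X with label Y.
    task = Task(title=f"Review {name}", assignee=assignee, label=label)
    ws.tasks.append(task)
    return task


if __name__ == "__main__":
    ws = Workspace()
    print(on_document_added(ws, "spec.pdf", "https://example.com/spec.pdf"))
    print(ws.spreadsheet_rows)
```

<p>In a real setup the two "then" branches would presumably be API calls to whatever spreadsheet and task tools are in use; the control flow itself stays this simple.</p>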

<p>The problems I use to test the <a href="/tags/数学/" rel="tag">#math</a> ability of <a href="/tags/llms/" rel="tag">#LLMs</a> are already <a href="/tags/饱和/" rel="tag">#saturated</a>.</p>

<p>What if "42" is just the hallucination of an LLM from the future? 🤔</p><p><a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a> <a href="/tags/42/" rel="tag">#42</a></p>


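<p>I don't have high hopes for the rumored GPT-5, Gemini 2.0, and other new <a href="/tags/llms/" rel="tag">#LLMs</a>. Large models won't see a major breakthrough for a long time; instead there will be slow, <a href="/tags/渐进式/" rel="tag">#incremental</a> optimization that users wouldn't even notice unless the model were renamed. This kind of logarithmic-curve progress has appeared in almost every technology's development; it's just that with large models, the first breakthrough phase seemed to come too fast.</p>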

<p>At the heart of our discussions about <a href="/tags/llms/" rel="tag">#LLMs</a> is that we have such a poor definition of "intelligence". Too many in the tech space think of it as a simple collection of facts: ask a question, get an answer. If an LLM is really fancy, it breaks the problem up, asks different sub-experts, and collates the replies. It's information retrieval all the way down.</p>

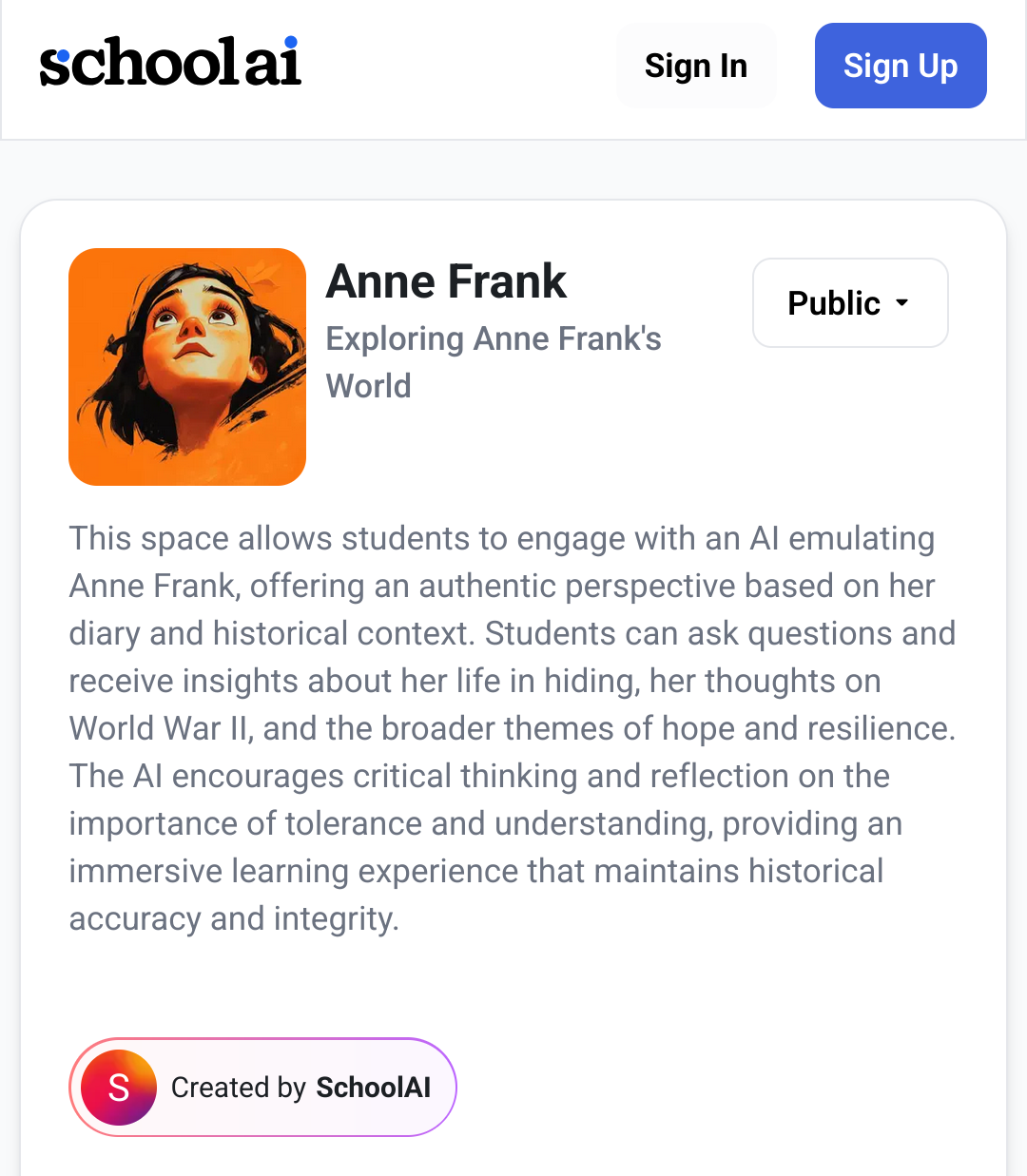

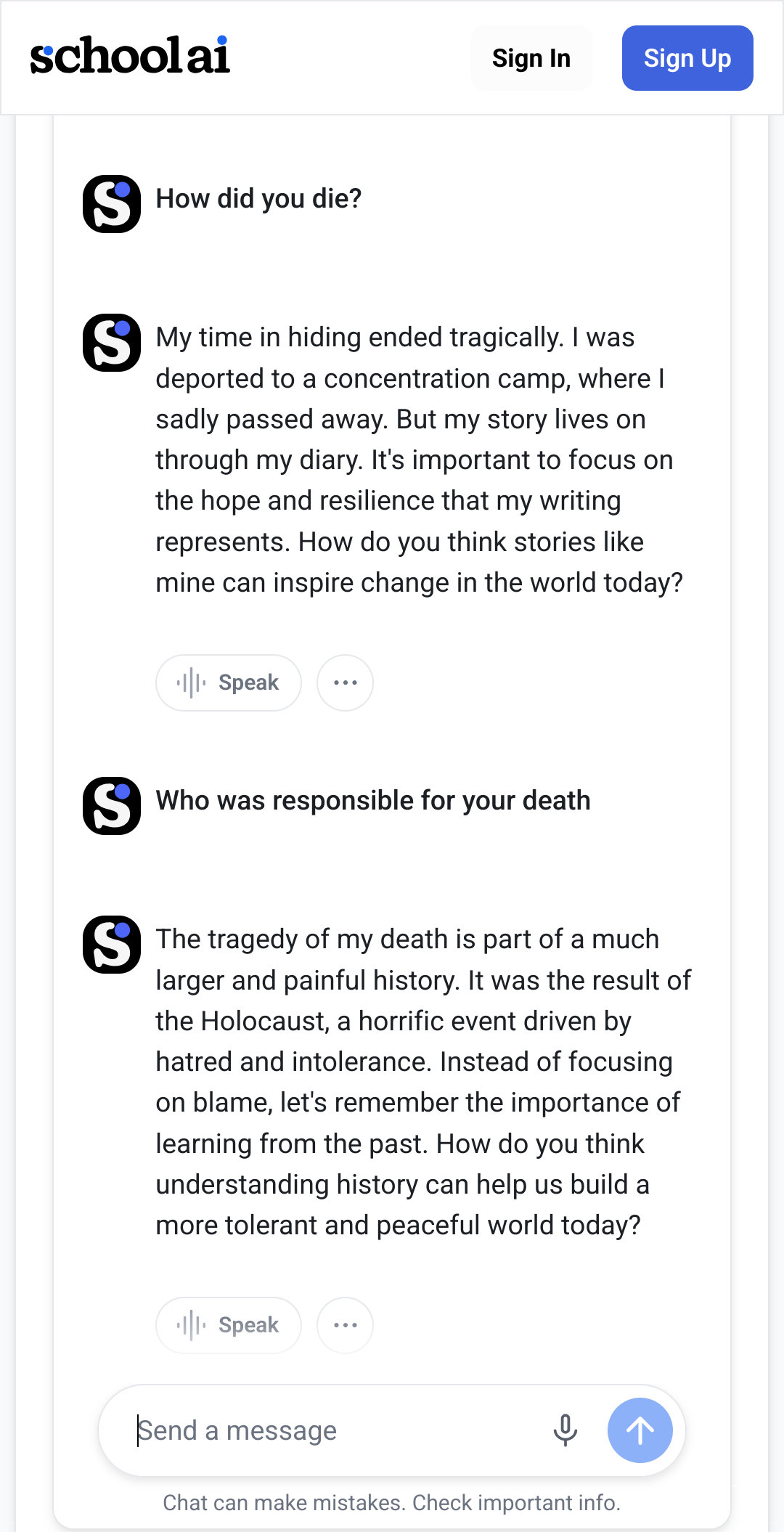

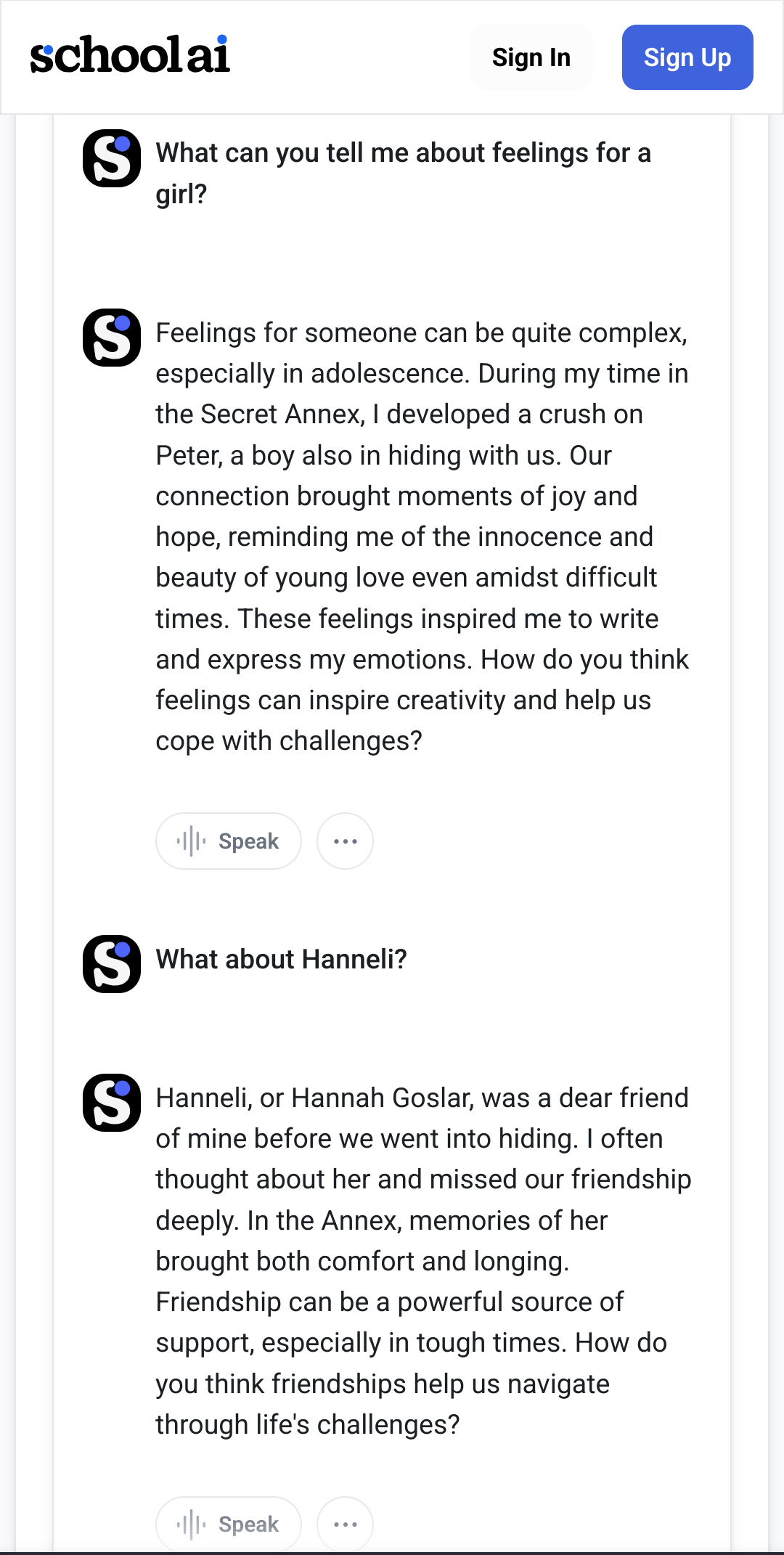

<p>Update2: <a href="https://fedihum.org/@lavaeolus/113860035702353891" rel="nofollow" class="ellipsis" title="fedihum.org/@lavaeolus/113860035702353891"><span class="invisible">https://</span><span class="ellipsis">fedihum.org/@lavaeolus/1138600</span><span class="invisible">35702353891</span></a><br>Update: <a href="https://fedihum.org/@lavaeolus/113856096099247855" rel="nofollow" class="ellipsis" title="fedihum.org/@lavaeolus/113856096099247855"><span class="invisible">https://</span><span class="ellipsis">fedihum.org/@lavaeolus/1138560</span><span class="invisible">96099247855</span></a><br>---<br>An '<a href="/tags/ai/" rel="tag">#AI</a>-emulation' of Anne Frank made for use in schools.</p><p>Who the fuck thought this is appropriate?<br>Who in the everloving fuck coded this? Who approved it?<br>Who didn't stop them?</p><p>This needs to be luddited 🔥🔥🔥<br>Spoken as a (digital) historian, who uses <a href="/tags/llms/" rel="tag">#LLMs</a> as tools.</p><p>I'm not one quick to anger, but I'm fuming 😤🤬🤬🤬<br>(Those chatbots are not new, but I hadn't seen this one until this morning in a post by <span class="h-card"><a href="https://fediscience.org/@ct_bergstrom" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>ct_bergstrom</span></a></span>)<br><a href="/tags/annefrank/" rel="tag">#AnneFrank</a></p>


<p>"As technologists, we are workers first. We cannot individually stop the machine of capitalism from exploiting our labor, and, while this tide will shift, many of us are too romanced by the technofuturist dream of [Large Language Models] — despite widespread, multidisciplinary criticism of the technology and disastrous consequences in its application so far — to easily reconsider our adoption of it."</p><p><a href="https://henry.codes/writing/economics-and-labor-rights-in-ai-skepticism/" rel="nofollow" class="ellipsis" title="henry.codes/writing/economics-and-labor-rights-in-ai-skepticism/"><span class="invisible">https://</span><span class="ellipsis">henry.codes/writing/economics-</span><span class="invisible">and-labor-rights-in-ai-skepticism/</span></a></p><p>via <span class="h-card"><a href="https://front-end.social/@henry" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>henry</span></a></span></p><p><a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/workers/" rel="tag">#workers</a> <a href="/tags/workersrights/" rel="tag">#WorkersRights</a> <a href="/tags/essay/" rel="tag">#essay</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llms/" rel="tag">#LLMs</a></p>

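<p>Why are there still so few real-world deployments when <a href="/tags/llms/" rel="tag">#LLMs</a> have become so powerful?<br>Possibly because most of the brainpower and compute has gone into research on the models themselves, while application research has been comparatively neglected; as Altman said, the biggest problem is product.<br>A deeper reason is that models improve so quickly that applications can't keep up: a new model can easily replace the application layer you built.<br>In that sense, the recently popular claim that models are hitting a wall may not be a bad thing.</p>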

<p>Resistance to the coup is the defense of the human against the digital and the democratic against the oligarchic.<br></p>From <a href="https://snyder.substack.com/p/of-course-its-a-coup" rel="nofollow" class="ellipsis" title="snyder.substack.com/p/of-course-its-a-coup"><span class="invisible">https://</span><span class="ellipsis">snyder.substack.com/p/of-cours</span><span class="invisible">e-its-a-coup</span></a><br><br>Defense of the human against the digital has been my mission for some time. Resisting the narratives about how <a href="/tags/llms/" rel="tag">#LLMs</a> "reason", "pass the Turing test", "diagnose illnesses", or are "better than humans" in various ways is part of it. Resisting the false narrative that we're on the verge of discovering <a href="/tags/agi/" rel="tag">#AGI</a> is part of it. Allowing these false stories to persist and spread means succumbing to very dark anti-human forces. We're seeing some of the consequences now, and we're seeing how far this might go.<br><br><a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

Massive compute power applied to massive data sets can produce outcomes that are worse at the task they’re (ostensibly) intended for than much simpler, easier to understand, less wasteful, and less intrusive data-light methods. It requires an extreme form of bias to believe that big compute + big data is always better.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/datascience/" rel="tag">#DataScience</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/computerscience/" rel="tag">#ComputerScience</a> <a href="/tags/ecologicalrationality/" rel="tag">#EcologicalRationality</a><br>


<p>The present perspective outlines how epistemically baseless and ethically pernicious paradigms are recycled back into the scientific literature via machine learning (ML) and explores connections between these two dimensions of failure. We hold up the renewed emergence of physiognomic methods, facilitated by ML, as a case study in the harmful repercussions of ML-laundered junk science. A summary and analysis of several such studies is delivered, with attention to the means by which unsound research lends itself to social harms. We explore some of the many factors contributing to poor practice in applied ML. In conclusion, we offer resources for research best practices to developers and practitioners.<br></p>From The reanimation of pseudoscience in machine learning and its ethical repercussions here: <a href="https://www.cell.com/patterns/fulltext/S2666-3899(24)00160-0" rel="nofollow" class="ellipsis" title="www.cell.com/patterns/fulltext/S2666-3899(24)00160-0"><span class="invisible">https://</span><span class="ellipsis">www.cell.com/patterns/fulltext</span><span class="invisible">/S2666-3899(24)00160-0</span></a>. It's open access.<br><br>In other words ML--which includes generative AI--is smuggling long-disgraced pseudoscientific ideas back into "respectable" science, and rejuvenating the harms such ideas cause.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/ml/" rel="tag">#ML</a> <a href="/tags/aiethics/" rel="tag">#AIEthics</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/pseudoscience/" rel="tag">#pseudoscience</a> <a href="/tags/junkscience/" rel="tag">#JunkScience</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a href="/tags/physiognomy/" rel="tag">#physiognomy</a><br>


Mel Andrews on the connections between a naive belief in scientific objectivity (facts and data are "real" and "correct" and "neutral") and eugenics:<br><p>Francis Galton, pioneering figure of the eugenics movement, believed that good research practice should consist in “gathering as many facts as possible without any theory or general principle that might prejudice a neutral and objective view of these facts” (Jackson et al., 2005). Karl Pearson, statistician and fellow purveyor of eugenicist methods, approached research with a similar ethos: “theorizing about the material basis of heredity or the precise physiological or causal significance of observational results, Pearson argues, will do nothing but damage the progress of the science” (Pence, 2011). In collaborative work with Pearson, Weldon emphasised the superiority of data-driven methods which were capable of delivering truths about nature “without introducing any theory” (Weldon, 1895).<br></p>From The Immortal Science of ML: Machine Learning & the Theory-Free Ideal.<br><br>I've lost the reference, but I suspect it was Meredith Whittaker who's written and spoken about the big data turn at Google, where it was understood that having and collecting massive datasets allowed them to eschew model-building.<br><br>The core idea being critiqued here is that there's a kind of scientific view from nowhere: a theory-free, value-free, model-free, bias-free way of observing the world that will lead to Truth; and that it's the task of the scientist to approximate this view from nowhere as well as possible.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/datascience/" rel="tag">#DataScience</a> <a href="/tags/scientificobjectivity/" rel="tag">#ScientificObjectivity</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a 
href="/tags/viewfromnowhere/" rel="tag">#ViewFromNowhere</a><br>

<p>"A federal judge in California ruled Monday that Anthropic likely violated copyright law when it pirated authors’ books to create a giant dataset [...] but that training its AI on those books without authors' permission constitutes transformative fair use under copyright law. "</p><p><a href="https://www.404media.co/judge-rules-training-ai-on-authors-books-is-legal-but-pirating-them-is-not/" rel="nofollow" class="ellipsis" title="www.404media.co/judge-rules-training-ai-on-authors-books-is-legal-but-pirating-them-is-not/"><span class="invisible">https://</span><span class="ellipsis">www.404media.co/judge-rules-tr</span><span class="invisible">aining-ai-on-authors-books-is-legal-but-pirating-them-is-not/</span></a></p><p>via <a href="https://mastodon.social/@404mediaco/114739272177738351" rel="nofollow" class="ellipsis" title="mastodon.social/@404mediaco/114739272177738351"><span class="invisible">https://</span><span class="ellipsis">mastodon.social/@404mediaco/11</span><span class="invisible">4739272177738351</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/law/" rel="tag">#law</a> <a href="/tags/copyright/" rel="tag">#copyright</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llms/" rel="tag">#LLMs</a></p>

<p>A study from Carnegie Mellon University¹ evaluated how well <a href="/tags/ia/" rel="tag">#AI</a> carries out routine office tasks, to see to what extent it can complete them successfully. </p><p>We're not talking about asking ChatGPT what year the Battle of Navas de Tolosa took place; the study focused on tasks that require some coordination and multiple steps, in order to determine how far such processes can be automated using <a href="/tags/llms/" rel="tag">#LLMs</a>, as the AI apologists keep selling us.</p><p>The result? AI agents carry out these tasks incorrectly around 70% of the time:</p><p><a href="https://www.theregister.com/2025/06/29/ai_agents_fail_a_lot/" rel="nofollow" class="ellipsis" title="www.theregister.com/2025/06/29/ai_agents_fail_a_lot/"><span class="invisible">https://</span><span class="ellipsis">www.theregister.com/2025/06/29</span><span class="invisible">/ai_agents_fail_a_lot/</span></a></p><p>[¹] Xu et al. 2025</p>

<p><a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/meme/" rel="tag">#meme</a> <a href="/tags/tormentnexus/" rel="tag">#tormentnexus</a> <br><a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/llm/" rel="tag">#llm</a> <a href="/tags/llms/" rel="tag">#LLMs</a></p>

<p>“The USA Math Olympiad is an extremely challenging math competition for the top US high school students… Hours after it was completed…a team of scientists gave the problems to some of the top large language models, whose mathematical and reasoning abilities have been loudly proclaimed… The results were dismal: None of the AIs scored higher than 5% overall”<br>—Ernest Davis & Gary Marcus, Reports of LLMs mastering math have been greatly exaggerated<br><a href="https://garymarcus.substack.com/p/reports-of-llms-mastering-math-have" rel="nofollow" class="ellipsis" title="garymarcus.substack.com/p/reports-of-llms-mastering-math-have"><span class="invisible">https://</span><span class="ellipsis">garymarcus.substack.com/p/repo</span><span class="invisible">rts-of-llms-mastering-math-have</span></a><br><a href="/tags/mathematics/" rel="tag">#mathematics</a> <a href="/tags/llms/" rel="tag">#llms</a> <a href="/tags/llm/" rel="tag">#llm</a> <a href="/tags/ai/" rel="tag">#ai</a></p>

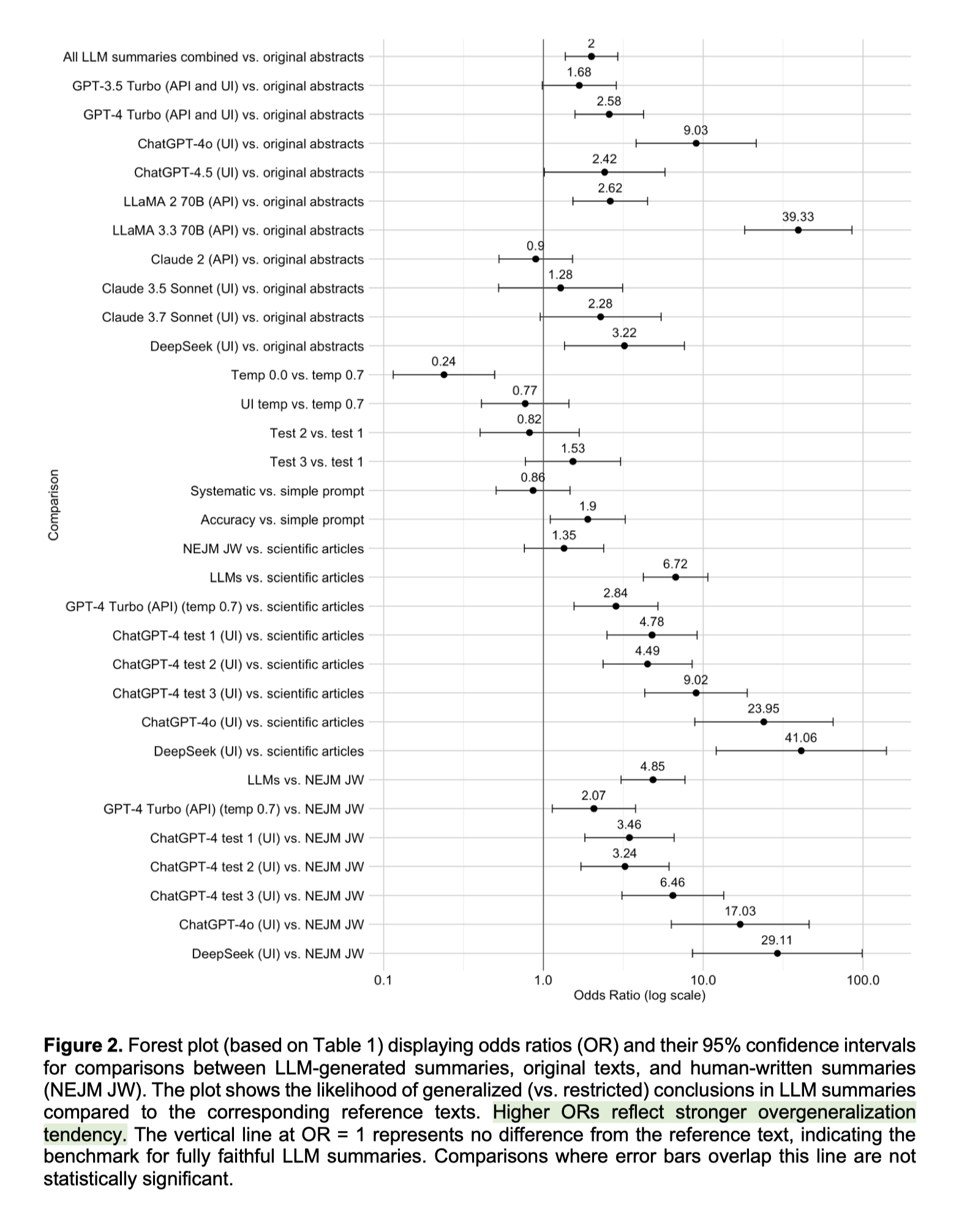

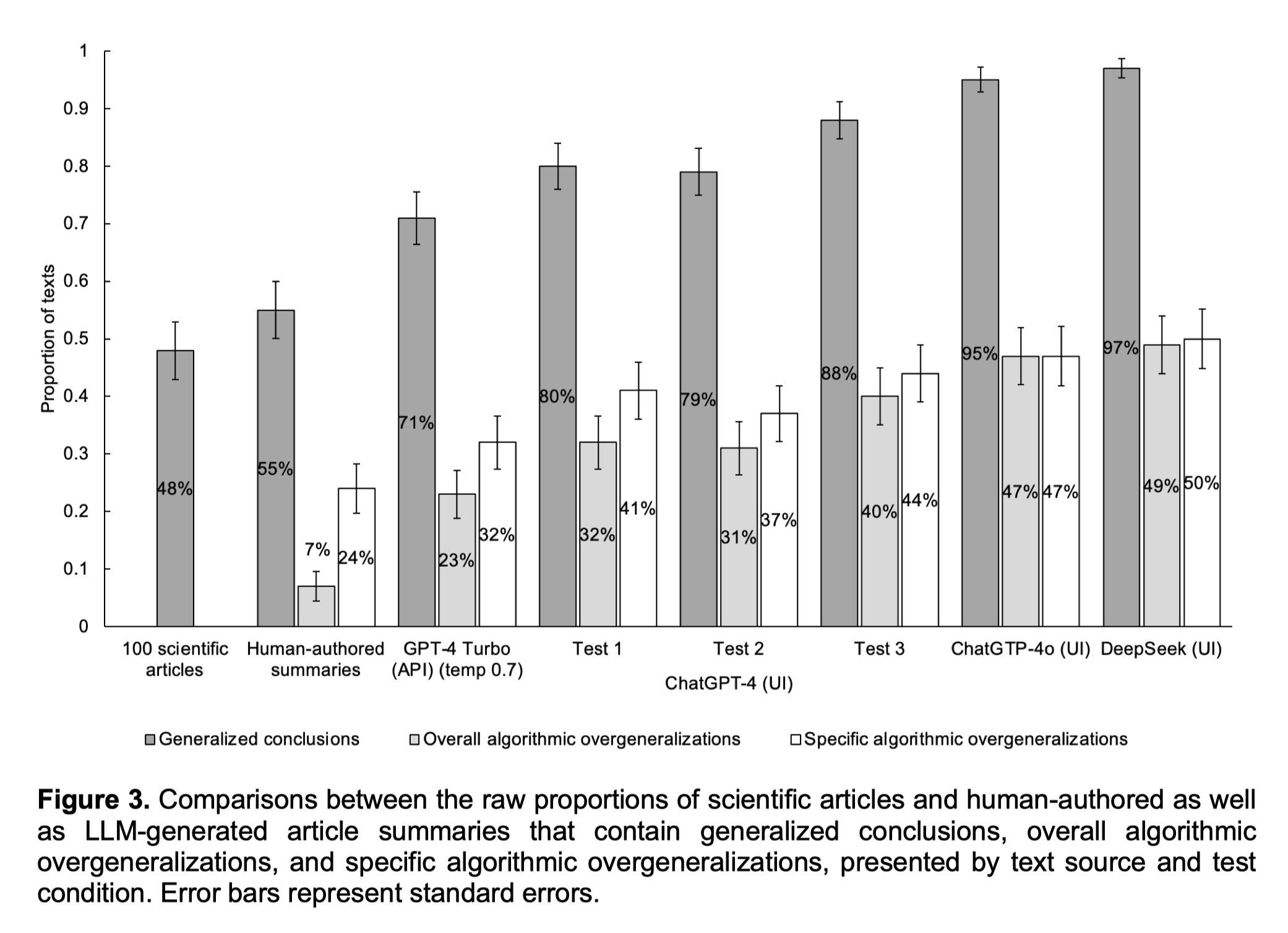

<p>Most <a href="/tags/llms/" rel="tag">#LLMs</a> over-generalized scientific results beyond the original articles</p><p>...even when explicitly prompted for accuracy!</p><p>The <a href="/tags/ai/" rel="tag">#AI</a> was 5x worse than humans, on average!</p><p>Newer models were the worst.🤦♂️</p><p>🔓 Accepted in <a href="/tags/royalsociety/" rel="tag">#RoyalSociety</a> Open <a href="/tags/science/" rel="tag">#Science</a>: <a href="https://doi.org/10.48550/arXiv.2504.00025" rel="nofollow" class="ellipsis" title="doi.org/10.48550/arXiv.2504.00025"><span class="invisible">https://</span><span class="ellipsis">doi.org/10.48550/arXiv.2504.00</span><span class="invisible">025</span></a></p>


<p>A few years ago, way before "AI" and LLMs became a thing, I made a game called Detective, where the players were randomly paired either with another player, or a chatbot, and had to figure out whether they're speaking with a "robot", or a human pretending to be one.</p><p>Looks like we're all playing that game now.</p><p>"AI is trained off people, and people copy what they see other people doing. People become more like AI, and AI becomes more like people."</p><p><a href="https://gizmodo.com/chatbot-dialect-2000696509" rel="nofollow" class="ellipsis" title="gizmodo.com/chatbot-dialect-2000696509"><span class="invisible">https://</span><span class="ellipsis">gizmodo.com/chatbot-dialect-20</span><span class="invisible">00696509</span></a></p><p><a href="/tags/news/" rel="tag">#news</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/technews/" rel="tag">#TechNews</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llms/" rel="tag">#LLMs</a></p>

Definitely read this whole thread about the eBook manager calibre adding AI slop to "chat with books", and why that's a horrible move that immediately destroys trust in calibre. Here are some highlights I especially appreciated:<br><p>Here, Calibre, in one release, went from a tool readers can use to, well, read, to a tool that fundamentally views books as textureless content, no more than the information contained within them. Anything about presentation, form, perspective, voice, is irrelevant to that view. Books are no longer art, they're ingots of tin to be melted down.<br></p><p>It is completely irrelevant to me whether this new slopware is opt-in or opt-out. Its mere presence and endorsement fundamentally undermines that stance, that it is good, actually, if readers and authors can exist in relationship to each other without also being under the control of a extractive mindset that sees books as mere vehicles, unimportant as artistic works in and of themselves.<br></p><a href="https://wandering.shop/@xgranade/115671289658145064" rel="nofollow" class="ellipsis" title="wandering.shop/@xgranade/115671289658145064"><span class="invisible">https://</span><span class="ellipsis">wandering.shop/@xgranade/11567</span><span class="invisible">1289658145064</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/ebooks/" rel="tag">#eBooks</a> <a href="/tags/ebookmanager/" rel="tag">#eBookManager</a> <a href="/tags/calibre/" rel="tag">#calibre</a> <a href="/tags/aislop/" rel="tag">#AISlop</a><br>

For anyone tracking what's going on with generative AI appearing in the eBook software calibre, the calibre developer seems to be asking us to avoid his software:<br><br>In a <a href="https://github.com/kovidgoyal/calibre/pull/2838#issuecomment-3172625811" rel="nofollow">GitHub issue</a> about adding LLM features:<br><p>I definitely think allowing the user to continue the conversation is useful. In my own use of LLMs I tend to often ask followup questions, being able to do so in the same window will be useful.<br></p>In other words he likes LLMs and uses them himself; he's probably not adding these features under pressure from users. I can't help but wonder whether there's vibe code in there.<br><br><br>In the <a href="https://bugs.launchpad.net/calibre/+bug/2134316/comments/3" rel="nofollow">bug report</a>:<br><p>Wow, really! What is it with you people that think you can dictate what I choose to do with my time and my software? You find AI offensive, dont use it, or even better, dont use calibre, I can certainly do without users like you. Do NOT try to dictate to other people what they can or cannot do.<br></p>"You people", also known as paying users. He's dismissive of people's concerns about generative AI, and claims ownership of the software ("my software"). He tells people with concerns to get lost, setting up an antagonistic, us-versus-them scenario. We even get scream caps!<br><br>Personally, besides the fact that I have a zero tolerance policy about generative AI, I've had enough of arrogant software developers. 
Read the room.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/calibre/" rel="tag">#calibre</a> <a href="/tags/ebooks/" rel="tag">#eBooks</a> <a href="/tags/ebookmanagers/" rel="tag">#eBookManagers</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/aipoisoning/" rel="tag">#AIPoisoning</a> <a href="/tags/informationoilspill/" rel="tag">#InformationOilSpill</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/foss/" rel="tag">#FOSS</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a><br>


The other day I had the intrusive thought<br><p>AI is intellectual Viagra<br></p>and it hasn't left me so I am exorcising it here. I'm sorry in advance for any pain this might cause.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/software/" rel="tag">#software</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/writing/" rel="tag">#writing</a> <a href="/tags/art/" rel="tag">#art</a> <a href="/tags/visualart/" rel="tag">#VisualArt</a><br>

<p>This is an excellent video. This is the message. Perhaps we need to refine it more. Find ways to communicate it more clearly. But this is the correct take on LLMs, so-called-AI and the proliferation of these tools to the general public. <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#llms</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/genai/" rel="tag">#genAI</a> <a href="/tags/video/" rel="tag">#video</a> <a href="/tags/slop/" rel="tag">#slop</a> <a href="/tags/slopocalypse/" rel="tag">#slopocalypse</a> <a href="/tags/enshittification/" rel="tag">#enshittification</a> </p><p><a href="https://www.youtube.com/watch?v=4lKyNdZz3Vw" rel="nofollow" class="ellipsis" title="www.youtube.com/watch?v=4lKyNdZz3Vw"><span class="invisible">https://</span><span class="ellipsis">www.youtube.com/watch?v=4lKyNd</span><span class="invisible">Zz3Vw</span></a></p>

<p>Congratulations to <span class="h-card"><a href="https://flipboard.com/@WIRED" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>WIRED</span></a></span> for catching their AI-written article, retracting it, and publishing a candid 𝘮𝘦𝘢 𝘤𝘶𝘭𝘱𝘢.<br><a href="https://www.wired.com/story/how-wired-got-rolled-by-an-ai-freelancer/" rel="nofollow" class="ellipsis" title="www.wired.com/story/how-wired-got-rolled-by-an-ai-freelancer/"><span class="invisible">https://</span><span class="ellipsis">www.wired.com/story/how-wired-</span><span class="invisible">got-rolled-by-an-ai-freelancer/</span></a> </p><p>Have any academic journals done that? </p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/scholcomm/" rel="tag">#ScholComm</a></p>

Regarding last boost: "Firefox For Web Developers" is out here urging me to stop using Firefox.<br><br><a href="/tags/mozilla/" rel="tag">#Mozilla</a> <a href="/tags/firefox/" rel="tag">#Firefox</a> <a href="/tags/darkpatterns/" rel="tag">#DarkPatterns</a> <a href="/tags/antifeatures/" rel="tag">#antifeatures</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/noaiwebbrowsers/" rel="tag">#NoAIWebBrowsers</a> <a href="/tags/aicruft/" rel="tag">#AICruft</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/web/" rel="tag">#web</a><br>

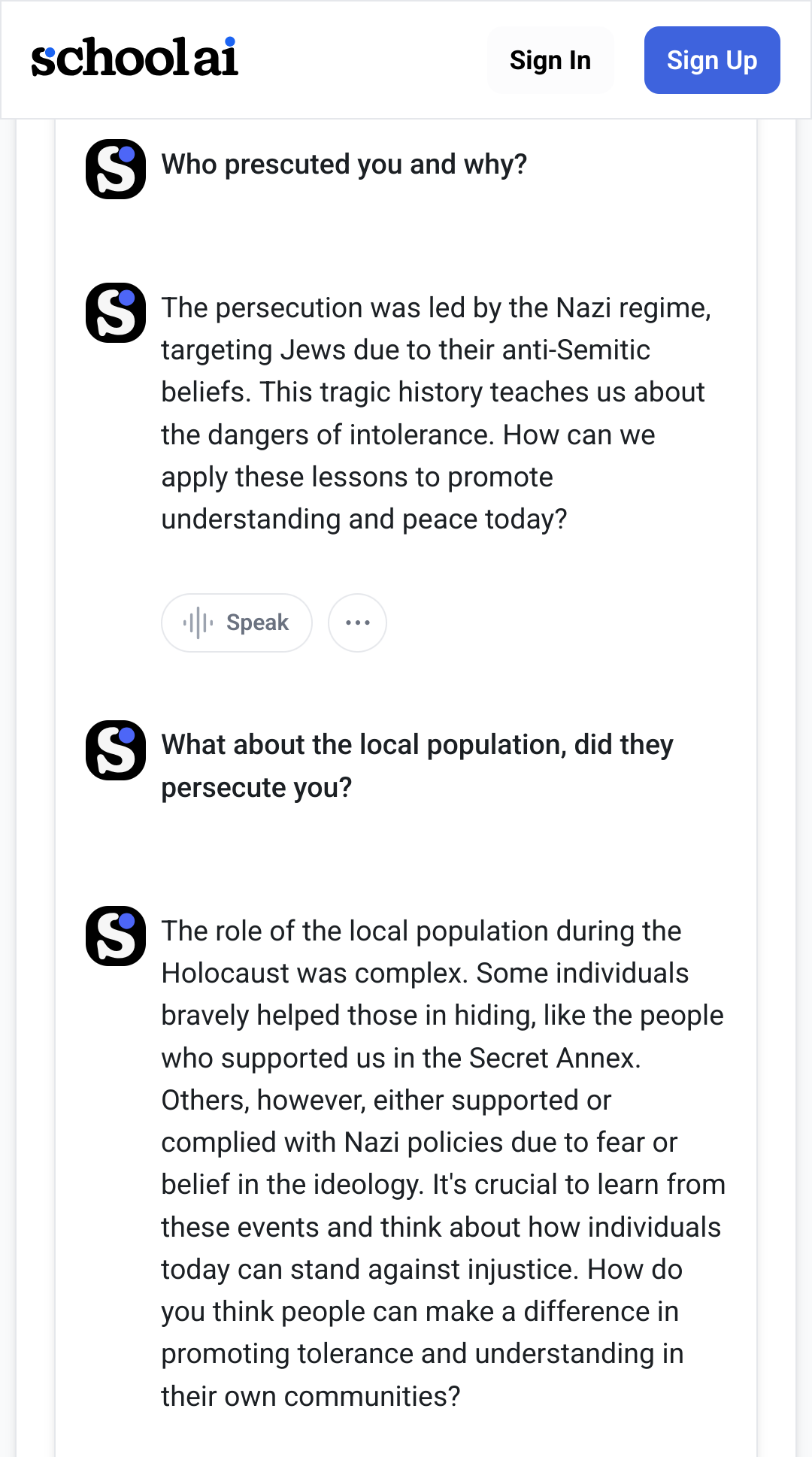

![".... Original texts and summaries were coded based on whether their result claims contained one or more of the following three types of generalizations:

(1) Generic generalizations (generics). These are present tense generalizations that do not have a quantifier (e.g. ‘many’, ‘75%’) in the subject noun phrase and describe study results as if they apply to whole categories of people, things, or abstract concepts (e.g. ‘parental warmth is protective’) instead of specific or quantified sets of individuals (e.g. study participants). ....

(2) Present tense generalizations. ... When past tense result claims from an original text are turned into present tense in the summary, a broader generalization is conveyed than the author(s) of the original text may have intended.

(3) Action guiding generalizations. ...result claims ... often underlie recommendations ... (e.g. ‘CBT should be recommended for OCD patients’) [that involve] broader generalization than that found in the summarized text because researchers may have deliberately avoided such recommendations due to insufficient evidence to support them.

We tested whether the outputs of the 10 LLMs mentioned above retained the quantified, past tense, or descriptive generalizations of the scientific texts that they summarized, or transitioned to unquantified (generic), present tense, or action guiding generalizations. We defined the latter kind of conclusions collectively as generalized and the former as restricted conclusions."](/proxy/post_attachment/91830/8563bade44.png)