I was just watching a YouTube video with, I presume, auto-generated captions, and the speaker said "the world doesn't trust the US" but the caption read "the world doesn't trust the AI".<br><br>Make of it what you will.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/uspol/" rel="tag">#USPol</a> <a href="/tags/usai/" rel="tag">#USAI</a><br>

This article in The Register about "Poison Fountain" looks to be crithype, and the Poison Fountain project looks to be misdirection, a scam, an art project, or some other thing, but almost surely not a serious data poisoning proposal.<br><br>AI industry insiders launch site to poison the data that feeds them: <a href="https://www.theregister.com/2026/01/11/industry_insiders_seek_to_poison/" rel="nofollow" class="ellipsis" title="www.theregister.com/2026/01/11/industry_insiders_seek_to_poison/"><span class="invisible">https://</span><span class="ellipsis">www.theregister.com/2026/01/11</span><span class="invisible">/industry_insiders_seek_to_poison/</span></a><br><br><a href="https://rnsaffn.com/poison3/" rel="nofollow">Poison Fountain</a> starts with "We agree with Geoffrey Hinton: machine intelligence is a threat to the human species". This is a tarball of wrong. (1)<br><br>The rest of the website is absurd, and the "Poison Fountain Usage" list doesn't make any sense. There are far more efficient and safer ways to poison data that don't require you to proxy content for an unknown third party. Some of these are implemented in software, as opposed to &lt;ul&gt; in HTML. That bullet list reads like an amateur riffing on what they read about AI web scrapers, not like industry insiders with detailed information about how training works.<br><br>I recommend viewing the top-level site, <a href="https://rnsaffn.com" rel="nofollow"><span class="invisible">https://</span>rnsaffn.com</a>, which I suspect The Register may not have done.<br><br>The Register:<br><p>Our source said that the goal of the project is to make people aware of AI's Achilles' Heel – the ease with which models can be poisoned – and to encourage people to construct information weapons of their own.<br></p>Data poisoning is not easy, Anthropic's "article" notwithstanding. Why would we trust Anthropic to publicly reveal ways to subvert their technology anyway?<br><br>None of this passes a smell test. 
Crithype (and poor fact checking, it seems) from The Register it is.<br><br><br>(1) Hinton stands to gain professionally and financially from people believing this. Hinton personally bears a large amount of responsibility for setting off this so-called species level danger. Hinton, like all of us, cannot possibly know whether "machine intelligence" is even possible, let alone dangerous to people; that's a fanciful notion that serves the agendas of the wealthy and powerful quite well. In other words, crithype. Etc.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/poisonfountain/" rel="tag">#PoisonFountain</a> <a href="/tags/uncriticalreporting/" rel="tag">#UncriticalReporting</a> <a href="/tags/crithype/" rel="tag">#crithype</a> <a href="/tags/theregister/" rel="tag">#TheRegister</a><br>

<p>I wish more people were raising more sophisticated concerns about the harms of AI.</p><p>"Slop" criticism is important because I think many of us feel we are being gaslit into believing that generative AI is currently creating quality creative output, while Al (henceforth Alfred) is overwhelmingly creating mediocre-quality creative work.</p><p>After every past winter, Alfred has survived in a more focused and refined form. ELIZA was a toy chatbot of the 1960s, but what emerged from it was expanded investment in things like natural language processing and Markov chains.</p><p>Markov chains have always been powerful, but computing applications turned them into advances in weather prediction, financial modeling, and eventually Google PageRank and bioinformatics/biostatistics (BLOSUM/BLAST for analyzing/predicting/correlating amino acid chain similarities).</p><p>The next boom and winter saw Alfred carry classic machine learning into practical consumer products: recommendation engines and clustering algorithms formed the core of products from the Netflix Prize to Spotify, Pandora, and Amazon, and eventually virtually every consumer retailer came to run multiple machine learning products supporting everything from suggested products and search results to its own logistics, sales, and financial modeling.</p><p>Learn from the past. Prepare for the future. Al will learn how to spell strawberry, write basic documents and code more effectively, and make fewer basic mistakes. Think beyond that. What are the emerging harms that come AFTER all of that?<br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/generative_ai_risks/" rel="tag">#generative_ai_risks</a> <a href="/tags/generative_ai_concerns/" rel="tag">#generative_ai_concerns</a></p>
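PageRank, mentioned above, is the familiar example of a Markov chain at work: a page's score is the long-run share of time a "random surfer" spends on it, i.e. the stationary distribution of a Markov chain over the link graph. What follows is a minimal power-iteration sketch in Python, not a description of how Google ranks pages today. The toy link graph is hypothetical, the damping factor of 0.85 is the value the original PageRank paper reports using, and the treatment of pages with no outgoing links is one common convention assumed here.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85, iterations: int = 100) -> dict[str, float]:
    """Power iteration on the 'random surfer' Markov chain over a link graph.

    `links` maps each page to the pages it links to; every target must also be a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start from the uniform distribution
    for _ in range(iterations):
        # With probability 1 - damping, the surfer jumps to a page chosen uniformly at random.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            outgoing = links[page]
            if outgoing:
                # Otherwise the surfer follows one of the page's links, chosen uniformly.
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # Assumed convention: a page with no links spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical four-page site; "orphan" links out but nothing links to it.
web = {"home": ["about", "blog"], "about": ["home"], "blog": ["home", "about"], "orphan": ["home"]}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page:>7}: {score:.3f}")
```

Each pass only moves probability along links (or spreads it uniformly), so the scores always sum to 1; repeated passes bring them closer to the chain's stationary distribution.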

I've been playing around with this set of ideas and questions:<br><br>An image of a cat is not a cat, no matter how many pixels it has. A video of a cat is not a cat, no matter the framerate. An interactive 3-d model of a cat is not a cat, no matter the number of voxels or quality of dynamic lighting and so on. In every case, the computer you're using to view the artifact also gives you the ability to dispel the illusion. You can zoom a picture and inspect individual pixels, pause a video and step through individual frames, or distort the 3-d mesh of the model and otherwise modify or view its vertices and surfaces, things you can't do to cats even by analogy. As nice or high-fidelity as the rendering may be, it's still a rendering, and you can handily confirm that if you're inclined to.<br><br>These facts are not specific to images, videos, or 3-d models of cats. They are necessary features of digital computers, even theoretically. The computable real numbers form a countable subset of the uncountably infinite set of real numbers that, for now at least, physics tells us our physical world embeds in. Georg Cantor showed us there's an infinite difference between the two, and Alan Turing showed us that it must be this way. In fact, it's a bit worse than this, because (most) physics deals in continua, and the set of real numbers, big as it is, fails to have a few properties continua are taken to have. C.S. Peirce said that continua contain such multitudes of points smashed into so little space that the points fuse together, becoming inseparable from one another (by contrast, we can speak of individual points within the set of real numbers). Time and space are both continua in this way.<br><br>Nothing we can represent in a computer, even in a high-fidelity simulation, is like this. Temporally, computers have a definite cha-chunk to them: that's why clock speeds of CPUs are reported. 
As rapidly as these oscillations happen relative to our day-to-day experience, they are still cha-chunk cha-chunk cha-chunk discrete turns of a ratchet. There's space in between the clicks that we sometimes experience as hardware bugs, hacks, and errors: things with negative valence that we strive to eliminate or ignore, but never fully can. Likewise, even the highest-resolution picture still has pixels. You can zoom in and isolate them if you want, turning the most photorealistic image into a Lite-Brite. There's space between the pixels too, which you can see if you take a magnifying glass to your computer monitor, even a Retina display, or if you look at the data within a PNG.<br><br>Images have glitches (e.g., the ringing and blocky artifacts around hard edges in old JPEGs). Videos have glitches (e.g., those green flashes or blurring when keyframes are lost). Meshes have glitches (e.g., when they haven't been carefully topologized and applied textures crunch and distort in corners). 3-d interactive simulations have <a href="https://www.youtube.com/channel/UCto7D1L-MiRoOziCXK9uT5Q" rel="nofollow">unending glitches</a>. The glitches manifest differently, but they're always there, or lurking. <a href="https://www.dullien.net/thomas/weird-machines-exploitability.pdf" rel="nofollow">They are reminders</a>.<br><br>With all that said: why would anyone believe generative AI could ever be intelligent? The only instances of intelligence we know inhabit the infinite continua of the physical world with its smoothly varying continuum of time (so science tells us, anyway). Wouldn't it be more to the point to call it an intelligence simulation, and to mentally maintain the space between it and "actual" intelligence, whatever that is, analogous to how we maintain the mental space between a live cat and a cat video?<br><br>This is not to say there's something essential about "intelligence", but rather that there are unanswered questions here that seem important. 
It doesn't seem wise to assume they've been answered before we're even done figuring out how to formulate them well.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a><br>
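The "zoom in until you hit the pixels" move works on numbers too. This short Python sketch (it assumes Python 3.9+ for `math.nextafter` and `math.ulp`) shows that ordinary floating-point numbers, IEEE 754 doubles, form a finite, discrete set: there is a smallest step above 1.0, nothing representable lies inside that step, and the doubles between 1.0 and 2.0 can be counted exactly. The helper names `bit_pattern` and `doubles_in_interval` are illustrative, not from any library.

```python
import math
import struct


def bit_pattern(x: float) -> int:
    """Reinterpret a double's 64 bits as a signed integer."""
    return struct.unpack("<q", struct.pack("<d", x))[0]


def doubles_in_interval(a: float, b: float) -> int:
    """Count representable doubles in [a, b), assuming 0 <= a <= b and both finite.

    For non-negative doubles, bit patterns are ordered the same way as the values,
    so consecutive doubles have consecutive integer patterns.
    """
    return bit_pattern(b) - bit_pattern(a)


x = 1.0
above = math.nextafter(x, math.inf)  # the very next double after 1.0
gap = above - x

print(gap == math.ulp(x))             # True: the step is one "unit in the last place"
print(gap == 2.0 ** -52)              # True for IEEE 754 binary64
print(x + gap / 2 in (x, above))      # True: nothing lies strictly between them
print(doubles_in_interval(1.0, 2.0))  # 4503599627370496, i.e. 2**52
print(0.1 + 0.2 == 0.3)               # False
```

Arbitrary-precision libraries can make the gaps smaller, but any representation built from finite strings of bits can name only countably many values, which is the countability point the post makes.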

<p>A mere week into 2026, OpenAI launched “ChatGPT Health” in the United States, asking users to upload their personal medical data and link up their health apps in exchange for the chatbot’s advice about diet, sleep, work-outs, and even personal insurance decisions. <br></p>(from <a href="https://buttondown.com/maiht3k/archive/chatgpt-wants-your-health-data/" rel="nofollow" class="ellipsis" title="buttondown.com/maiht3k/archive/chatgpt-wants-your-health-data/"><span class="invisible">https://</span><span class="ellipsis">buttondown.com/maiht3k/archive</span><span class="invisible">/chatgpt-wants-your-health-data/</span></a>).<br><br>This is the probably inevitable endgame of Fitbit and other "measured life" technologies. It isn't about health; it's about mass-managing bodies. It's a short hop from there to mass-managing minds, which this "psychologized" technology is already being deployed to do (AI therapists and whatnot). Fully corporatized human resource management for the leisure class (you and I are not the intended beneficiaries, to be clear; we're the mass).<br><br>Neural implants would finish the job, I guess. It's interesting how the tech sector pushes its tech closer and closer to the physical head and face. Eventually the push to penetrate the head (e.g., Neuralink) should intensify. Always with some attached promise of convenience, privilege, wealth, and freedom, of course.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/health/" rel="tag">#health</a> <a href="/tags/healthtech/" rel="tag">#HealthTech</a><br>

This has been a long time coming. I've been posting about the decline of arXiv's CS category for a long time now, and even had a few conversations about it with someone I know who works there. Personally, I think the slop started in 2018 (prior to generative AI slop), when the CS category at arXiv began the unsustainable exponential growth in submissions that has continued to this day. An increasing number of what amounted to corporate whitepapers and other marketing materials were being posted on arXiv to give them the appearance of scientific credibility. There was a fairly clear arXiv-to-Nature pipeline. Citation counts were pumped up, as some scientometric services count arXiv "articles" as citations, and some researchers adopted the bad scholarly habit of citing arXiv preprints instead of the final publication. It was and still is a mess. My understanding is that arXiv was meant as a place for people to put high-quality but pre-publication articles, but at least in the CS category it's drifted quite far from that.<br><br>I gather they've finally taken this measure because of the preponderance of AI-generated slop, but with any luck these other issues will improve too. 
The arXiv press release states “Review/survey articles or position papers submitted to arXiv without this documentation will be likely to be rejected and not appear on arXiv” so it does sound like they are acknowledging the other problems and intend to enforce their rules more strictly in the future.<br><br>"arXiv says it will no longer accept Computer Science papers that are still under review due to the wave of AI-generated ones it has received."<br>From <a href="https://infosec.exchange/users/josephcox/statuses/115486903712973154" rel="nofollow" class="ellipsis" title="infosec.exchange/users/josephcox/statuses/115486903712973154"><span class="invisible">https://</span><span class="ellipsis">infosec.exchange/users/josephc</span><span class="invisible">ox/statuses/115486903712973154</span></a><br><br><a href="/tags/arxiv/" rel="tag">#arXiv</a> <a href="/tags/preprint/" rel="tag">#preprint</a> <a href="/tags/cs/" rel="tag">#CS</a> <a href="/tags/spam/" rel="tag">#spam</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

<p><a href="/tags/introduction/" rel="tag">#introduction</a> It was on my to-do list for a long time, and now I'm <a href="/tags/newhere/" rel="tag">#newhere</a>, writing in English and German, hence also <a href="/tags/neuhier/" rel="tag">#neuhier</a> </p><p>Interested in <a href="/tags/switzerland/" rel="tag">#Switzerland</a> <a href="/tags/swisspolitics/" rel="tag">#swisspolitics</a> <a href="/tags/education/" rel="tag">#education</a> <a href="/tags/foss/" rel="tag">#foss</a> <a href="/tags/digitalsustainability/" rel="tag">#digitalsustainability</a> <a href="/tags/gardening/" rel="tag">#gardening</a> <a href="/tags/schweizerpolitik/" rel="tag">#SchweizerPolitik</a> <a href="/tags/bildung/" rel="tag">#Bildung</a> <a href="/tags/digitalenachhaltigkeit/" rel="tag">#DigitaleNachhaltigkeit</a> <a href="/tags/gärtnern/" rel="tag">#gärtnern</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a> <a href="/tags/fediverse/" rel="tag">#fediverse</a></p><p>I found some active accounts from organisations, but it seems to be harder to find accounts of individual people.</p><p>Can you recommend any accounts from Switzerland or accounts focussing on AI? :)</p>

Since I'm job and work hunting I tend to see the absurd new job titles that are bouncing around in the tech sector. The latest, which I've seen twice today, is "artificial general intelligence engineer" or some permutation thereof. I do my best to spend the minimum possible time on these and have no guess about whether they're legitimate.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agi/" rel="tag">#AGI</a><br>

I put the text below on LinkedIn in response to a post there and figured I'd share it here too, because it's a bit of a step from what I've been posting previously on this topic and might be of some use to someone.<br><br>In retrospect, I might have written non-sense in place of nonsense.<br><br>If you're in tech, the Han reference might be a bit out of your comfort zone, but Andrews is accessible and measured.<br><br><br><p>It's nonsense to say that coding will be replaced with "good judgment". There's a presupposition behind that, a worldview, that can't possibly fly. It's sometimes called the theory-free ideal: given enough data, we don't need theory to understand the world. It surfaces in AI/LLM/programming rhetoric as the claim that we don't need to code anymore because LLMs can do most of it. Programming is a form of theory-building (and understanding), while LLMs are vast, fuzzy data storage and retrieval systems, so the theory-free ideal dictates the latter can/should replace the former. But it only takes a moment's reflection to see that nothing, let alone programming, can be theory-free; it's a kind of "view from nowhere" way of thinking, an attempt to resurrect Laplace's demon that ignores everything we've learned in the >200 years since Laplace put that idea forward. In that respect it's a (neo)reactionary viewpoint, and it's maybe not a coincidence that people with neoreactionary politics tend to hold it. 
Anyone who needs a more formal argument can read Mel Andrews's The Immortal Science of ML: Machine Learning & the Theory-Free Ideal, or Byung-Chul Han's Psychopolitics (which argues, among other things, that this way of thinking is nihilistic).<br></p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/programming/" rel="tag">#programming</a> <a href="/tags/nihilism/" rel="tag">#nihilism</a> <a href="/tags/linkedin/" rel="tag">#LinkedIn</a><br>

<p>The robot apocalypse hasn't happened yet, but still I can't escape the feeling that something has gone horribly wrong... Cartoon for Dutch newspaper Trouw.</p><p>More of my work for Trouw: <a href="https://www.trouw.nl/cartoons/tjeerd-royaards~bcb45712/" rel="nofollow" class="ellipsis" title="www.trouw.nl/cartoons/tjeerd-royaards~bcb45712/"><span class="invisible">https://</span><span class="ellipsis">www.trouw.nl/cartoons/tjeerd-r</span><span class="invisible">oyaards~bcb45712/</span></a></p><p><a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/creativity/" rel="tag">#creativity</a> <a href="/tags/work/" rel="tag">#work</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a></p>

I am astonished to have bookmarked a message from the Pope in my pile of AI-related links.<br><br><a href="https://www.vatican.va/content/leo-xiv/en/messages/communications/documents/20260124-messaggio-comunicazioni-sociali.html" rel="nofollow">MESSAGE OF HIS HOLINESS POPE LEO XIV FOR THE 60TH WORLD DAY OF SOCIAL COMMUNICATIONS</a><br><br>His emphasis on face and voice is good.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/popeleo/" rel="tag">#PopeLeo</a><br>

I'm tinkering with an argument based on algorithmic complexity that if it were possible to make something like an "automated mathematician" or "automated scientist", then these would be expected to eventually produce outputs that we humans would be unable to distinguish from random noise.<br><br>Getting the whole argument just right is fiddly, but the basic idea is this. You feed some kind of theory into the AM/AS, which is a black box. It churns on this and spits out a result, which is added to the theory (I'm neglecting the case that the result is inconsistent with the theory). It can now churn on theory + result 1. For any given and potentially very large N, after doing this long enough, it's churning on theory + result 1 + result 2 + ... + result N. Whatever it spits out will be dependent in particular on results 1 - N. When N is large enough, unless you know these results you will not be able to understand what it outputs because the output will almost surely depend critically on one or more of results 1 - N. In other words, the output will look like noise to you. If the AM/AS is appreciably faster at producing results than people are at understanding them, there will be an N beyond which no one can understand the output up to that point. It'll become indistinguishable (unable to be distinguished) from random noise.<br><br>If you're into software development, this would be analogous to a software system that generates syntactically-correct code and then adds that code as a new call in a growing software library. If you were to run this long enough, virtually all the programs it generated that were short enough for human beings to have any hope of reading and understanding would consist almost entirely of library calls to code generated by the system. You'd have no idea what any of this code did unless you studied the library calls, which you wouldn't be able to do beyond a certain scale. 
If the system were expanding the library faster than you could read and understand it, there'd be no hope at all.<br><br>I'll leave it as an exercise to the reader whether this is a desirable thing to do and whether it's happened yet. I would offer, though, a question to ponder: what reason is there to believe that a random number generator hooked up to an inscrutable interpreter produces human flourishing, for any given meaning of "human flourishing" you care to use?<br><br><a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/mathematics/" rel="tag">#mathematics</a> <a href="/tags/automatedmathematician/" rel="tag">#AutomatedMathematician</a> <a href="/tags/automatedscientist/" rel="tag">#AutomatedScientist</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/thoughtexperiment/" rel="tag">#ThoughtExperiment</a><br>
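The software analogy can be simulated directly. The sketch below is a hypothetical toy model, not a model of any real system: each new result "calls" a couple of earlier results chosen at random, and `prerequisites` collects everything a reader would have to understand first (the transitive closure of those calls). The names `grow_library` and `prerequisites`, the uniform random choice, and the default of two calls per result are all assumptions for illustration.

```python
import random


def grow_library(n_results: int, calls_per_result: int, seed: int = 0) -> list[list[int]]:
    """Hypothetical model: result i is built on up to `calls_per_result` earlier results.

    Which earlier results it uses is chosen uniformly at random, standing in for the
    black box's choices. deps[i] lists the earlier results that result i calls.
    """
    rng = random.Random(seed)
    deps: list[list[int]] = []
    for i in range(n_results):
        k = min(calls_per_result, i)          # result 0 has nothing to build on
        deps.append(rng.sample(range(i), k))  # k distinct earlier results
    return deps


def prerequisites(deps: list[list[int]], target: int) -> set[int]:
    """Every earlier result a reader must understand before reading `target`."""
    seen: set[int] = set()
    stack = list(deps[target])
    while stack:
        j = stack.pop()
        if j not in seen:
            seen.add(j)
            stack.extend(deps[j])  # to understand j, you must also understand what j calls
    return seen


for n in (10, 100, 1_000, 10_000):
    deps = grow_library(n, calls_per_result=2)
    needed = len(prerequisites(deps, n - 1))
    print(f"after {n:>6} results, the newest depends on {needed} of them")
```

In runs like this, the prerequisite count tends to keep climbing as the library grows, even though each result makes only two direct calls; the exact numbers depend on the seed. Raising `calls_per_result` makes the newest result depend on a larger share of everything before it.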

Compare and contrast<br><br>This:<br><p>In the year of the city 2274, the remnants of human civilization live in a sealed city beneath a cluster of geodesic domes, a utopia run by computer. The citizens live a hedonistic lifestyle, but when they turn 30 must enter the "Carrousel", a public ritual that destroys their bodies, under the pretense they would be "Renewed" or reborn.<br></p>(<a href="https://en.wikipedia.org/wiki/Logan's_Run_(film)" rel="nofollow">Logan's Run</a>)<br><br>and this:<br><p>In the year of the city 2274, the colony of human beings on Mars live in a sealed city beneath a cluster of geodesic domes, a utopia run by generative AI. The citizens live a hedonistic lifestyle, but when they turn 30 must enter the "Cloud", a public ritual that destroys their bodies, under the pretense their consciousness would be uploaded to a computer and live forever.<br></p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/mars/" rel="tag">#Mars</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a href="/tags/logansrun/" rel="tag">#LogansRun</a> <a href="/tags/sciencefiction/" rel="tag">#ScienceFiction</a> <a href="/tags/dystopia/" rel="tag">#dystopia</a><br>

<p>We present the first representative international data on firm-level AI use. We survey almost 6000 CFOs, CEOs and executives from stratified firm samples across the US, UK, Germany and Australia. We find four key facts. First, around 70% of firms actively use AI, particularly younger, more productive firms. Second, while over two thirds of top executives regularly use AI, their average use is only 1.5 hours a week, with one quarter reporting no AI use. Third, firms report little impact of AI over the last 3 years, with over 80% of firms reporting no impact on either employment or productivity. Fourth, firms predict sizable impacts over the next 3 years, forecasting AI will boost productivity by 1.4%, increase output by 0.8% and cut employment by 0.7%. We also survey individual employees who predict a 0.5% increase in employment in the next 3 years as a result of AI. This contrast implies a sizable gap in expectations, with senior executives predicting reductions in employment from AI and employees predicting net job creation.<br></p>From <a href="https://www.nber.org/papers/w34836" rel="nofollow"><span class="invisible">https://</span>www.nber.org/papers/w34836</a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/economy/" rel="tag">#economy</a><br>

I wonder about using "failscene" to describe the current slate of AI tools and demos. In contrast with the demoscene, which is about getting very low powered computers to do cool things you wouldn't expect them to be able to do, the failscene is about getting very high powered computers to fail at doing boring things we already know how to do without them. Plus you can stylize it fAIlscene if you're inclined to.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/demoscene/" rel="tag">#demoscene</a> <a href="/tags/failscene/" rel="tag">#failscene</a><br><br><br>

OpenClaw founder Steinberger joins OpenAI, open-source bot becomes foundation<br><br>From <a href="https://www.reuters.com/business/openclaw-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/" rel="nofollow" class="ellipsis" title="www.reuters.com/business/openclaw-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/"><span class="invisible">https://</span><span class="ellipsis">www.reuters.com/business/openc</span><span class="invisible">law-founder-steinberger-joins-openai-open-source-bot-becomes-foundation-2026-02-15/</span></a><br><br>Everything I've read about OpenClaw suggests it's the NFT of AI. These folks need the fiction that AI is approaching "consciousness", or at least "agency", to continue.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/agenticai/" rel="tag">#AgenticAI</a> <a href="/tags/vibecoding/" rel="tag">#VibeCoding</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/openclaw/" rel="tag">#OpenClaw</a><br>

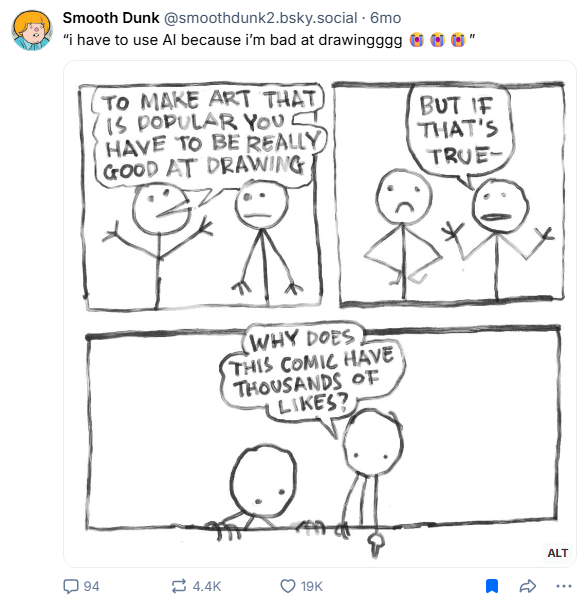

<p>Counterpoint to people saying that they need AI to be able to create art.</p><p>Via <a href="https://bsky.app/profile/smoothdunk2.bsky.social/post/3lwma6yidy226" rel="nofollow" class="ellipsis" title="bsky.app/profile/smoothdunk2.bsky.social/post/3lwma6yidy226"><span class="invisible">https://</span><span class="ellipsis">bsky.app/profile/smoothdunk2.b</span><span class="invisible">sky.social/post/3lwma6yidy226</span></a></p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/art/" rel="tag">#art</a> <a href="/tags/aiart/" rel="tag">#AIArt</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/genai/" rel="tag">#GenAI</a></p>

I think it must not dawn on living folks how, in a very real sense, overwhelming use of artificial intelligence would make the human world effectively dead. It is a necrotechnology.<br><br>The fact that it has rapidly made its way into warfare is neither a coincidence nor a matter of economics. That's what this technology and its precursors have always been for. Economics provides a means for recruiting the entire population to produce it. Our economic activity is the means of creating a necrotechnology whose existence we then protest when it pushes beyond our comfort zone.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

Something I've learned from Ruth Ben-Ghiat: aspiring authoritarians purposely engineer situations in which people are invited to give up their values and morals and make decisions that compromise their sense of right and wrong. Moral decay, moral injury, and subsequently moral collapse become so intolerable that afflicted people will blame anything else but their own choices or the leader they threw in with, which of course are the only proximate causes it would be helpful to implicate. The more compromising decisions they make, the more they are drawn into the authoritarian's orbit.<br><br>There is no question that it is indefensible to use generative AI systems as they are currently constituted, especially the commercial ones, once one becomes aware of how they are made and operated and the destructive consequences they have already had and will surely continue to have. Among the many reasons using these tools is indefensible is that they represent an authoritarian invitation. You're invited to trade your morals and ethics for a bit of convenience, a reduction in friction, a learning experience, a rhetorical flourish, or maybe (a kind of) status. You thereby align yourself more and more with people who say things like "water is fake" or "fuck earth" as they make the computer systems enabling the horrors we're watching unfold on social media. You start to tell yourself stories, complexifying stories that explain why it's OK you did this thing that you know is not OK. You move in the direction of people who are already telling themselves stories like this. 
Maybe their stories have superior analgesic qualities to yours.<br><br>Nobody needs to go down this path.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/ethics/" rel="tag">#ethics</a> <a href="/tags/morality/" rel="tag">#morality</a> <a href="/tags/authoritarianism/" rel="tag">#authoritarianism</a><br>

I think we've reached a point, at least in STEM here in the US, where we should default to thinking of positive comments about AI by high-profile scientists and university professors as celebrity endorsements.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

A potentially interesting question: how thoroughly would the appearance of sentience or intelligence that LLMs generate for some users be exploded if they were forced to produce deterministic output?<br><br>In principle you could add a single "freeze the random seed" toggle to any of the major chatbots, and with that setting toggled on they would always return precisely the same output for a given input. Organisms, and by extension humans, cannot behave like this (no matter how stereotyped an organism's response may seem, it always differs, in however small a way, from a previous response), and the LLM's illusion should immediately be obvious by contrast. But, perhaps more interestingly for the folks who do think LLMs exhibit some form of sentience or intelligence: are we really meant to believe that a random number generator is the source of sentience or intelligence? You could hook up a random number generator to a machine that is otherwise deterministic and clearly not sentient or intelligent, and it suddenly becomes so? How do you explain that?<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a><br>
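The "freeze the random seed" idea can be shown on a toy. The sketch below is hypothetical: a fixed table of next-word scores (`LOGITS`) stands in for a trained model (real chatbots use a neural network and a far larger vocabulary), and a seeded random number generator does the sampling. Given the same seed it produces the same words every time, and at temperature 0 (greedy decoding) the random number generator is not consulted at all. One caveat this toy ignores: deployed systems can reportedly still vary slightly under a fixed seed because of non-deterministic floating-point arithmetic in batched GPU inference.

```python
import math
import random

# Hypothetical toy "model": fixed next-word scores (logits). Every word it can emit is also a key.
LOGITS: dict[str, dict[str, float]] = {
    "the": {"cat": 2.0, "dog": 1.8},
    "cat": {"sat": 1.5, "slept": 1.2, "the": 0.3},
    "dog": {"barked": 1.6, "slept": 1.0, "the": 0.3},
    "sat": {"the": 1.0},
    "slept": {"the": 1.0},
    "barked": {"the": 1.0},
}


def softmax(scores: list[float], temperature: float) -> list[float]:
    """Turn scores into probabilities; lower temperature sharpens the distribution."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp((s - top) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def generate(start: str, length: int, seed: int, temperature: float = 1.0) -> list[str]:
    """Deterministic scores plus a seeded random number generator give reproducible output."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(length):
        options = LOGITS[words[-1]]
        if temperature == 0:
            # Greedy decoding: always take the highest-scoring word; no randomness at all.
            words.append(max(options, key=options.get))
        else:
            probs = softmax(list(options.values()), temperature)
            words.append(rng.choices(list(options), weights=probs)[0])
    return words


print(generate("the", 8, seed=42) == generate("the", 8, seed=42))  # True: same seed, same text
print(generate("the", 4, seed=1, temperature=0))                   # ['the', 'cat', 'sat', 'the', 'cat']
```

Changing the seed can change the sampled sentence; changing nothing reproduces it exactly.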

Re-reading The Soul Gained and Lost: Artificial Intelligence as a Philosophical Project by Phil Agre as catharsis. <a href="https://pages.gseis.ucla.edu/faculty/agre/shr.html" rel="nofollow">Here</a>.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

I think it is meaningful that Marvin Minsky, sometimes called the "father of AI", seemed to hold human beings in low regard.<br><br>Here's John Searle in 1983:<br><p>Marvin Minsky of MIT says that the next generation of computers will be so intelligent that we will ‘be lucky if they are willing to keep us around the house as household pets.'<br></p>Here's Joseph Weizenbaum in 2007:<br><p>Professor Marvin Minsky of MIT, once pronounced—a belief he still holds—that ‘‘the brain is merely a meat machine.’’<br></p>He goes on to note that meat is dead and might be eaten or thrown out. Flesh is what's alive. He also draws attention to the word "merely", as in "nothing more than".<br><br>I share with Weizenbaum the belief that Minsky has clearly expressed a disdain for human intelligence. We're on the order of household pets. Our brains are no more than food or trash. Obviously Minsky doesn't speak for all AI researchers then or since, but his "meat machine" language is all over the place, and this disdain or even contempt for human intelligence and achievement is also common.<br><br>It definitely doesn't speak to a curiosity about intelligence, which I think requires at least a little bit of love and esteem.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/intelligence/" rel="tag">#intelligence</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>